HappyHorse on Hugging Face: Current Status and What to Expect

If you are searching for HappyHorse model weights on Hugging Face, the most important thing to know right now is that no verified public Hugging Face release has been confirmed yet.

What the HappyHorse Model Weights Status on Hugging Face Looks Like Right Now

Verified status from currently available sources

The clearest takeaway from the currently available research is simple: HappyHorse, often referenced as Happy Horse 1.0, has not been verified as a public Hugging Face release. Multiple secondary sources repeat the same core point. One information-collection source states that “Happy Horse 1.0 has not yet been officially open-sourced,” and goes further by saying there are no model weights, no inference code, and no official GitHub repository confirmed. That is the strongest practical signal for anyone trying to use it today.

A separate guide says the weights are still not released and points people toward AI Video Arena blind testing instead of local deployment. That same source also repeats two details that show up often in discussions: the model is described as 15B, and a full open-source release was expected around April 10. Those details are interesting, but they do not change the current usability picture. A rumor about timing is not the same thing as a repository you can clone or a checkpoint you can download.

Another write-up frames HappyHorse-1.0 as a “mystery #1 AI video model” and says it still needs the basics before it becomes a real option: an actual GitHub repo, actual weights, and actual inference code. That wording matters because it separates benchmark hype from deployment reality. If the files and code are not there, the model is not ready for normal Hugging Face workflows.

There is also a report saying HappyHorse 1.0 appeared on the Artificial Analysis Video Arena in early April 2026 with no announcement, no named team, and no public weights. That lines up with the rest of the evidence: people can see references to the model in rankings and comparisons, but not in the form ML engineers need for testing, finetuning, or production experiments.

What has not been confirmed yet

HappyHorse / Happy Horse 1.0 is repeatedly described in current secondary sources as not officially open-sourced yet. Across the provided research, no verified Hugging Face model card, downloadable checkpoint, inference code, or official GitHub repository has been confirmed. That means there is no solid evidence yet of a real Hugging Face package you can trust for local use.

The missing pieces are specific and important. No source verifies a model card on Hugging Face. No source verifies checkpoint files. No source verifies runnable inference code. No source verifies a license. One collection page references happyhorses.io for official demo and updates, but that is still not proof of a Hugging Face distribution.

Several sources describe the project as pre-release, blind-test, or rumor-phase rather than a stable public release. That language is consistent across the material. You can treat that as a warning label: public attention is ahead of the public artifact set.

So if you are looking for HappyHorse weights on Hugging Face, the safest assumption right now is that there is no official HF download available unless and until an official release actually appears. If a random listing turns up, treat it as unverified until it includes the normal release signals: clear ownership, files, code, and license. Right now, the evidence points to curiosity and demos, not a confirmed open-source drop.

How to Check Whether HappyHorse 1.0 Has Actually Appeared on Hugging Face

Fast verification checklist

When a Hugging Face page finally appears claiming to host HappyHorse 1.0, the fastest way to verify it is to check five things in order. First, does the page have a real model card with technical details instead of one vague sentence? Second, is the repository owner clearly tied to the project team or to an official announcement? Third, are downloadable weight files actually present? Fourth, are there runnable inference instructions? Fifth, is there a license that explains permitted use?

That checklist matters because unofficial uploads are common whenever a model gets buzz from leaderboards or blind tests. A renamed checkpoint, a placeholder repo, or a page that only links to screenshots is not a real release. For a video model, you need enough information to reproduce generation, not just enough branding to catch search traffic.

Start with repository identity. If the uploader has no connection to official project pages or public announcements, keep your guard up. The research specifically points to happyhorses.io as a location associated with official demo and updates, and it also mentions happy-horse.net as a concrete browser demo path. If a Hugging Face listing appears, compare the owner name, linked websites, and announcement language against those known locations.
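The link comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not an authoritative check: the function name `linked_known_domains` is hypothetical, the example URLs are made up, and the two domains are simply the ones the research mentions. Whether those domains are truly official is itself unverified, so a match is a hint rather than proof.

```python
from urllib.parse import urlparse

# Domains the sources associate with the project. Their official status is
# an assumption carried over from the research, not something verified here.
KNOWN_DOMAINS = {"happyhorses.io", "happy-horse.net"}


def linked_known_domains(urls):
    """Return which known project domains appear among a page's links."""
    found = set()
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        # Exact host match only, so lookalike hosts such as
        # happyhorses.io.example.com are not counted.
        if host in KNOWN_DOMAINS:
            found.add(host)
    return found


# Made-up links, as if copied by hand from a model card.
card_links = ["https://www.happyhorses.io/updates", "https://example.com/mirror"]
print(linked_known_domains(card_links))
```

An empty result does not prove a listing is fake, and a match does not prove it is real, since anyone can paste a link into a README. It only tells you where to look next.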

Next, inspect the files tab. A trustworthy release should contain weight artifacts, not just README text. Look for checkpoint files, safetensors, shard files, config files, tokenizer or prompt formatting notes if needed, and generation scripts if the stack is custom. Since current sources specifically note that both weights and inference code are missing, a release that only includes one of those pieces is still incomplete.
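As a rough sketch of that files-tab inspection, the snippet below sorts a file listing into weights, configs, and code. The extension groups and function names are assumptions chosen for illustration, since no real HappyHorse repository layout is known. The listing itself is made up; a real one could be fetched with `huggingface_hub.list_repo_files` once a repository actually exists.

```python
from pathlib import PurePosixPath

# Hypothetical extension groups; adjust once a real release shows its layout.
WEIGHT_EXTS = {".safetensors", ".bin", ".pt", ".pth", ".ckpt"}
CONFIG_NAMES = {"config.json", "model_index.json"}
CODE_EXTS = {".py", ".ipynb"}


def summarize_repo_files(filenames):
    """Group a repo file listing into weights, configs, and code."""
    summary = {"weights": [], "configs": [], "code": []}
    for name in filenames:
        path = PurePosixPath(name)
        if path.suffix.lower() in WEIGHT_EXTS:
            summary["weights"].append(name)
        elif path.name in CONFIG_NAMES:
            summary["configs"].append(name)
        elif path.suffix.lower() in CODE_EXTS:
            summary["code"].append(name)
    return summary


def looks_complete(summary):
    """True only when both weights and runnable code are present."""
    return bool(summary["weights"]) and bool(summary["code"])


# Made-up placeholder listing: a README and nothing else of substance.
placeholder_repo = ["README.md", ".gitattributes"]
print(looks_complete(summarize_repo_files(placeholder_repo)))  # False
```

File names alone cannot tell you whether a checkpoint is genuine, so this only filters out obvious placeholders.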

Signals that a release is official

An official release usually carries several matching signals at once. The model card should explain what HappyHorse 1.0 is, what tasks it supports, and how to run it. The repository owner should match the project’s public identity. There should be usable download files, not empty placeholders. There should be example commands or notebooks showing inference. There should also be a license that tells you whether personal, research, or commercial usage is allowed.
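One way to keep those five signals honest is to record them explicitly instead of judging a page by feel. The sketch below assumes you fill in each field by hand after reading the page; the class and function names are hypothetical and nothing here queries Hugging Face.

```python
from dataclasses import dataclass, fields


@dataclass
class ReleaseSignals:
    """The five signals from the text, filled in manually per listing."""
    detailed_model_card: bool
    owner_matches_project: bool
    weights_downloadable: bool
    inference_instructions: bool
    license_present: bool


def missing_signals(signals):
    """Names of the signals that are still absent."""
    return [f.name for f in fields(signals) if not getattr(signals, f.name)]


def verdict(signals):
    """Summarize a listing: complete only when all five signals are present."""
    missing = missing_signals(signals)
    if not missing:
        return "all five signals present"
    return "unverified, missing: " + ", ".join(missing)


# A made-up "coming soon" page: a license file but nothing else.
coming_soon_page = ReleaseSignals(
    detailed_model_card=False,
    owner_matches_project=False,
    weights_downloadable=False,
    inference_instructions=False,
    license_present=True,
)
print(verdict(coming_soon_page))
```

Treating the result as all-or-nothing is a deliberate choice here: a listing with four of five signals is still not something you can run with confidence.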

Do not treat unofficial uploads, renamed checkpoints, or “coming soon” model pages as the real thing. A lot of pages can look polished while still offering nothing usable. For this model in particular, that risk is higher because current coverage is driven by ranking excitement and mystery-model attention. A fake or incomplete listing could spread fast just because people are searching aggressively.

Cross-check any Hugging Face page against official demo or update locations mentioned in the research, including happyhorses.io and public announcements. If the HF page is real, those channels should reference it directly or indirectly. If they stay silent while a random HF account claims to host the release, that is a red flag.

Also verify whether the listing includes actual weights and runnable code. The source set is explicit that both are currently missing, so any genuine status change should be obvious. If the page still lacks downloadable files, install steps, or a reproducible inference workflow, then the practical answer has not changed. For anyone tracking a HappyHorse release on Hugging Face, the key test is not whether a page exists. It is whether the page lets you run the model end to end.

Where You Can Use HappyHorse Before Any Weights Appear on Hugging Face

Current access options

Right now, the most concrete path for trying HappyHorse is not Hugging Face at all. The research points to browser-based access, especially the demo at happy-horse.net, as the most straightforward option currently mentioned. There is also a reference to official demo and update locations at happyhorses.io. Those are the places worth checking first if the goal is to see outputs instead of waiting on repositories.

That browser-first reality matches what the other sources say about the project still being in a pre-release or blind-test phase. Instead of downloadable artifacts, access is being described through demo pages and ranking environments. That means you should approach HappyHorse as something you can evaluate from the outside for now, not something you can integrate deeply into a pipeline.

AI Video Arena or similar blind-test environments are also useful if you want to compare generation quality. One source specifically says the model’s weights are not yet released and recommends trying it through AI Video Arena blind testing instead. That is a practical route when you want side-by-side judgments on motion, prompt following, style consistency, or scene coherence without needing local files.

Best temporary workflow for testing

The best way to use the current demo phase productively is to act like you are building your own evaluation harness manually. Save every prompt you submit. Keep the exact text, any negative prompts if the interface supports them, and the date. Download or screen-record results when possible. Label outputs by prompt category: camera motion, human action, object interaction, lighting shifts, text rendering, and fast scene changes. That gives you a baseline you can compare later if public weights arrive.

A simple spreadsheet works well here. Create columns for prompt, seed if visible, duration, resolution, motion quality, temporal consistency, subject identity consistency, and artifacts. If you test through a blind arena, note which side you preferred and why. Over ten or twenty prompts, patterns become obvious. You will quickly see whether HappyHorse handles dynamic scenes better than competing systems or whether it mainly shines on a narrower set of prompts.
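If you prefer a script to a spreadsheet, the same columns fit a plain CSV file. The sketch below is one possible layout: the column identifiers, the extra `notes` column, and the 1-to-5 scoring scale are assumptions for illustration, not anything defined by the demo or the arena.

```python
import csv
import io

# Columns from the text, plus a free-form "notes" column (an addition here)
# for arena preferences and the reasons behind them.
COLUMNS = [
    "prompt", "seed", "duration_s", "resolution", "motion_quality",
    "temporal_consistency", "identity_consistency", "artifacts", "notes",
]


def append_result(stream, row, write_header=False):
    """Write one evaluation row; fields not supplied are left blank."""
    writer = csv.DictWriter(stream, fieldnames=COLUMNS)
    if write_header:
        writer.writeheader()
    writer.writerow({col: row.get(col, "") for col in COLUMNS})


# In-memory demo; for a real log, pass a file opened with
# open("happyhorse_eval.csv", "a", newline="") instead.
log = io.StringIO()
append_result(
    log,
    {
        "prompt": "a horse galloping across a beach at sunset",
        "motion_quality": 4,          # hypothetical 1-5 score
        "temporal_consistency": 3,    # hypothetical 1-5 score
        "notes": "preferred left side in blind test; fewer leg artifacts",
    },
    write_header=True,
)
print(log.getvalue())
```

Leaving unknown fields blank, such as a seed the interface never shows, keeps the log accurate about what you actually observed.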

This approach is especially useful if you care about comparing open-source AI video generation models. Without local files, you cannot benchmark throughput or VRAM use, but you can still benchmark output behavior. That matters later when an official release shows up and you need to decide whether to invest time in setup.

Local deployment is not currently realistic without public weights and inference code. The sources are consistent on that point. No matter how tempting it is to search for mirrors or private dumps, there is no verified path in the research for a normal “clone repo, download weights, run script” workflow. For now, the smart temporary setup is browser demo plus disciplined testing notes. If the project eventually ships a proper release, you will already have a prompt suite ready for day-one evaluation.

What We Know About the Model: 15B Size, Rankings, and Release Rumors

Claims readers will see repeated online

A few claims about HappyHorse show up repeatedly, and it helps to sort them by confidence level. The first is model size: one guide describes HappyHorse as a 15B model. That figure is useful because it hints at likely hardware demands if the project eventually becomes downloadable, but it is still only as strong as the secondary source behind it. Until a model card or official docs appear, treat 15B as a reported specification, not a finalized one.

The second repeated claim is that HappyHorse is a top-ranked or mystery AI video model. One source literally calls it the “mystery #1 AI video model,” and another says it appeared on the Artificial Analysis Video Arena in early April 2026 with no announcement, no named team, and no public weights. That matches the unusual pattern many people have noticed: the model is talked about through rankings and arena appearances before it is talked about through release engineering.

A third commonly repeated claim is leaderboard performance. One article says HappyHorse beat Seedance 2.0 on Artificial Analysis and suggests open weights may arrive soon. That is exactly the kind of line that gets attention. It also explains why so many people are looking for an open-source release of HappyHorse 1.0. If a model beats a known competitor, people naturally expect a Hugging Face drop right behind it.

How to treat unverified timelines

The reported timeline rumor is that a full open-source release was expected around April 10. The important word there is “expected.” That date comes from a guide article, not from a verified official release announcement in the research provided. So it should be treated as an unverified expectation, not a guaranteed launch date.

That distinction matters because benchmark excitement can create a false sense of availability. A high ranking does not equal downloadable weights. An arena appearance does not equal an inference repo. Even a real 15B architecture claim does not tell you whether the release will support Diffusers, a custom pipeline, or a completely separate runtime.

The right way to read the current signal stack is this: there is enough smoke to believe HappyHorse is real and notable, but not enough release evidence to deploy it. Rankings are useful for deciding what to watch. They are not a substitute for weights, code, license, and documentation.

So if you are tracking an open-source transformer video model, or trying to decide whether you will be able to run an AI video model locally in a future setup, keep those categories separate. Benchmarks answer “Is it interesting?” Deployment artifacts answer “Can I actually use it?” For HappyHorse today, the first bucket has a lot of noise and excitement. The second bucket is still empty based on the verified research.

What to Expect When HappyHorse Model Weights Finally Launch on Hugging Face

Files and documentation to look for

When HappyHorse finally launches in a real, usable form, the release should include more than a single checkpoint file. At minimum, look for a complete model card, checkpoint files, inference examples, hardware guidance, prompt format instructions, version notes, and a clear license. If even one of those pieces is missing, expect friction.

The model card should explain supported tasks such as text-to-video and possibly image-to-video if that is part of the release. It should also specify output resolutions, frame counts or duration ranges, and any content or prompt limitations. For a video model, generation settings matter a lot, so details like guidance scale, sampling steps, scheduler expectations, or prompt template format should be documented somewhere obvious.

Checkpoint packaging matters too. A production-friendly release usually provides organized files, safer serialization formats like safetensors where applicable (which avoid the arbitrary-code-execution risk of pickle-based checkpoints), and naming that makes versions easy to distinguish. If the first release has vague filenames, no changelog, and no explanation of what changed between revisions, plan for extra testing before trusting it.

Questions to answer before downloading

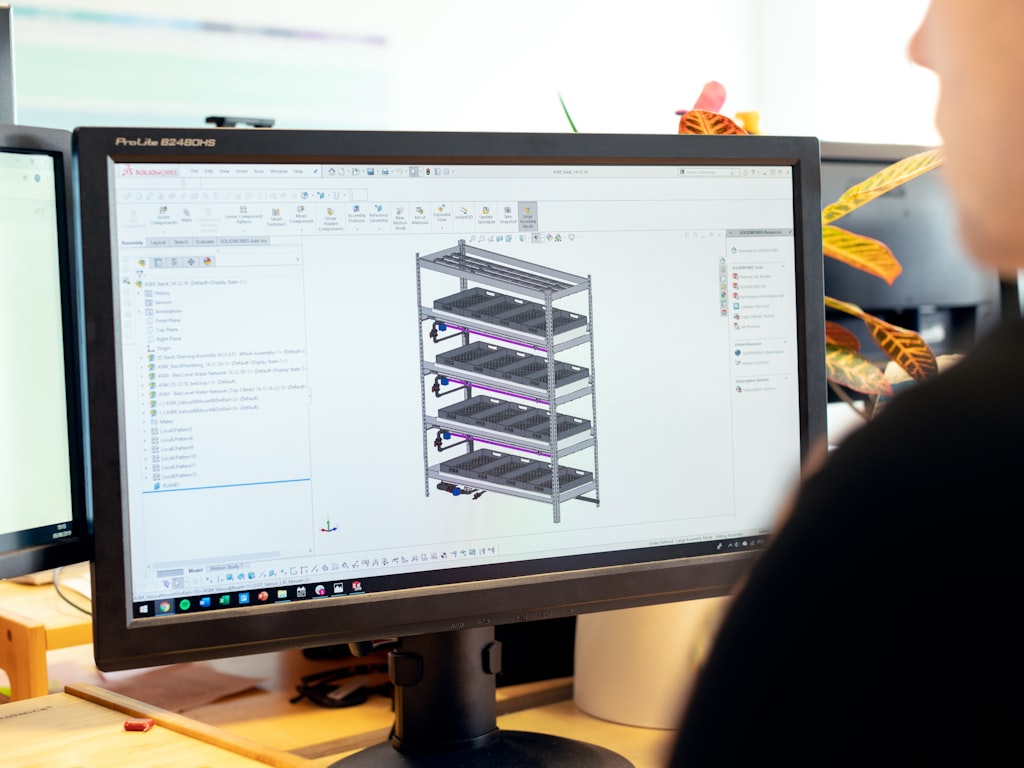

Compatibility is going to be one of the first real questions. People will want to know whether HappyHorse works with Transformers, Diffusers, or a custom inference stack. Right now, no verified support details exist in the research, so it is worth staying flexible. A lot of video releases end up requiring their own scripts, environment setup, and scheduler logic rather than dropping neatly into an existing pipeline.

Hardware guidance is another big one. If the model really is around 15B, the gap between “can technically load” and “can generate usefully” may be large. Look for VRAM recommendations, precision support, multi-GPU notes, CPU offload options, expected generation times, and whether the repo includes low-memory inference paths. Those details determine whether a release is practical for your workstation or only for larger cloud instances.
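To make the "can technically load" side of that gap concrete, here is a back-of-the-envelope estimate of weight memory alone for a 15B-parameter model. The 15B figure is the reported, unconfirmed size, and nothing in the research says which precisions a release would support, so treat the dtype list as an assumption. The estimate covers only the parameters of the main model; activations, any text encoder or VAE, and framework overhead all come on top.

```python
# Storage cost per parameter for common numeric formats.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, dtype):
    """Memory needed just to hold the weights, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


REPORTED_PARAMS = 15e9  # reported size, not confirmed by any model card

for dtype in ("fp32", "bf16", "int8", "int4"):
    print(f"{dtype}: {weight_memory_gb(REPORTED_PARAMS, dtype):.1f} GB")
# fp32: 60.0 GB
# bf16: 30.0 GB
# int8: 15.0 GB
# int4: 7.5 GB
```

Even at 16-bit precision, roughly 30 GB for weights alone exceeds a 24 GB consumer GPU, which is why offload and low-memory paths are worth looking for in any eventual release notes.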

You should also check the license's commercial-use terms before doing anything serious. Open-source availability does not automatically mean unrestricted business use. A release can be publicly downloadable while still restricting commercial deployment, derivative hosting, or redistribution. For product work, license clarity is just as important as benchmark quality.

Finally, watch for sample outputs, changelogs, and reproducibility details. Sample outputs let you compare official claims against your own prompt tests. Changelogs show whether the release is stable or moving fast. Reproducibility details, including seeds and parameter settings, tell you whether the team is giving you enough information to validate results. If those elements are present, the release is much more likely to be ready for real evaluation instead of just early curiosity. And if you are specifically waiting for HappyHorse weights on Hugging Face, these are the signs that the launch is complete enough to matter.

Best Alternatives to Use Now If You Want an Open Source AI Video Generation Model

When to wait for HappyHorse

Waiting makes sense when your main goal is to evaluate HappyHorse specifically rather than to ship something immediately. If you are already excited by the 15B claim, the mystery-model momentum, or the report that it beat Seedance 2.0 on Artificial Analysis, keeping it on a watchlist is reasonable. The same goes if your current need is output comparison rather than local deployment. In that case, using the browser demo and arena-style tests can already tell you a lot.

Waiting also makes sense if you care deeply about this exact model’s eventual release profile. Maybe you want to see whether it lands as a serious open-source transformer video model with strong docs and a permissive license. Maybe you want to know whether it supports an open-source image-to-video workflow in addition to text-to-video. Those are valid reasons to hold off before committing engineering time elsewhere.

When to choose another model today

If you need a working stack now, choose another open-source AI video generation model today and revisit HappyHorse later. The decision becomes easy once the requirements are practical: downloadable weights, local run workflow, documentation quality, and license clarity. Those are exactly the areas where HappyHorse is still unverified.

Use a simple evaluation grid for alternatives. First, confirm that weights are downloadable from a trustworthy host. Second, confirm that the repo includes runnable inference steps with environment instructions. Third, check whether the model supports the mode you need: text-to-video, image-to-video, editing, or interpolation. Fourth, verify what hardware is required to run the model locally. Fifth, read the license carefully and decide whether it works for research, client work, or a commercial app.
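The grid translates naturally into a small filter over candidate models. In the sketch below, every entry is a placeholder: `model-a` and `model-b` are not real models, their numbers are invented, and the field names are hypothetical. The point is only to show how the five criteria combine.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One alternative model, described by hand after reading its repo."""
    name: str
    weights_downloadable: bool
    runnable_inference: bool
    supported_modes: frozenset
    min_vram_gb: float
    license_fits_use: bool


def failed_criteria(candidate, needed_mode, available_vram_gb):
    """Return the grid criteria a candidate fails; an empty list means it passes."""
    failures = []
    if not candidate.weights_downloadable:
        failures.append("weights")
    if not candidate.runnable_inference:
        failures.append("inference steps")
    if needed_mode not in candidate.supported_modes:
        failures.append("mode")
    if candidate.min_vram_gb > available_vram_gb:
        failures.append("hardware")
    if not candidate.license_fits_use:
        failures.append("license")
    return failures


# Placeholder entries, not real models or real specifications.
candidates = [
    Candidate("model-a", True, True, frozenset({"text-to-video"}), 24.0, True),
    Candidate("model-b", True, True,
              frozenset({"text-to-video", "image-to-video"}), 16.0, False),
]
for c in candidates:
    print(c.name, failed_criteria(c, "image-to-video", 24.0))
```

Returning the list of failures, rather than a single yes or no, makes it easier to see which candidates are one fixable issue away from being usable.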

This is also where your actual requirement matters. If you need an open-source image-to-video model, prioritize alternatives that explicitly support image conditioning and provide examples. If you need to run a video model locally, prioritize mature repos with VRAM guidance, community troubleshooting, and active maintenance. If you care about commercial use under an open-source license, do not settle for vague README language; look for explicit terms.

A short decision framework helps. For active projects with deadlines, use current alternatives that already have weights, code, and a license. For exploration and model scouting, keep HappyHorse on a watchlist and monitor happy-horse.net, happyhorses.io, and any credible public announcement channels. The model may turn into a standout release, but right now deployment certainty belongs to alternatives that already publish the full package.

That is the clean split: use available open-source options for real work today, and keep HappyHorse in the “verify on release” bucket until an official Hugging Face listing, runnable code, and licensing details are all visible together.

Conclusion

The smartest way to handle HappyHorse right now is to separate what is exciting from what is usable. The exciting part is easy to see: references to a 15B model, strong ranking chatter, an Artificial Analysis presence, and reports that it may have beaten Seedance 2.0. The usable part is still missing in the verified research: no confirmed Hugging Face model card, no confirmed downloadable weights, no confirmed inference code, no confirmed official GitHub repository, and no confirmed license.

For now, treat browser access through happy-horse.net and official update references like happyhorses.io as the most concrete path for testing. Use that access well: save prompts, document outputs, compare motion quality, and build a reusable evaluation set. That puts you in a strong position when a release finally lands.

When any supposed release appears, verify every signal carefully. Check ownership, files, instructions, and licensing before trusting it. Once official HappyHorse model weights, code, and usage terms are actually published on Hugging Face, you will be able to evaluate the model on real engineering criteria instead of rumor, screenshots, and leaderboard noise.