HappyHorse Text-to-Video: Prompting Guide and Best Results

If you want better HappyHorse videos on the first few tries, the fastest win is using a clear prompt structure instead of longer, more complicated wording.

How HappyHorse text to video prompting works

What HappyHorse can generate from text, images, and references

HappyHorse.AI is built around three workflows you can use immediately: Text to Video, Image to Video, and Reference to Video. On the product pages, HappyHorse says you can provide text or images and get cinema-grade videos with intelligent soundtracks, plus cinematic 1080p output, multi-shot storytelling, motion synthesis, and auto-generated soundtracks. That matters for prompting because you are not just describing a still frame. You are giving the system enough structure to decide what appears, how it moves, how the camera behaves, and what kind of sequence it becomes.

Text to Video is the cleanest place to start. You write a scene, and HappyHorse interprets it into motion, framing, and visual style. Image to Video works best when you already have a strong keyframe, product image, portrait, or concept art and want motion added around that look. Reference to Video is the most useful mode when text alone keeps drifting away from the character design, composition, color palette, or object shape you actually want. If your goal is consistency, especially for branded visuals or a specific hero subject, reference-driven generation usually saves time.

HappyHorse’s own examples show the kind of language it responds well to. The showcased prompts are not vague. They combine a subject, motion, and camera idea in one line: a semi-truck drifting into a robot transformation with an epic low-angle hero shot, plus sparks, smoke, and lens flares; a rapid arcing transition into a top-down animated map of Taipei 101; a sweeping claymation shot of the Hogwarts Express at twilight. Those examples tell you the model likes visible action, camera direction, and style cues that can be shown on screen.

What to expect from the two-model side-by-side workflow

One of the most useful parts of HappyHorse 1.0 is also one of the least obvious: when you submit a prompt or reference image, the system generates two outputs side by side from different unidentified models. You are not told which model made which result. That sounds minor, but in practice it changes how you should prompt.

Because both outputs come from different models, your first prompt should be easy to compare. If you overload it with five actions, three styles, and conflicting camera notes, it becomes hard to tell whether the problem was your wording or the model’s interpretation. Cleaner prompts make side-by-side comparison much more useful. You can quickly judge which output handled subject accuracy better, which one produced smoother motion, and which one followed your camera directions more faithfully.

That comparison loop is the real advantage. Instead of treating the first generation like a final answer, use it as a test. If one side nails the atmosphere but the motion is messy, keep the atmosphere wording and simplify the action. If one side respects your low-angle shot and the other ignores it, you now know your camera instruction is at least understandable and worth keeping. The first run is not about perfection. It is about writing a prompt that makes the differences between outputs obvious enough to refine.

HappyHorse also markets itself as free to start, with 10 credits and no subscription required on the HappyHorse 1.0 page, so it makes sense to use those early generations for structured testing rather than random experimentation. That is the core of effective HappyHorse text-to-video prompting: make the prompt clear enough that you can actually evaluate what changed.

The best HappyHorse text to video prompting formula

A simple prompt structure for beginners

The best starting formula is simple: subject + setting + action + camera + style/constraints. That structure matches beginner-friendly prompting guidance for realistic text-to-video tools and lines up closely with the language HappyHorse uses in its own showcased examples.

Beginners usually get stronger results from shorter prompts because shorter prompts force clarity. If the scene has one main subject, one environment, and one readable action, the model has fewer chances to invent extra elements or blend mismatched ideas. Long prompts are not automatically smarter. They often create blurry priorities. When a prompt says “cinematic, realistic, anime, dreamy, chaotic, dramatic, epic, documentary-style” all at once, the output usually splits the difference badly.

A strong prompt also gives the subject a role and purpose. That does not mean writing a paragraph of backstory. It means making the scene’s focus obvious. “A firefighter walking through smoke toward a trapped doorway” is stronger than “a firefighter in a smoky environment” because the role and purpose tell the model what the subject is doing and why the movement matters. The same trick works for product shots, character shots, and stylized animation. Purpose creates direction.

The 5-part prompt template you can reuse

Use this five-part template every time:

1. Subject — who or what is the focus?

2. Setting — where is it happening?

3. Action — what visible movement happens?

4. Camera — how is it framed or moving?

5. Style/constraints — what visual look and limitations should apply?

A reusable base template:

[Subject] in [setting], [action]. [Camera direction]. [Style]. [Constraints].

Here are three versions you can copy and adapt.

Realistic template

A [subject] in [specific setting], [clear action]. [Camera shot and movement]. Realistic detail, natural motion, cinematic lighting. One main subject, consistent environment, smooth pacing.

Cinematic template

A [subject with role/purpose] in [specific setting], [dynamic action with one focal movement]. [Low-angle / tracking / wide / close-up camera direction]. Cinematic 1080p look, dramatic lighting, sparks, smoke, lens flares if appropriate. Keep the scene coherent and focused.

Stylized template

A [subject] in [setting], [simple readable action]. [Camera direction]. Stylized animation / claymation / diorama aesthetic, strong shapes, clean motion, consistent color palette, one main action.

A few prompt-building rules make this formula work better:

- Put the most important noun early.

- Name one environment, not three.

- Use visible verbs like walking, turning, drifting, reaching, approaching, transforming.

- Pick one dominant camera move.

- Add constraints that reduce confusion: one main subject, consistent background, slow pacing, no extra characters if needed.
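The five-part template can also be kept as a small helper so every test prompt has the same shape. The sketch below is illustrative only: `ScenePrompt` and `render` are hypothetical names, not part of any HappyHorse tooling, and the output is plain text to paste into the prompt box.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScenePrompt:
    """Five-part prompt: subject, setting, action, camera, style/constraints."""
    subject: str
    setting: str
    action: str
    camera: str
    style: str
    constraints: str = ""  # optional; skipped when empty

    def render(self) -> str:
        # Mirrors the base template:
        # [Subject] in [setting], [action]. [Camera direction]. [Style]. [Constraints].
        sentences = [
            f"{self.subject} in {self.setting}, {self.action}",
            self.camera,
            self.style,
            self.constraints,
        ]
        # Drop empty parts and make sure each sentence ends with one period.
        return " ".join(s.strip().rstrip(".") + "." for s in sentences if s.strip())


prompt = ScenePrompt(
    subject="A chef",
    setting="a stainless-steel kitchen",
    action="flipping vegetables in a frying pan",
    camera="Medium shot with a slow push-in",
    style="Realistic cinematic lighting",
    constraints="One subject, smooth motion, consistent environment",
)
print(prompt.render())
```

Keeping the parts as separate fields makes it easy to swap one of them later without touching the rest of the prompt.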

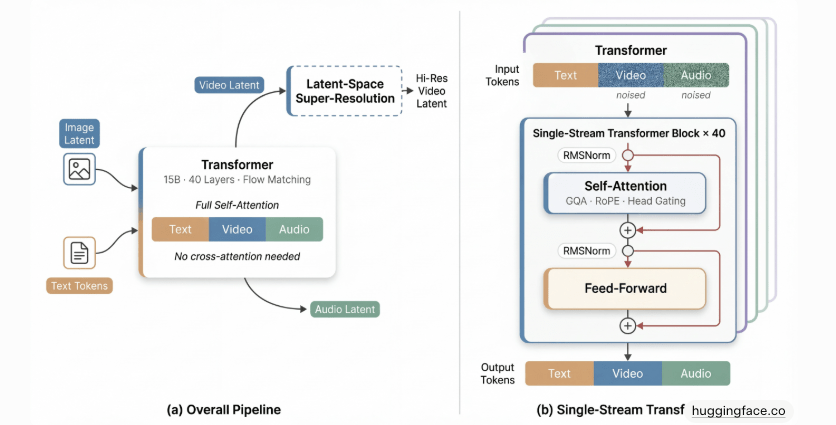

If you arrived here while researching open-source AI video generation models or how to run a video model locally, remember that HappyHorse prompting behaves more like directing a scene than tweaking a model stack. Even if you know your way around an open-source transformer video model or an open-source image-to-video model, the practical win here is not complexity. It is clean scene design.

For reliable HappyHorse text-to-video prompting, this five-part structure is the fastest repeatable method.

HappyHorse text to video prompting examples for better results

Short prompts that work

Short prompts can work extremely well when they stay specific. Here are direct examples you can paste and adapt.

Realistic street scene

A woman in a red raincoat walks through a neon-lit alley at night, reflections on wet pavement. Slow tracking shot from behind. Realistic cinematic lighting, one subject, steady motion.

Nature shot

A white horse runs across a foggy field at sunrise. Wide shot with a gentle side pan. Realistic, soft golden light, natural motion, calm pacing.

Product shot

A silver wristwatch rotating on a black reflective pedestal. Close-up shot with a slow push-in. Luxury commercial style, sharp highlights, clean background, smooth motion.

Stylized animation

A tiny bakery on a snowy street, warm light glowing from the windows as a baker opens the door. Slow top-down tilt into a front view. Claymation style, cozy textures, gentle motion.

These work because each prompt has one clear subject, one readable action, and one camera instruction.

Detailed cinematic prompts inspired by HappyHorse examples

HappyHorse’s own examples lean into cinematic action and stylized transitions, so here are stronger versions in that spirit.

Cinematic action

A futuristic semi-truck speeds down a desert highway at dusk, drifting hard into a stop before transforming into a giant robot. Epic low-angle hero shot, then a slow upward tilt as metal panels shift. Sparks, smoke, and lens flares, cinematic 1080p, dramatic sunset lighting, one focal subject.

Top-down transition scene

A sleek drone flies toward Taipei 101 at blue hour, city lights glowing below. Rapid arcing transition into a top-down animated map view centered on the tower. Clean motion synthesis, cinematic city atmosphere, smooth transition, no extra focal subjects.

Claymation fantasy look

The Hogwarts Express rolls through a miniature countryside at twilight, steam rising as it crosses a small bridge. Sweeping claymation shot from the side, then a gentle push-in toward the engine. Handmade textures, warm lantern light, stylized smoke, diorama scale, smooth pacing.

Product hero reveal

A black sports car sits in a dark studio as narrow light strips sweep across the bodywork. Low-angle close-up tracking along the front grille, then a wide reveal. Glossy reflections, cinematic contrast, subtle smoke, premium commercial look, one car only.

Comparing a weak prompt with an improved one makes the difference obvious.

Weak prompt

A robot truck transforming dramatically in a cool cinematic way.

Improved prompt

A red semi-truck skids sideways on a desert road at dusk, then transforms into a towering robot while dust kicks up around the wheels. Epic low-angle hero shot with a slight orbit around the transformation. Sparks, smoke, lens flares, cinematic lighting, one clear subject, smooth motion.

What changed? The improved version adds a precise subject, a setting, a visible action sequence, a camera behavior, and visual effects that fit the scene.

Here are reference-driven prompts for the platform’s other workflows.

Reference to Video: character

Use the uploaded reference image of the armored knight. Keep the same armor design, silver-blue palette, and front chest emblem. The knight turns slowly toward camera in a torch-lit stone hallway. Medium shot with a slow push-in. Cinematic realism, consistent face and costume, no extra characters.

Reference to Video: product

Use the uploaded sneaker image as the exact design reference. The shoe rotates on a white studio platform while the camera circles slightly from a low angle. Clean commercial lighting, realistic material detail, minimal background, smooth motion.

Good HappyHorse text-to-video prompts do not sound poetic for the sake of it. They sound direct, filmable, and easy for the model to stage.

How to control motion, camera, and style in HappyHorse text to video prompting

Motion words that create cleaner video action

Motion language is one of the biggest quality levers in video prompting. If the action is vague, the video often turns vague too. The most useful verbs are the ones you can clearly imagine on screen: walking, running, turning, drifting, approaching, reaching, circling, gliding, panning, zooming, arcing.

For cleaner output, use one main action and, at most, one secondary transition. “A dancer spins slowly on stage” is easier to render well than “a dancer spins, jumps, falls, laughs, points, and teleports through changing environments.” HappyHorse’s showcased prompts reinforce this. The truck drifts and transforms. The camera makes a rapid arcing transition. The claymation train gets a sweeping shot. The action verbs are visible and controllable.

A few reliable motion phrases:

- walks slowly toward camera

- turns to face the viewer

- drifts into a stop

- smoke rising gently

- camera glides past

- slow push-in

- wide pan across the scene

- arc around the subject

When motion gets messy, simplify the verb first. Replace “moves dynamically” with “walks forward.” Replace “epic action” with “runs through smoke.” Visible language beats abstract hype.

Camera directions and visual style cues to include

Camera instructions should be concrete and limited. The clearest phrases are:

- low-angle shot

- tracking shot

- top-down view

- close-up

- wide shot

- slow push-in

- over-the-shoulder shot

- gentle orbit

- side pan

Use one main shot type and one movement. For example: “Close-up with a slow push-in” or “Wide shot with a gentle side pan.” If you ask for a close-up, top-down, low-angle, drone shot, and handheld feel all in one line, the output will often split or ignore parts of the request.
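If you draft many prompts, a rough self-check can flag camera directions that stack competing instructions. The sketch below is a heuristic under stated assumptions: the term lists come from the camera phrases above, and splitting them into angle, framing, and movement groups is this example's own choice, not a HappyHorse rule. `check_camera` is a hypothetical helper, and its warnings are prompts to re-read the line, not hard errors.

```python
import re

# Assumed grouping of the camera phrases listed above.
CAMERA_TERMS = {
    "angle": ("low-angle", "top-down", "over-the-shoulder"),
    "framing": ("close-up", "wide shot"),
    "movement": ("tracking", "push-in", "orbit", "pan"),
}


def check_camera(camera_direction: str) -> list[str]:
    """Warn when a camera direction names more than one term per group."""
    text = camera_direction.lower()
    warnings = []
    for category, terms in CAMERA_TERMS.items():
        # Whole-word match so "pan" does not match "panning" or "panel".
        found = [t for t in terms if re.search(rf"\b{re.escape(t)}\b", text)]
        if len(found) > 1:
            warnings.append(f"multiple {category} terms: {', '.join(found)}")
    return warnings


print(check_camera("Close-up with a slow push-in"))
print(check_camera("Close-up, top-down, low-angle drone shot with a handheld feel"))
```

The first call returns an empty list; the second reports the two competing angles. Pass only the camera sentence, not the whole prompt, so that a word like "pan" in "frying pan" is not counted as a camera move.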

Style cues work best when they describe a single visual lane. HappyHorse clearly supports cinematic looks, stylized animation, and claymation-inspired scenes based on its examples. Useful style phrases include:

- realistic detail

- cinematic lighting

- dramatic contrast

- claymation textures

- stylized animation

- diorama look

- soft golden-hour light

- sparks, smoke, lens flares

- clean commercial studio lighting

Constraints are just as important as style. Add them when you want reliability:

- one main subject

- consistent environment

- smooth motion

- slow pacing

- no extra characters

- minimal background clutter

- keep the same design throughout

This matters whether you usually work with hosted tools, compare systems against an open-source AI video generation model, check whether an open-source model license permits commercial use, or test an open-source image-to-video model. HappyHorse responds best when the visual request is tightly bounded. One subject, one action, one camera idea, one style direction. That combination gives the system enough guidance to create strong motion without guessing too much.

A practical HappyHorse text to video prompting workflow to improve every generation

What to do on your first prompt

Start with the simplest version of the scene that still contains all five core parts: subject, setting, action, camera, and style/constraints. Do not begin with your “ultimate” mega-prompt. Begin with a prompt that is easy to judge in the two-output comparison.

A strong first test might look like this:

A chef in a stainless-steel kitchen flips vegetables in a frying pan. Medium shot with a slow push-in. Realistic cinematic lighting, one subject, smooth motion, consistent environment.

That first generation should answer basic questions fast: Does the chef look right? Is the pan-flip readable? Does the camera stay coherent? Does the kitchen remain consistent? Does the soundtrack fit the mood if HappyHorse auto-generates one?

Because HappyHorse generates two side-by-side outputs from hidden models, score both versions on the same criteria every time:

- Subject accuracy: does the main subject match the prompt?

- Motion smoothness: does the action flow naturally?

- Camera coherence: does the framing behave as requested?

- Scene consistency: do objects and background stay stable?

- Soundtrack fit: does the audio feel aligned with the scene?
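To keep the scoring consistent between runs, the five criteria can be recorded in a small scorecard. The sketch below assumes you rate each output by hand on a 1 to 5 scale; that scale, the `left`/`right` labels, and the function names are all illustrative choices, since HappyHorse does not expose scores itself.

```python
CRITERIA = (
    "subject_accuracy",
    "motion_smoothness",
    "camera_coherence",
    "scene_consistency",
    "soundtrack_fit",
)


def compare_outputs(left: dict[str, int], right: dict[str, int]) -> dict[str, str]:
    """For each criterion, report which output scored higher: 'left', 'right', or 'tie'."""
    result = {}
    for name in CRITERIA:
        l, r = left[name], right[name]
        result[name] = "left" if l > r else "right" if r > l else "tie"
    return result


def weakest_criterion(left: dict[str, int], right: dict[str, int]) -> str:
    """Criterion where even the better of the two outputs scored lowest."""
    return min(CRITERIA, key=lambda name: max(left[name], right[name]))


# Hand-assigned ratings for the two side-by-side outputs (made-up numbers).
left = {"subject_accuracy": 4, "motion_smoothness": 2, "camera_coherence": 4,
        "scene_consistency": 4, "soundtrack_fit": 4}
right = {"subject_accuracy": 4, "motion_smoothness": 3, "camera_coherence": 2,
         "scene_consistency": 3, "soundtrack_fit": 4}

print(compare_outputs(left, right))
print(weakest_criterion(left, right))  # motion_smoothness
```

The weakest criterion is a reasonable candidate for the next revision: if neither output handled it well, the wording for that part of the prompt is the likely cause rather than one model's interpretation.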

How to revise prompts after comparing both outputs

After the first generation, keep what worked and change only one variable at a time. This is the fastest way to learn which wording actually improved the result.

If the subject is correct but the motion is weak, revise the action only.

If the motion is good but the framing is generic, revise the camera only.

If both outputs look visually off-brand, revise the style and constraints only.
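One way to hold yourself to the one-variable rule is to store each prompt as its five parts and check what differs between revisions. This is a minimal sketch; the field names and the `changed_fields` helper are hypothetical, and the prompts are example data.

```python
FIELDS = ("subject", "setting", "action", "camera", "style_constraints")


def changed_fields(before: dict[str, str], after: dict[str, str]) -> list[str]:
    """List the prompt parts that differ between two revisions."""
    return [name for name in FIELDS if before[name] != after[name]]


prompt_1 = {
    "subject": "A black motorcycle",
    "setting": "a rainy city street at night",
    "action": "rides through the street",
    "camera": "Wide shot with a slow pan",
    "style_constraints": "Realistic cinematic lighting, one rider, smooth motion",
}

# Revision that touches only the action.
prompt_2 = {**prompt_1, "action": "accelerates through the street, water spraying from the tires"}

# Revision that touches the action and the camera at once.
prompt_3 = {**prompt_2, "camera": "Low-angle tracking shot from the side"}

print(changed_fields(prompt_1, prompt_2))  # ['action']
print(changed_fields(prompt_1, prompt_3))  # ['action', 'camera']
```

When the list has more than one entry, an improvement in the next generation cannot be attributed to a single change.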

Example revision path:

Prompt 1

A black motorcycle rides through a rainy city street at night. Wide shot with a slow pan. Realistic cinematic lighting, one rider, smooth motion.

Problem: subject is good, but motion feels flat.

Prompt 2

A black motorcycle accelerates through a rainy city street at night, water spraying from the tires. Low-angle tracking shot from the side. Realistic cinematic lighting, one rider, smooth motion, consistent street environment.

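Now the action is more visible and the camera has a stronger purpose. This revision changes the action and the camera together; to apply the one-variable rule strictly, change the action first and adjust the camera in a second generation.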

When text alone does not preserve the design or composition, switch workflows. Use Reference to Video if the face, outfit, product shape, or image composition keeps drifting. Use Image to Video if you already have a still image that nails the look and just need movement added.

A practical iteration checklist:

- Wrong subject? Add clearer nouns and key visual traits.

- Messy action? Reduce to one visible verb.

- Bad camera? Specify one shot type and one movement.

- Style drift? Name one style and remove conflicting aesthetics.

- Inconsistent scene? Add “consistent environment” and “one main subject.”

- Design not preserved? Move to reference-based generation.

This workflow is the real productivity trick in HappyHorse text-to-video prompting: a simple first prompt, side-by-side comparison, one-variable revision, then reference mode when text stops holding the look.

Common HappyHorse text to video prompting mistakes and quick fixes

Why prompts fail

Most failed prompts break for the same reasons: too many actions, conflicting styles, vague camera language, and too much text without clear priority. A prompt like “A warrior running, jumping, flying, exploding through a fantasy cyberpunk medieval anime realistic city with dramatic cute horror vibes and every camera angle” gives the model no stable center. It is overloaded before generation even starts.

Another common mistake is abstract wording. “Make it epic” is not useless, but by itself it is weak. The model needs visible instructions. Epic compared to what? A low-angle hero shot? Sparks and smoke? A wide reveal after a close-up? Concrete visual choices outperform mood-only language almost every time.

Camera confusion is another major cause of generic outputs. If you do not specify a shot, the system picks one. Sometimes that is fine, but if you want a strong result quickly, direct it. “Low-angle close-up with a slow push-in” is actionable. “Cool camera” is not.

Long prompts can also fail because they bury the main idea. Beginners often assume more words mean more control. In practice, simpler prompts often outperform complex ones when you are testing a new concept, especially in a tool that already gives you two outputs for comparison.

Fast fixes for inconsistent or generic results

Use these fix patterns immediately:

- Replace abstract wording with visible action.

  Bad: dramatic scene

  Better: a firefighter walks through thick smoke toward a glowing doorway

- Narrow the scene to one focal subject.

  Bad: a busy city full of dozens of people doing different things

  Better: a cyclist rides through a crowded market, camera focused only on the rider

- Specify one camera move.

  Bad: dynamic cinematic camera

  Better: tracking shot from the side with a slow push-in at the end

- Remove conflicting style tags.

  Bad: realistic anime claymation documentary fantasy

  Better: claymation style with warm twilight lighting

- Add constraints for stability.

  Better add-ons: one main subject, consistent environment, smooth motion, no extra characters

A compact best-practices list:

- Start short.

- Use the five-part formula.

- Give the subject a role and purpose.

- Use visible motion verbs.

- Choose one camera setup.

- Pick one dominant style.

- Compare both outputs for motion and consistency.

- Revise one variable at a time.

- Use reference mode when design fidelity matters more than text flexibility.

The strongest HappyHorse generations usually come from simple direction, not prompt clutter. Clear visual intent beats complicated wording almost every time.

Conclusion

The fastest path to stronger HappyHorse videos is not writing longer prompts. It is building cleaner ones. When you structure every prompt around subject, setting, action, camera, and style/constraints, HappyHorse has a much better chance of delivering a usable result on the first few generations.

That matters even more because HappyHorse 1.0 gives you two outputs side by side from different hidden models. Use that comparison intentionally. Start simple, check subject accuracy, motion smoothness, camera coherence, scene consistency, and soundtrack fit, then revise only one element at a time. If the look keeps drifting, switch to Image to Video or Reference to Video instead of fighting the text prompt.

The best results come from clear visual direction: one subject, one main action, one camera move, and one consistent style. That is the practical core of HappyHorse text-to-video prompting: a simple prompt formula, specific cinematic cues, and fast side-by-side iteration until the scene locks in.