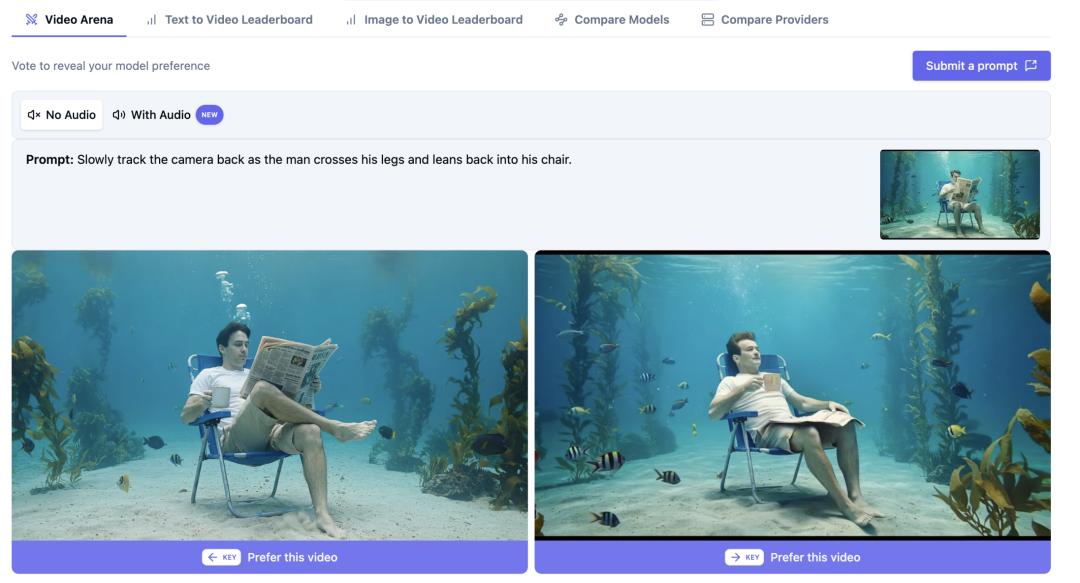

HappyHorse vs LTX Video 2.3: Parameters, Speed, and Quality Compared

If you’re choosing between HappyHorse and LTX Video 2.3, the real question is which model gives you the best mix of usable quality, generation speed, and workflow fit for your type of video.

HappyHorse vs LTX Video 2.3 at a Glance

What each model is designed to do

The quickest way to frame this comparison is simple: LTX Video 2.3 is the more documented tool right now, while HappyHorse is the more talked-about wildcard. If you need hard facts before you commit time or budget, LTX gives you more to work with immediately. If you’re tracking emerging models and want to test an alternative that has strong market buzz, HappyHorse is worth keeping on your bench.

LTX-2.3 is described as a DiT-based audio-video foundation model that generates synchronized video and audio inside one model. That matters in practice because it can reduce handoffs between separate video generation, sound design, and sync tools. If you are building short clips, talking scenes, or quick social-ready assets, integrated audio can save a real editing pass.

HappyHorse has strong attention around it, including reports that it topped an AI video ranking and triggered open-versus-closed-source discussion. The catch is that publicly verified technical detail remains thin in the research currently available. That means fewer confirmed specifics on parameters, less clarity on exact export ceilings, and less confidence in production planning unless you test it yourself.

For most real projects, the comparison comes down to five things: parameters and feature access, generation speed, output consistency, motion quality, and licensing or workflow fit. Those are the pressure points that decide whether a model is fun to experiment with or actually dependable enough to use every week.

The fastest way to decide which one fits your project

Here’s the practical shortcut. Pick LTX Video 2.3 first if you care most about audio support, want to run an AI video model locally, or need fast iterations for short-form clips. Community comparisons describe it as faster, capable of producing more frames, and better overall than some alternatives, even if consistency still needs work. That combination makes it attractive when your process depends on trying a lot of prompts quickly.

Put HappyHorse on the shortlist if your goal is to compare emerging quality leaders in the open-source AI video generation space, or if you think its visual style may suit your use case better. Just verify the basics first: available resolutions, clip length, export settings, licensing terms, and whether what you can access matches the hype.

A clean decision rule helps. If you prioritize synchronized audio, local workflow, and quick output for Reels or TikTok, start with LTX 2.3. If you prioritize testing alternatives and want to see whether HappyHorse delivers more predictable visual consistency in your own prompts, test it side by side before building around it.

That is the heart of the HappyHorse vs LTX Video decision: LTX is the stronger documented choice today, while HappyHorse remains a model you should evaluate with hands-on tests rather than assumptions.

HappyHorse vs LTX Video Parameters and Core Features

Resolution, clip length, and access limits

The biggest feature caveat around LTX Video 2.3 is not just what the model can do in theory, but what you can actually access. One research note claims the desktop version only exposes “LTX Fast,” described as a distilled version, with up to 1080p output and 5-second clips. That is enough for many social edits, motion tests, product loops, and short inserts, but it is still a meaningful ceiling if you want longer scenes or broader control.

That same note also suggests stronger LTX variants or better features may sit behind API access. From a workflow angle, that changes the buying decision completely. A creator testing the desktop build may think the model tops out at a certain quality or duration, while an API customer may be evaluating a stronger tier. Before you compare results online, check whether the examples were generated from desktop LTX Fast or from a paid endpoint.

HappyHorse is harder to pin down on the same metrics. Current research does not offer equally concrete, verified specs on output resolution, clip duration, or access tiers. If you are deciding between tools for production use, that lack of parameter transparency is not a minor detail. It affects storage planning, edit timeline setup, shot design, and whether you can count on repeatable exports.

A practical move is to build a simple comparison sheet before you commit. Log maximum resolution, average usable clip length, export options, generation queue behavior, and whether there are hidden differences between free, desktop, and API tiers. That spreadsheet will tell you more than a marketing page.
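A minimal sketch of that sheet in Python, using only the standard library. The column names and the `TierSpec` and `to_csv` names are hypothetical choices for illustration, and the values in the usage lines are placeholders (the LTX row echoes the reported desktop cap, which you should confirm yourself), not measurements.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import Optional


@dataclass
class TierSpec:
    """One row of a hypothetical comparison sheet, filled in from your own tests."""
    model: str                      # e.g. "LTX 2.3" or "HappyHorse"
    tier: str                       # "free", "desktop", "api", or "unknown"
    max_resolution: str             # what you actually observed, e.g. "1920x1080"
    usable_clip_s: Optional[float]  # average usable clip length; None if untested
    export_formats: str             # containers/codecs you could export
    queue_notes: str                # how the generation queue behaved


def to_csv(rows: list[TierSpec]) -> str:
    """Serialize rows to CSV text so the sheet opens in any spreadsheet app."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(TierSpec)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))  # None is written as an empty cell
    return buf.getvalue()


# Placeholder rows: replace every value with what you observe in your environment.
rows = [
    TierSpec("LTX 2.3", "desktop", "1920x1080", 5.0, "TBD", "TBD"),
    TierSpec("HappyHorse", "unknown", "TBD", None, "TBD", "TBD"),
]
print(to_csv(rows))
```

The `tier` column is the important design choice: it keeps desktop results and API results from being compared as if they came from the same model.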

Audio generation and model architecture

LTX 2.3’s clearest differentiator is architecture and feature integration. It is described as a DiT-based audio-video foundation model that can generate synchronized video and audio in one model. If you usually create scenes that need environmental sound, speech-like timing, or rhythmic motion, this is not just a checkbox feature. It can simplify your stack and reduce sync mismatches during editing.

That makes LTX 2.3 especially relevant for anyone weighing an open-source image-to-video pipeline against a more unified workflow. Instead of generating visuals, exporting, and then layering and syncing sound externally, you can test whether one system gets you close enough, faster. For short ads, mood clips, social teasers, and demo reels, that speed difference can outweigh minor quality gaps.

HappyHorse may still compete on visual output, but the current research does not verify an equivalent synchronized audio feature. It also does not clearly establish whether HappyHorse 1.0 can confidently be classed as an open-source, transformer-based AI video generation model, or whether access and licensing are more constrained. Until those points are clearer, the safe move is to verify them directly in docs, repos, or official product pages before integrating it into a repeatable workflow.

If your work depends on confirmed capabilities, LTX gives you a stronger checklist today: reported 1080p and 5-second desktop limits, active discussion of API-gated upgrades, and a clearly stated audio-video generation advantage. HappyHorse may still surprise you, but it currently asks for more validation upfront.

HappyHorse vs LTX Video Speed: Local Use, API Access, and Workflow

How generation speed affects daily production

Speed matters more than most spec sheets admit. The difference between a model that returns results quickly and one that drags every iteration is the difference between testing 20 ideas in a session and testing four. Community feedback around LTX 2.3 consistently points in one direction: it is seen as faster and able to produce more frames, which makes it attractive for iteration-heavy workflows.
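To make that arithmetic concrete, here is a rough sketch. The session length and per-iteration times are illustrative assumptions chosen to reproduce the 20-versus-four contrast, not measured timings for either model.

```python
def ideas_per_session(session_minutes: float, minutes_per_iteration: float) -> int:
    """Whole prompt iterations (generate + review) that fit in one working session."""
    if minutes_per_iteration <= 0:
        raise ValueError("minutes_per_iteration must be positive")
    return int(session_minutes // minutes_per_iteration)


# Hypothetical two-hour session: a 6-minute loop versus a 30-minute loop.
print(ideas_per_session(120, 6))   # 20
print(ideas_per_session(120, 30))  # 4
```

Time each loop end to end, including review, because queue waits and rerolls eat into the session just as raw generation time does.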

That frame-count point is easy to underestimate. More frames usually mean smoother motion experiments, better timing options for edits, and fewer awkward gaps when you are trying to cut to music or build a loop. If you are producing product spins, quick facial reactions, stylized B-roll, or hook-heavy social clips, those gains show up immediately in your timeline.

LTX speed also pairs well with a practical truth about short-form content: top-end quality is only part of the job. Reels and TikTok-style content often lives or dies on fast testing, clean exporting, and the ability to rework a prompt quickly after seeing what failed. Research on short-form posting workflows also points out that external apps often produce better results than relying only on native platform tools. That means your generation model should fit a fast edit-export-post chain, not just generate a pretty test clip.

When local setup matters more than raw model quality

LTX 2.3 has another advantage that can matter just as much as speed: signs of active local-use adoption. Setup and tutorial content around the model presents it as a local AI video generator with full setup guidance. If you want to run an AI video model locally, that ecosystem matters. It means more install walkthroughs, more prompt experiments to learn from, and usually faster troubleshooting when a build breaks.

That local angle changes the value equation. A model that is slightly weaker on paper but easy to run and iterate locally can be more productive than a stronger remote model with slower queues, stricter limits, or expensive API calls. The tradeoff with LTX is that the desktop experience may be limited to LTX Fast, while potentially better variants sit behind paid API access. So the real choice is not only model versus model, but local convenience versus premium access.

For short-form creators, this becomes very straightforward. If your output is 5 to 15 seconds after editing, desktop LTX limits may be completely acceptable because your real priority is fast batch testing. You can generate multiple 5-second candidates, cut them together externally, add overlays, and post. Since short social videos often benefit from external editing anyway, a simple local workflow can beat a “better” model that slows you down.
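A quick planning sketch for that batch: how many 5-second slots an edit needs, and how many generations to budget if you want several candidates per slot. The 5-second default mirrors the reported desktop cap, `candidates_per_slot` is an arbitrary assumption, and the math ignores trims and transitions, which can push the count up.

```python
import math


def generations_needed(target_s: float, clip_s: float = 5.0,
                       candidates_per_slot: int = 3) -> tuple[int, int]:
    """Return (clip slots in the edit, total generations to budget)."""
    slots = math.ceil(target_s / clip_s)  # a partial slot still needs a full clip
    return slots, slots * candidates_per_slot


print(generations_needed(15))                         # (3, 9)
print(generations_needed(12, candidates_per_slot=2))  # (3, 6)
```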

HappyHorse could still be the right fit if its quality proves stronger in your tests, but until access paths and performance are better documented, it is harder to forecast daily production speed. For anyone balancing prompt volume, edit turnaround, and post schedule, that uncertainty is a real cost.

HappyHorse vs LTX Video Quality: Motion, Consistency, and Real-World Output

Where LTX 2.3 looks strong

On quality, LTX 2.3 gets a mixed but useful report card. Community comparisons describe it as better overall, with sound support, faster generation, and more frames standing out as clear strengths. In real use, those strengths often translate into clips that are easier to shape into finished posts because you are not solving audio separately and you have more motion material to work with.

That does not automatically mean every individual shot looks cleaner. What it means is that LTX often gives you a more complete package for practical output. If you are creating short scenes with atmosphere, quick transitions, or stylized motion where synchronized sound adds value, LTX 2.3 can feel more production-ready than a video-only system. It is especially strong as an experimental tool when you want to test scene concepts fast and decide later which shots are worth polishing.

This is where the HappyHorse vs LTX Video comparison gets interesting. HappyHorse has strong attention in the market, but less publicly verified quality data in the notes available here. Without comparable benchmarks or consistent access details, it is harder to make a confident quality-first case for HappyHorse beyond “test it and see.” That does not make it weak. It just means LTX currently has the better-documented strengths.

Where visual artifacts can appear

LTX’s biggest quality warning is inconsistency. Community feedback says it can produce excellent clips on one prompt and weaker clips on the next, even when the prompt structure is similar. That matters a lot if you need shot-to-shot continuity, repeatable style for campaigns, or reliable avatar behavior. Fast generation loses some value if you have to reroll heavily to get a stable result.

The specific artifact reports are also worth taking seriously. A Facebook comparison notes movement glitches and smearing, even in close-up shots. The same warning suggests those issues may get worse in long shots or full-body compositions. That tracks with what many motion models struggle with: once more of the body is visible and there are more moving parts to coordinate, temporal errors become easier to spot.

You can turn that into a practical testing plan. Start with close-up or medium shots before you trust a model on wide movement. Keep motion intensity moderate on your first passes. If you need a subject walking, turning, gesturing, and interacting with props, test each variable separately before combining them. For polished output, camera distance is not a style choice alone; it is a stability control.

Scene complexity also matters. Dense backgrounds, reflective surfaces, multiple moving limbs, and fast camera movement can amplify glitches. If you are evaluating either model for client work, use a repeatable grid: close-up face motion, medium torso motion, full-body movement, object interaction, and a scene with layered background detail. That tells you whether the model fails gracefully or falls apart when you scale complexity.
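One way to keep that grid repeatable is to generate the job list programmatically, so both models see the same scenarios the same number of times. A minimal sketch: the scenario labels come from the grid described above, while three retries per cell and the `build_grid` and `first_failure` helpers are hypothetical. `first_failure` reports the first scenario, in the listed order, that did not pass, a rough proxy for where a model stops failing gracefully.

```python
from itertools import product
from typing import Optional

# Scenarios in the order given in the text, roughly escalating in complexity.
SCENARIOS = [
    "close-up face motion",
    "medium torso motion",
    "full-body movement",
    "object interaction",
    "layered background detail",
]


def build_grid(models: list[str], retries: int = 3) -> list[dict]:
    """One job per (model, scenario, retry) so every model gets identical coverage."""
    return [
        {"model": m, "scenario": s, "retry": r}
        for m, s, r in product(models, SCENARIOS, range(1, retries + 1))
    ]


def first_failure(passed: dict[str, bool]) -> Optional[str]:
    """First scenario (in grid order) that did not pass, or None if all passed."""
    for scenario in SCENARIOS:
        if not passed.get(scenario, False):  # untested counts as not passed
            return scenario
    return None


jobs = build_grid(["LTX 2.3", "HappyHorse"])
print(len(jobs))  # 30 = 2 models x 5 scenarios x 3 retries
```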

For final-use judgment, do not ask which model creates the prettiest single cherry-picked clip. Ask which one holds together across ten prompts with the same production target. That is the quality metric that actually saves time.

How to Choose Between HappyHorse and LTX Video for Different Use Cases

Best pick for social clips, demos, and short scenes

If you are making social clips, short demos, product teasers, or fast-moving scene tests, LTX 2.3 is the safer first pick. The reasons are concrete: integrated audio-video generation, reports of faster output, more frames, and visible momentum around local setup. Those are exactly the features that help when you need to go from idea to editable asset quickly.

This matters even more for Reels and TikTok workflows. In practice, those formats reward speed, hook density, and simple editing. Research around short-form posting also suggests that external apps often deliver better presentation than relying on platform-native tools alone, and that videos can present differently across platforms. So a model that gives you quick exports and easy handoff into your normal edit app is often more useful than one with a longer feature list but slower iteration.

LTX’s reported desktop limits of 1080p and 5-second clips are not ideal for every job, but they can be perfectly workable here. You can stitch short clips, add captions, layer music, and shape the final output externally. For social-first production, that is often faster than waiting for a model that promises more but slows the loop.

HappyHorse is still worth testing if you are tracking alternatives in the open-source transformer video model space and want to see whether its visual style lands better for your prompts. Just do not assume fit based on hype alone. Verify exports, generation speed, and cleanup needs with your own short-form scenarios.

Best pick for commercial and repeatable workflows

Commercial work raises the bar. Repeatability, licensing clarity, and post-production overhead matter as much as visual quality. LTX-related sources note that LTX-2 and WAN 2.2 are available with clear licensing in the open-model landscape. That is useful if you need to settle commercial-use questions about open-source model licenses before moving a project into production.

HappyHorse is less clear on that front in the available notes. One source asks how HappyHorse fits into the commercial-use landscape, but the snippet does not verify the answer. That means anyone considering it for paid work should confirm the license directly, not infer it from rankings or discussion threads.

For repeatable workflows, also think about consistency costs. If LTX gives you speed but requires more rerolls due to inconsistency, the value can still be good for experimental content but weaker for campaign work with matched shots. If HappyHorse proves more stable in your own tests, that stability could outweigh weaker documentation. The only way to know is to run identical commercial-style prompts through both and log retries, usable takes, and edit cleanup time.
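A minimal logging sketch for that comparison. The `PromptLog` fields map to the three things worth tracking (retries, usable takes, cleanup time); the names and the derived ratios are assumptions about how you might summarize them, not an established metric.

```python
from dataclasses import dataclass


@dataclass
class PromptLog:
    attempts: int           # total generations for this prompt, rerolls included
    usable_takes: int       # takes you would actually deliver
    cleanup_minutes: float  # edit time spent fixing those usable takes


def summarize(logs: list[PromptLog]) -> dict[str, float]:
    """Aggregate one model's logs into rates that expose hidden reroll cost."""
    attempts = sum(log.attempts for log in logs)
    usable = sum(log.usable_takes for log in logs)
    cleanup = sum(log.cleanup_minutes for log in logs)
    return {
        "usable_rate": usable / attempts if attempts else 0.0,
        "attempts_per_usable": attempts / usable if usable else float("inf"),
        "cleanup_min_per_usable": cleanup / usable if usable else float("inf"),
    }


# Hypothetical log for one model across two prompts.
print(summarize([PromptLog(4, 1, 10.0), PromptLog(2, 1, 5.0)]))
```

Run `summarize` separately for each model on the same prompt set. A fast model with a high `attempts_per_usable` can end up slower per deliverable than it first appears.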

So the split is straightforward. Choose LTX 2.3 when you need fast experimentation, local-friendly usage, and integrated audio-video generation. Keep HappyHorse in play when you want to evaluate new contenders among open-source AI video generation models and are willing to verify access, quality, and licensing before betting a pipeline on it.

HappyHorse vs LTX Video: A Practical Testing Checklist Before You Commit

What to test in your first 10 prompts

Before paying for API access, building automations, or standardizing a pipeline, run a disciplined side-by-side test. Use the same ten prompts, the same target aspect ratio, and the same output goal for both models. That is the fastest way to cut through marketing noise.

Start with resolution and clip duration. Confirm whether desktop LTX is capped at 1080p and 5 seconds in your environment, and check whether any premium or API route changes that. With HappyHorse, verify actual export settings rather than advertised capability. If your final delivery is 9:16 social, test native vertical handling or your preferred crop strategy immediately.
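If you have FFmpeg installed, `ffprobe` can report what an exported file actually contains rather than what a settings panel promises. A minimal sketch that parses ffprobe’s JSON output and checks it against the reported desktop caps. The 1080p and 5-second defaults, the 0.1-second tolerance, and the `check_clip` helper are assumptions to adjust. Some containers store duration only at the format level, in which case the stream entry has none and the check returns `None`.

```python
import json
from typing import Optional

# Produce the input for each exported file with:
#   ffprobe -v error -select_streams v:0 \
#       -show_entries stream=width,height,duration -of json clip.mp4


def check_clip(ffprobe_json: str, max_short_side: int = 1080,
               max_seconds: float = 5.0, tolerance_s: float = 0.1) -> dict:
    """Compare one clip's first video stream with the caps you expect."""
    stream = json.loads(ffprobe_json)["streams"][0]
    width, height = int(stream["width"]), int(stream["height"])
    duration: Optional[float] = (
        float(stream["duration"]) if "duration" in stream else None
    )
    return {
        "resolution": f"{width}x{height}",
        "at_or_below_1080p": min(width, height) <= max_short_side,
        "vertical_9x16": width * 16 == height * 9,
        "duration_s": duration,
        # None means the stream reported no duration; check the container instead.
        "within_duration_cap": (
            None if duration is None else duration <= max_seconds + tolerance_s
        ),
    }


sample = '{"streams": [{"width": 1080, "height": 1920, "duration": "5.041667"}]}'
print(check_clip(sample))
```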

Then test audio needs. Since LTX 2.3 is described as generating synchronized video and audio in one model, see whether the audio is genuinely useful or just a novelty layer. Use one prompt with rhythm, one with ambient mood, and one with dialogue-like timing if supported. If HappyHorse requires external audio every time, factor that extra edit step into your workflow.

Prompt adherence is next. Run one product shot, one talking avatar, one cinematic movement scene, one stylized action shot, and one simple close-up. Track whether the subject, setting, camera direction, and motion instructions actually appear. Good-looking output is not enough if it ignores the prompt.
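Prompt adherence is easier to compare when each check is a yes/no you record per clip. A minimal sketch: the four check names mirror the list above (subject, setting, camera direction, motion), while equal weighting and the helper names are assumptions. Weight the checks differently if, say, camera direction matters more for your shots.

```python
CHECKS = ("subject", "setting", "camera_direction", "motion")


def adherence_score(observed: dict[str, bool]) -> float:
    """Fraction of prompt instructions that visibly made it into the clip."""
    return sum(bool(observed.get(c, False)) for c in CHECKS) / len(CHECKS)


def missed(observed: dict[str, bool]) -> list[str]:
    """Instructions the model ignored, useful for spotting repeat patterns."""
    return [c for c in CHECKS if not observed.get(c, False)]


def mean_adherence(results: dict[str, dict[str, bool]]) -> float:
    """Average score across a model's prompts, keyed by prompt name."""
    scores = [adherence_score(obs) for obs in results.values()]
    return sum(scores) / len(scores) if scores else 0.0


clip = {"subject": True, "setting": True, "camera_direction": False, "motion": True}
print(adherence_score(clip), missed(clip))  # 0.75 ['camera_direction']
```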

Questions to answer before paying for API or building a pipeline

Motion stability needs its own pass. Test close-up versus full-body performance because reported LTX issues include smearing and movement glitches, with warnings that longer or full-body shots may look worse. Use one medium close-up with gentle motion and one wide shot with walking or turning. If a model breaks only in wide action, that may still be acceptable, depending on your use case.

Also measure consistency across retries. Generate the same prompt three times and compare subject identity, scene coherence, and motion quality. This is critical because LTX has been described as better overall but inconsistent. A model that nails one out of three attempts may still be useful for ideation, but much less useful for client delivery.
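A minimal way to quantify that check: rate each of the three retries on the three qualities named above, then look at how often every quality clears a bar and how far ratings swing between retries. The 1 to 5 scale, the pass mark of 4, and the `consistency_report` helper are assumptions for illustration.

```python
QUALITIES = ("subject_identity", "scene_coherence", "motion_quality")


def consistency_report(retries: list[dict[str, int]], pass_mark: int = 4) -> dict:
    """Summarize repeated generations of one prompt, each rated 1-5 per quality."""
    if not retries:
        raise ValueError("need at least one rated retry")
    hits = sum(all(r[q] >= pass_mark for q in QUALITIES) for r in retries)
    spread = {q: max(r[q] for r in retries) - min(r[q] for r in retries)
              for q in QUALITIES}
    return {"hit_rate": hits / len(retries), "spread": spread}


# Hypothetical ratings for three runs of the same prompt.
ratings = [
    {"subject_identity": 5, "scene_coherence": 4, "motion_quality": 4},
    {"subject_identity": 3, "scene_coherence": 4, "motion_quality": 2},
    {"subject_identity": 4, "scene_coherence": 3, "motion_quality": 4},
]
print(consistency_report(ratings))
```

In this made-up example only one of three retries clears every bar, the “one out of three” pattern that suits ideation better than client delivery, and a spread of 2 on subject identity flags drift in the character itself.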

Do not skip export usability. Check whether files import cleanly into your editor, whether frame pacing feels stable, and whether the codec or container creates avoidable friction. For short-form workflows, simple exports often matter more than one extra visual feature.
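Frame pacing can be checked numerically once you have per-frame timestamps in seconds (ffprobe’s per-frame output is one way to get them). A minimal sketch that measures how evenly frames are spaced. The `pacing_report` name is hypothetical, and reading a gap well above the average as a hint of a dropped frame or variable-frame-rate stutter is a rule of thumb, not a standard threshold.

```python
from statistics import mean, pstdev


def pacing_report(timestamps: list[float]) -> dict[str, float]:
    """Describe frame spacing from presentation timestamps in seconds."""
    if len(timestamps) < 3:
        raise ValueError("need at least three timestamps")
    deltas = [b - a for a, b in zip(timestamps, timestamps[1:])]
    avg = mean(deltas)
    if avg <= 0:
        raise ValueError("timestamps must increase")
    return {
        "fps_estimate": 1.0 / avg,
        # 0.0 means perfectly even spacing; larger values mean uneven pacing.
        "jitter_ratio": pstdev(deltas) / avg,
        # Well above 1.0 means at least one gap is much longer than typical.
        "max_gap_ratio": max(deltas) / avg,
    }


even = [i / 24 for i in range(25)]       # one second at a steady 24 fps
stutter = [0.0, 0.04, 0.08, 0.16, 0.20]  # one missing frame interval
print(pacing_report(even))
print(pacing_report(stutter))
```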

Finally, compare desktop and API or premium outputs whenever possible. If access tiers change quality significantly, you need to know before you judge the model. The same goes for licensing. Confirm whether each option allows your intended usage, especially if revenue or client delivery is involved.

A strong final decision usually comes down to four direct-use criteria: speed per iteration, consistency across retries, licensing clarity, and how much cleanup the output needs in post. If one model wins three of those four for your actual prompt set, you have your answer.
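That rule is simple enough to write down, which keeps the final call honest. A minimal sketch comparing two models on the four criteria. The metric units, the direction each one favors, and the sample numbers for the neutral `Model A` and `Model B` are hypothetical, and ties award no win to either side.

```python
from typing import Optional

# criterion -> True if a lower number is better
LOWER_IS_BETTER = {
    "speed_per_iteration_s": True,   # seconds per generate-and-review loop
    "consistency_hit_rate": False,   # share of retries that clear your bar
    "licensing_confirmed": False,    # 1 if the license covers your use, else 0
    "cleanup_min_per_usable": True,  # post-production minutes per usable take
}


def pick_model(a_name: str, a: dict, b_name: str, b: dict,
               needed: int = 3) -> tuple[Optional[str], dict[str, int]]:
    """Return the model that wins at least `needed` criteria (or None), plus the tally."""
    wins = {a_name: 0, b_name: 0}
    for criterion, lower_better in LOWER_IS_BETTER.items():
        x, y = a[criterion], b[criterion]
        if x == y:
            continue  # a tie awards no win
        a_better = x < y if lower_better else x > y
        wins[a_name if a_better else b_name] += 1
    winner = next((name for name, n in wins.items() if n >= needed), None)
    return winner, wins


model_a = {"speed_per_iteration_s": 40, "consistency_hit_rate": 0.4,
           "licensing_confirmed": 1, "cleanup_min_per_usable": 12}
model_b = {"speed_per_iteration_s": 90, "consistency_hit_rate": 0.6,
           "licensing_confirmed": 0, "cleanup_min_per_usable": 15}
print(pick_model("Model A", model_a, "Model B", model_b))
```

When neither model reaches three wins, the result is telling you the choice hinges on whichever criterion matters most for the specific job.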

Conclusion

For most people choosing between these two today, LTX Video 2.3 is the stronger documented option. It has a clearer feature story, including synchronized audio-video generation in one model, active signals around local use, and repeated reports of faster generation with more frames. If you want to run an AI video model locally, experiment quickly, and produce short clips without stitching together too many separate tools, LTX is the easier place to start.

The tradeoff is that LTX is not perfectly stable. Community reports point to inconsistency, plus movement glitches and smearing that can show up even in close-ups and may get worse in wider or full-body shots. Desktop access may also be limited to LTX Fast, with 1080p and 5-second clips, while stronger options may sit behind API access. That means you should evaluate the exact tier you plan to use, not just the model name.

HappyHorse is still worth testing, especially if you want another emerging alternative in the open-source AI video generation race. It has clear market attention, but the current research offers less verified detail on parameters, access, and commercial-use position. That makes direct testing essential before you adopt it for anything repeatable.

If you want the clearest decision framework, use this: choose LTX Video 2.3 when speed, audio, and local experimentation are your priorities. Choose HappyHorse only after it proves, in your own prompts and your own workflow, that its access, quality, and licensing match what you need to ship real work.