HappyHorse vs PixVerse V6: Elo Scores, Pricing, and Real Output Quality

If you are choosing between HappyHorse and PixVerse V6, the smartest comparison is not just who ranks higher on Elo, but which model gives you the best-looking output for your budget, workflow, and use case.

HappyHorse vs PixVerse V6 at a glance: what actually matters

The three comparison points to check first

For a useful HappyHorse vs PixVerse V6 decision, start with three things you can actually act on: output quality, turnaround speed, and cost per minute. That sounds obvious, but it cuts through a lot of hype fast. A model can look amazing on a leaderboard and still be a bad fit if it is slow to iterate, expensive to rerun, or hard to access when you need to ship clips quickly.

Artificial Analysis is the cleanest neutral framework for this because its Video Model Comparisons track quality Elo, speed, and pricing across text-to-video, image-to-video, and audio-enabled video models. That matters because most real projects are not judged on one axis. If you are building ad variants, speed and per-minute cost can matter as much as raw beauty. If you are testing visual storytelling, quality may dominate. If you are working from stills, image-to-video behavior can matter more than text-only rankings.

A practical way to use those metrics is simple. For quality, ask which model creates the strongest first result without obvious motion artifacts. For speed, check how long it takes to get a generation back when you are iterating multiple prompts. For cost, estimate the price of getting one actually usable clip, not just the listed price of one generation. A cheap model that needs five reruns is not cheap in practice.
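The "cost per usable clip" idea is simple arithmetic. Here is a minimal Python sketch, assuming you already know (or have measured) the price of one generation and the average number of generations you need before one is publishable. The prices below are hypothetical placeholders, not actual rates for either model.

```python
def cost_per_usable_clip(price_per_generation: float, generations_per_usable: float) -> float:
    """Effective price of one clip you would actually publish.

    price_per_generation: cost of a single generation (a number you supply).
    generations_per_usable: average generations needed per publishable clip,
        where 1.0 means every first attempt is usable.
    """
    if generations_per_usable < 1:
        raise ValueError("every usable clip needs at least one generation")
    return price_per_generation * generations_per_usable


# Hypothetical prices for illustration only.
cheap_but_flaky = cost_per_usable_clip(0.30, 6)     # first try plus five reruns
pricier_but_steady = cost_per_usable_clip(0.60, 2)  # usually good by the second try
print(round(cheap_but_flaky, 2), round(pricier_but_steady, 2))  # 1.8 1.2
```

Even at half the listed price per generation, the model that needs six attempts ends up costing more per clip you can ship.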

Why Elo alone is not enough

Artificial Analysis quality Elo is useful because it comes from blind preference voting in Video Arena. That makes it more meaningful than random marketing claims. People are comparing outputs without being told which model made them, so the score becomes a good signal for broad human preference. If one model consistently wins those head-to-head comparisons, that tells you something real.
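To see why consistent head-to-head wins push a rating up, here is the textbook Elo update applied to blind pairwise votes. This is a sketch under assumptions: the starting rating of 1000, the K-factor of 32, the model names, and the vote sequence are illustrative choices, and Artificial Analysis's actual rating procedure may differ from this one-vote-at-a-time version.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred under the standard Elo logistic model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0) -> tuple[float, float]:
    """Apply one blind head-to-head vote and return the new (A, B) ratings."""
    e_a = expected_score(rating_a, rating_b)
    s_a = 1.0 if a_won else 0.0
    delta = k * (s_a - e_a)
    # Points won by one side are exactly the points lost by the other.
    return rating_a + delta, rating_b - delta


ratings = {"model_x": 1000.0, "model_y": 1000.0}
# Hypothetical blind votes: True means model_x's clip was preferred.
votes = [True, True, False, True]
for x_won in votes:
    ratings["model_x"], ratings["model_y"] = update(ratings["model_x"], ratings["model_y"], x_won)
print({name: round(r, 1) for name, r in ratings.items()})
```

Upsets move ratings more than expected wins: when a lower-rated model's clip is preferred, its expected score was below 0.5, so the adjustment is larger.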

But Elo is still a proxy, not a production guarantee. A higher Elo does not automatically mean better results for every workflow. A model that wins blind votes on punchy visual impact may still struggle with continuity between shots, prompt precision, or preserving a product image in a short-form ad. Another model may lose some blind preference battles while still being easier to steer for specific commercial tasks.

That distinction matters even more if you are tracking search terms like “HappyHorse 1.0 AI video generation model open source transformer,” “open source AI video generation model,” or “open source transformer video model.” Those queries usually come from people who care about more than visual ranking. They care about how a model fits into a real pipeline: whether it supports image-to-video, whether it might be released as an open source image-to-video model, whether they could eventually run it locally, and whether its license would permit commercial use. None of that is captured by Elo alone.

So the right comparison frame is: use Elo to estimate broad viewer preference, then test speed, cost, and workflow fit before betting production time or money.

HappyHorse vs PixVerse V6 on Elo scores and benchmark signals

What the benchmark-style signals say

The strongest benchmark-style signal in this matchup is not a complete official public table, but a reported comparative claim. A source titled “HappyHorse model decryption: A complete analysis of the AI video dark…” says that in the non-audio category, HappyHorse 1.0 outperformed top-tier models like Seedance 2.0 720p, Kling 3.0, and PixVerse V6. That is a striking claim because it places HappyHorse directly above serious competition, not just mid-tier models.

That reported result lines up with why people are paying attention. If a model can beat PixVerse V6 on non-audio quality, it deserves immediate testing. But it is important to frame this correctly: this is a cited claim from a secondary source snippet, not independently verified benchmark data with a full methodology table in front of us. Treat it as a high-interest signal, not as final proof.

Artificial Analysis gives the more stable framework for interpreting any ranking claim. Its Elo-style methodology uses blind preference voting through Video Arena, which is a strong way to compare how outputs land with human viewers. If a model rises in that system, it usually means people genuinely prefer what it is producing across many comparisons. That is exactly why Elo remains valuable even when individual benchmark claims are still being debated.

How much confidence to place in current claims

There is also a community signal that pushed HappyHorse into the spotlight fast. A Reddit thread in r/AtlasCloudAI carried the title: “Anyone know HappyHorse? How come it just came out of nowhere and beat seedance2.0.” That tells you two things immediately. First, the model appeared suddenly enough to feel like a surprise entrant. Second, users were already framing it as a possible quality disruptor relative to Seedance 2.0.

Still, Reddit is anecdotal. It is useful for spotting momentum, not for replacing controlled comparison. The safest reading is that HappyHorse has attracted attention because multiple signals point toward unusually strong visual performance, especially in non-audio output. That makes it worth serious side-by-side testing, but not blind adoption.

A practical takeaway here is to put HappyHorse in the “potentially stronger quality contender” bucket and PixVerse V6 in the “proven top-tier value contender” bucket. If you are making a purchase or tool choice today, do not commit on hype alone. Run the same prompt in both systems, compare the clips blind if possible, and then measure whether the visual advantage is large enough to matter in your actual deliverables.

That is the right way to read the current benchmark signals: HappyHorse may have an edge in visual quality based on reported findings, while PixVerse V6 remains easier to anchor because it is already widely discussed in top-tier comparisons and pricing conversations. Use benchmark-style signals to shortlist, then verify with your own prompts before you lock a workflow.

HappyHorse vs PixVerse V6 on real output quality: where benchmark wins can fail

When viewer preference matches production needs

Elo works best when your goal overlaps with what blind viewers naturally prefer. If you are chasing instant visual punch, strong composition, and eye-catching motion, a model with better preference performance often does translate into better practical results. That is why Elo is such a useful starting point. It captures what humans tend to like when they see two clips side by side without branding bias.

For short standalone clips, this can be enough. If your use case is a single hero shot, a moody fantasy scene, or a striking product reveal, the model that wins more blind votes may also be the model you prefer in production. In that context, the reported claim that HappyHorse 1.0 outperformed top-tier models including PixVerse V6 in the non-audio category becomes especially relevant. If your project depends mostly on visual impact and not on dialogue, long continuity, or complex editing consistency, that reported edge could matter.

When real-world output quality matters more than rank

Where benchmark wins often fail is in repeatability and task fit. A clip that wins a blind vote can still be harder to use in a real project. Marketers, creators, and editors should test both models on the exact same prompt and judge four practical factors: motion consistency, prompt adherence, style control, and scene usability. These are the details that determine whether a clip survives beyond the wow moment.

Motion consistency means checking for flicker, warped limbs, drifting objects, or unstable backgrounds. Prompt adherence means verifying whether the subject, action, camera movement, and mood actually match what you asked for. Style control matters when you need a clean brand look, a specific cinematic tone, or consistency across multiple deliverables. Scene usability is the final filter: can you actually cut this shot into a finished sequence without hiding obvious defects?
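One way to keep the four factors from collapsing into a single "wow" impression is to score them separately and treat scene usability as a gate. A minimal sketch, assuming a 1-5 rating scale and a pass threshold of 3, both of which are arbitrary choices to tune for your project:

```python
from dataclasses import dataclass


@dataclass
class ClipScore:
    """Reviewer ratings for one clip, each on a hypothetical 1-5 scale."""
    motion_consistency: int  # flicker, warped limbs, drifting objects, unstable backgrounds
    prompt_adherence: int    # subject, action, camera movement, and mood match the prompt
    style_control: int       # brand look or cinematic tone held as requested
    scene_usable: bool       # could this shot be cut into a finished sequence as-is?

    def passes(self, minimum: int = 3) -> bool:
        """A clip only counts if it is usable and no factor falls below the bar."""
        factors = (self.motion_consistency, self.prompt_adherence, self.style_control)
        return self.scene_usable and min(factors) >= minimum


wow_but_flawed = ClipScore(motion_consistency=2, prompt_adherence=5, style_control=5, scene_usable=False)
plain_but_clean = ClipScore(motion_consistency=4, prompt_adherence=4, style_control=3, scene_usable=True)
print(wow_but_flawed.passes(), plain_but_clean.passes())  # False True
```

Using a floor instead of an average is deliberate: a clip with perfect style but broken motion should not pass just because its other scores are high.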

This is where the distinction between benchmark performance and real use cases becomes critical. A model may rank well in viewer preference while still underperforming on TikTok ad variants that need readable product framing. Another may be better for cinematic shots with dramatic lighting. A third may be less flashy but easier to use in long-form sequences where continuity matters more than immediate visual impact.

The most reliable workflow is simple. Run identical text-to-video or image-to-video prompts in both models. Compare the first-shot quality, then count how many generations it takes to get one clip you would actually publish. If Model A looks slightly better but needs four reruns and Model B looks nearly as good on the first try, Model B may be the real winner for production.
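Counting generations until something is publishable is easy to do by hand, but logging it per prompt makes the comparison harder to fudge. A small sketch, assuming you record each attempt as usable or not; the logs below are made up, loosely following the Model A (four reruns) versus Model B (near first-try success) example above.

```python
from statistics import mean
from typing import Optional


def generations_to_usable(attempts: list[bool]) -> Optional[int]:
    """Return the 1-based attempt number of the first publishable clip, or None."""
    for attempt_number, usable in enumerate(attempts, start=1):
        if usable:
            return attempt_number
    return None


def summarize(logs: list[list[bool]]) -> tuple[Optional[float], int]:
    """Average generations-to-usable across prompts, plus how many prompts never succeeded."""
    counts = [generations_to_usable(log) for log in logs]
    successes = [c for c in counts if c is not None]
    failures = len(counts) - len(successes)
    return (mean(successes) if successes else None), failures


# Hypothetical logs: one list per prompt, True = you would publish that generation.
model_a_logs = [[False, False, False, False, True], [False, False, False, False, True]]
model_b_logs = [[True], [False, True]]
print(summarize(model_a_logs), summarize(model_b_logs))
```

Prompts that never produce a usable clip are reported separately instead of being silently averaged away.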

That is the heart of real output quality in HappyHorse vs PixVerse V6: Elo gives you a strong starting signal, but your best model is the one that gives you usable footage faster and more consistently for your exact kind of project.

PixVerse V6 vs HappyHorse on pricing, value, and accessibility

Why PixVerse V6 is the budget benchmark right now

The clearest pricing fact in this comparison comes from WaveSpeedAI, which describes PixVerse V6 as the cheapest per minute in the top tier. That is a big deal because top-tier quality only matters if you can afford enough iterations to reach a finished result. If you generate ad variants, test multiple hooks, or rerun prompts to tighten motion, low per-minute cost compounds into a real advantage very quickly.

That pricing position makes PixVerse V6 the safer recommendation for cost-sensitive users right now. If you need strong visuals without paying premium rates, PixVerse V6 gives you an efficient way to stay in the top tier while controlling budget. This matters even more if your workflow depends on volume. Ten experiments at a lower top-tier rate can beat three experiments on a more expensive or less accessible model, especially when the final improvement is uncertain.
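The "ten experiments versus three" point is just budget division at a per-minute rate. A minimal sketch, assuming billing is proportional to clip length, which may not match how every platform actually charges; the budget and rates are hypothetical, not published pricing for either model.

```python
import math


def generations_within_budget(budget: float, price_per_minute: float, clip_seconds: float) -> int:
    """How many complete generations a fixed budget covers at a per-minute rate."""
    if price_per_minute <= 0 or clip_seconds <= 0:
        raise ValueError("price and clip length must be positive")
    cost_per_generation = price_per_minute * clip_seconds / 60.0
    # Small tolerance so float rounding does not drop a run that exactly fits.
    return math.floor(budget / cost_per_generation + 1e-9)


# Hypothetical rates for illustration only.
budget = 30.0
cheaper = generations_within_budget(budget, price_per_minute=18.0, clip_seconds=10)
pricier = generations_within_budget(budget, price_per_minute=60.0, clip_seconds=10)
print(cheaper, pricier)  # 10 3
```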

Artificial Analysis helps here because it does not isolate price from performance. It compares quality, speed, and pricing together, which is exactly how value should be judged. A cheaper model is only a better deal if the quality stays competitive and the turnaround supports your pace of work. PixVerse V6 appears strong on that balance.

What could change if HappyHorse opens up

WaveSpeedAI also adds the key caveat: if HappyHorse releases weights or an API in the coming weeks, the calculus changes. That one detail matters a lot for accessibility. A model can have excellent reported visual quality, but if you cannot reliably access it in your workflow, it is not yet a practical primary tool.

Accessibility includes more than simple signup availability. It affects automation, integration, experimentation volume, and future control. People searching for an open source AI video generation model, an open source transformer video model, or an open source image-to-video model are usually trying to understand whether a model can fit into a more controllable stack later on. The same goes for people asking whether they can run an AI video model locally or whether an open source license would allow commercial use. At the moment, the available sources support only one concrete accessibility takeaway: HappyHorse becoming available through released weights or an API would significantly change adoption dynamics.

So the practical read is straightforward. Today, PixVerse V6 has the stronger visible value case because it combines top-tier status with the cheapest per-minute pricing in that tier. HappyHorse could disrupt that equation if broader access arrives. Until then, accessibility remains part of the decision, not a side issue. The best-looking model on paper is only useful if you can actually get it into your day-to-day production flow without friction.

Which model to choose for your workflow: beginner, marketer, or quality-first creator

Best pick for first-time AI video creators

For a first-time creator, PixVerse V6 is the more practical starting point right now. The reason is not just price by itself. It is the combination of top-tier positioning and a clearer comparison path through Artificial Analysis metrics like quality, speed, and pricing. When you are still learning prompt structure, scene control, and how many reruns a concept usually needs, lower per-minute cost gives you more room to experiment without burning budget too fast.

That makes PixVerse V6 easier to learn on. You can test text-to-video ideas, compare image-to-video behavior, and build intuition for which changes actually improve clips. If you are also watching for an open source release of HappyHorse 1.0 or of another open source AI video generation model, that is worth monitoring, but it should not stop you from choosing the tool that is more practical today.

Best pick for short-form ad production

For freelance marketers and performance creatives, the decision comes down to speed plus quality. Ad work rarely ends at one generation. You need hook variations, product angle changes, alternate aspect ratios, and often multiple visual styles for testing. Artificial Analysis explicitly compares speed alongside quality and pricing, which is exactly the right lens for this workflow.

HappyHorse is interesting here because the reported non-audio outperformance over top-tier models including PixVerse V6 suggests it may deliver stronger-looking visuals for polished ad clips. If your campaign depends on one premium-looking centerpiece video, that matters. But PixVerse V6 still has the major practical advantage for ad iteration: its per-minute cost, the lowest in the top tier, supports more testing. In paid social, quantity of viable variants often matters as much as peak beauty.

So for short-form ad production, the safer default is PixVerse V6 when you need many shots and fast iteration. Test HappyHorse if the campaign rewards exceptional visual polish and you can tolerate extra friction or uncertain access.

Best pick for pure visual quality testing

If your goal is to chase the strongest possible non-audio visual output, HappyHorse deserves first-pass testing. The reported benchmark claim that it outperformed PixVerse V6, Kling 3.0, and Seedance 2.0 720p in the non-audio category is too strong to ignore. Add the Reddit attention suggesting it surprised users by possibly beating Seedance 2.0, and you have enough signal to justify direct testing.

That does not mean automatic adoption. It means if you are quality-first, you should put HappyHorse in your prompt lab and compare it against PixVerse V6 with the same concepts. For pure visual hunting, a model that occasionally produces clearly superior shots can be worth the extra effort.

The clean rule is this: match the model to the job. Budget, accessibility, and repeatability currently favor PixVerse V6. Experimental quality hunting may favor HappyHorse. In a real HappyHorse vs PixVerse V6 choice, the winning model is the one that matches how you actually produce.

How to run a fair HappyHorse vs PixVerse V6 test before you commit

A simple side-by-side testing checklist

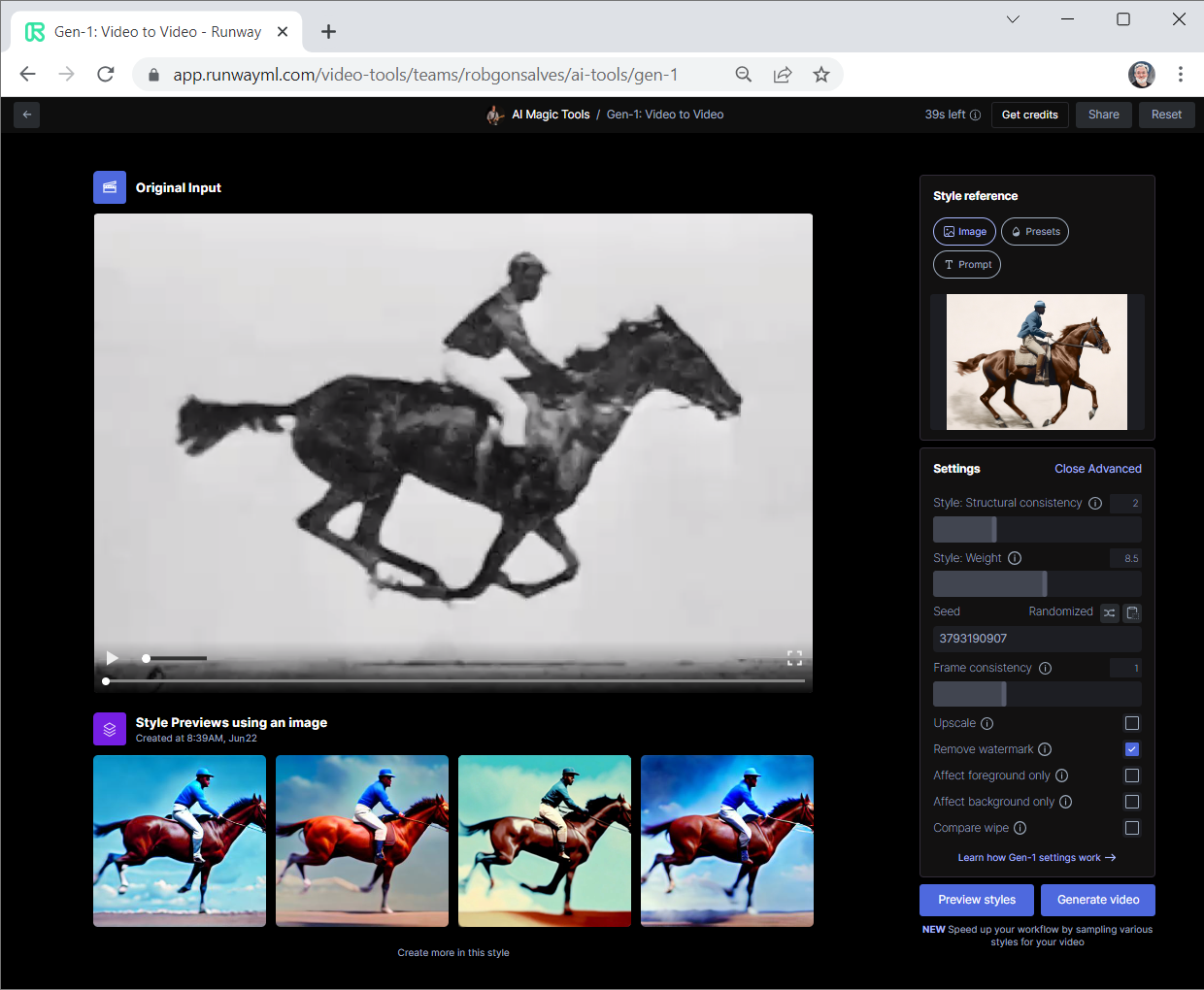

The fairest test is brutally simple: keep everything the same except the model. Use the same prompt, aspect ratio, duration, and input type across both systems. If one run is text-to-video, keep the other text-to-video. If one run starts from a reference frame, keep the other image-to-video with the exact same source image. Otherwise, you are measuring setup differences instead of model differences.

Run at least three prompt types. First, use a cinematic scene with clear motion, like a slow dolly shot through neon rain. Second, use a commercial prompt, such as a product rotating on a clean branded background. Third, use a character-driven shot with facial movement or body motion. This mix reveals whether a model is broadly strong or only impressive in one style.

Do separate comparisons for text-to-video and image-to-video. Artificial Analysis compares models across multiple generation modes, and some models behave very differently depending on how much visual guidance they receive. A model that looks average in text-to-video can become much stronger once you give it a reference image.
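A test plan that varies only the model is easy to express as data. A sketch, assuming you either drive both tools from your own script or simply use the list as a checklist; the aspect ratio, duration, prompt wording, and reference-frame filename are hypothetical defaults, and nothing here calls either product's API.

```python
from dataclasses import dataclass, replace
from itertools import product
from typing import Optional


@dataclass(frozen=True)
class TestRun:
    model: str
    prompt_type: str
    prompt: str
    mode: str                           # "text-to-video" or "image-to-video"
    aspect_ratio: str
    duration_s: int
    source_image: Optional[str] = None  # same reference frame for both models in image-to-video


MODELS = ("HappyHorse", "PixVerse V6")
MODES = ("text-to-video", "image-to-video")
PROMPTS = {
    "cinematic": "Slow dolly shot through a neon-lit street in heavy rain",
    "commercial": "A product rotating slowly on a clean branded background",
    "character": "A woman turns toward the camera and breaks into a smile",
}


def build_test_matrix(aspect_ratio: str = "9:16", duration_s: int = 5,
                      source_image: str = "reference_frame.png") -> list[TestRun]:
    """Every prompt type x mode, run once per model with all other settings identical."""
    runs = []
    for (prompt_type, prompt), mode in product(PROMPTS.items(), MODES):
        base = TestRun(
            model=MODELS[0],
            prompt_type=prompt_type,
            prompt=prompt,
            mode=mode,
            aspect_ratio=aspect_ratio,
            duration_s=duration_s,
            source_image=source_image if mode == "image-to-video" else None,
        )
        # Only the model field changes between the paired runs.
        runs.extend(replace(base, model=model) for model in MODELS)
    return runs


matrix = build_test_matrix()
print(len(matrix))  # 12
```

Generating the pairs from one base record, rather than typing each run by hand, is what guarantees the two models see identical settings.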

What to record in your comparison

Record four practical metrics. First, do a blind preference check. Rename the clips so you do not know which model made which output, then pick the one you would actually publish. Second, note generation speed. A beautiful result that takes too long can wreck an iterative workflow. Third, calculate cost per usable clip, not just cost per minute. If one model is cheap but needs many reruns, your true cost rises fast. Fourth, count rerun frequency. How often does each model need another attempt before it gives you something clean enough to use?
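The blind check works best if a script, not you, decides which clip gets which neutral name. A minimal sketch using Python's standard `random` module; the filenames are hypothetical, and the sketch only builds the hidden key, leaving the actual copying or renaming of files to you.

```python
import random
from typing import Optional


def blind_labels(clip_paths: list[str], seed: Optional[int] = None) -> dict[str, str]:
    """Assign neutral labels (clip_A, clip_B, ...) to clips in shuffled order.

    Keep the returned mapping hidden while judging; reveal it only after you
    have picked the clip you would publish.
    """
    if len(clip_paths) > 26:
        raise ValueError("this sketch only supports up to 26 clips per round")
    shuffled = list(clip_paths)
    random.Random(seed).shuffle(shuffled)
    return {f"clip_{chr(ord('A') + i)}": path for i, path in enumerate(shuffled)}


key = blind_labels(["model_a_cinematic.mp4", "model_b_cinematic.mp4"])
# Copy or rename the files to these labels, judge them, then look up the winner:
# key["clip_B"] tells you which original file was shown as clip_B.
```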

Also write down specific failure modes. Did the camera drift? Did objects melt? Did the subject stop matching the prompt? Did the style suddenly shift mid-shot? Those notes matter more than vague impressions because they tell you whether a model is failing in ways your project can tolerate or in ways that break the shot.
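Tallying failure modes per model, then splitting them into ones your project can absorb and ones that break the shot, turns those notes into something comparable. A sketch with invented notes and an invented tolerance set; the model labels are placeholders, and which failures are tolerable depends entirely on your project.

```python
from collections import Counter

# Hypothetical review notes: (model, failure mode) for each rejected generation.
notes = [
    ("model_a", "camera drift"),
    ("model_a", "style shift"),
    ("model_b", "object melting"),
    ("model_b", "object melting"),
    ("model_b", "prompt mismatch"),
]

# Hypothetical project tolerance: drift can be stabilized or cropped in the edit.
TOLERABLE = {"camera drift"}


def failure_profile(notes: list[tuple[str, str]], model: str) -> Counter:
    """Count each failure mode recorded for one model."""
    return Counter(mode for name, mode in notes if name == model)


def blocking_failures(profile: Counter, tolerable: set[str]) -> int:
    """Total failures your project cannot work around."""
    return sum(count for mode, count in profile.items() if mode not in tolerable)


for model in ("model_a", "model_b"):
    profile = failure_profile(notes, model)
    print(model, dict(profile), "blocking:", blocking_failures(profile, TOLERABLE))
```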

If you are hoping for future flexibility, such as an open source image-to-video model, the ability to run an AI video model locally, or an open source license that permits commercial use, keep a separate note on access and integration. Those are future-facing factors, but they matter if you are building a pipeline rather than just generating one-off clips.

Use a decision rule that is easy to apply immediately. Pick PixVerse V6 if it gives you predictable value: solid quality, manageable speed, and lower cost per usable result. Choose HappyHorse only if your side-by-side test shows a clear visual gain that is large enough to outweigh any cost or access limitations. That keeps the choice grounded in outputs you can actually use, not just rankings or hype.
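The decision rule can be written down so it is applied the same way every time. A sketch, assuming you have already measured the challenger's blind win rate and each model's cost per usable clip in your own test; the 65% win-rate bar and the 1.5x cost ceiling are hypothetical thresholds, not recommendations from any benchmark, and the numbers in the example are made up.

```python
from dataclasses import dataclass


@dataclass
class TestResult:
    name: str
    cost_per_usable_clip: float  # measured in your own side-by-side test
    accessible: bool             # usable in your workflow today (UI, API, or weights)


def pick_model(default: TestResult, challenger: TestResult, challenger_blind_win_rate: float,
               min_win_rate: float = 0.65, max_cost_ratio: float = 1.5) -> str:
    """Keep the predictable default unless the challenger shows a clear, affordable visual gain.

    challenger_blind_win_rate: share of blind head-to-head picks the challenger won (0-1).
    min_win_rate and max_cost_ratio are hypothetical thresholds; set your own.
    """
    if not challenger.accessible:
        return default.name
    clear_visual_gain = challenger_blind_win_rate >= min_win_rate
    worth_the_cost = challenger.cost_per_usable_clip <= default.cost_per_usable_clip * max_cost_ratio
    return challenger.name if clear_visual_gain and worth_the_cost else default.name


# Made-up numbers for illustration only.
pixverse = TestResult("PixVerse V6", cost_per_usable_clip=1.20, accessible=True)
happyhorse = TestResult("HappyHorse", cost_per_usable_clip=1.50, accessible=True)
print(pick_model(pixverse, happyhorse, challenger_blind_win_rate=0.70))  # HappyHorse
print(pick_model(pixverse, happyhorse, challenger_blind_win_rate=0.55))  # PixVerse V6
```

Checking access first reflects the point above: a visual gain you cannot get into your workflow does not change the choice.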

Conclusion

The real answer to HappyHorse vs PixVerse V6 is not a single universal winner. PixVerse V6 has the strongest current case for value because WaveSpeedAI describes it as the cheapest per minute in the top tier, and that makes it the safer pick when budget, iteration volume, and accessibility matter most. Artificial Analysis strengthens that case by giving you a neutral way to compare quality, speed, and pricing across video models instead of guessing from isolated demos.

HappyHorse is the more intriguing quality play. The reported claim that HappyHorse 1.0 outperformed top-tier models including PixVerse V6 in the non-audio category is exactly the kind of signal that justifies direct testing. Add the sudden Reddit attention around it potentially beating Seedance 2.0, and it is clear why so many people want to know whether it is the next visual quality leader. But those signals are still best treated as prompts to test, not as reasons to commit blindly.

If you want lower cost and smoother adoption today, PixVerse V6 is the practical move. If you are willing to experiment for a possible edge in raw visual quality, HappyHorse is worth putting through a controlled side-by-side test. The best choice comes down to what matters more in your workflow: predictable value now, or the chance to capture better-looking non-audio output if HappyHorse really delivers on the early signals.