Kling 3.0 (Kuaishou): Features, API, and Pricing Guide

If you want to use Kling 3.0 effectively, the fastest path is to understand what it does best, how to access it, what the API supports, and when it makes more sense than open source video models.

What Kling 3.0 (Kuaishou) Is and When to Use This Video Model

Core capabilities of Kling 3.0

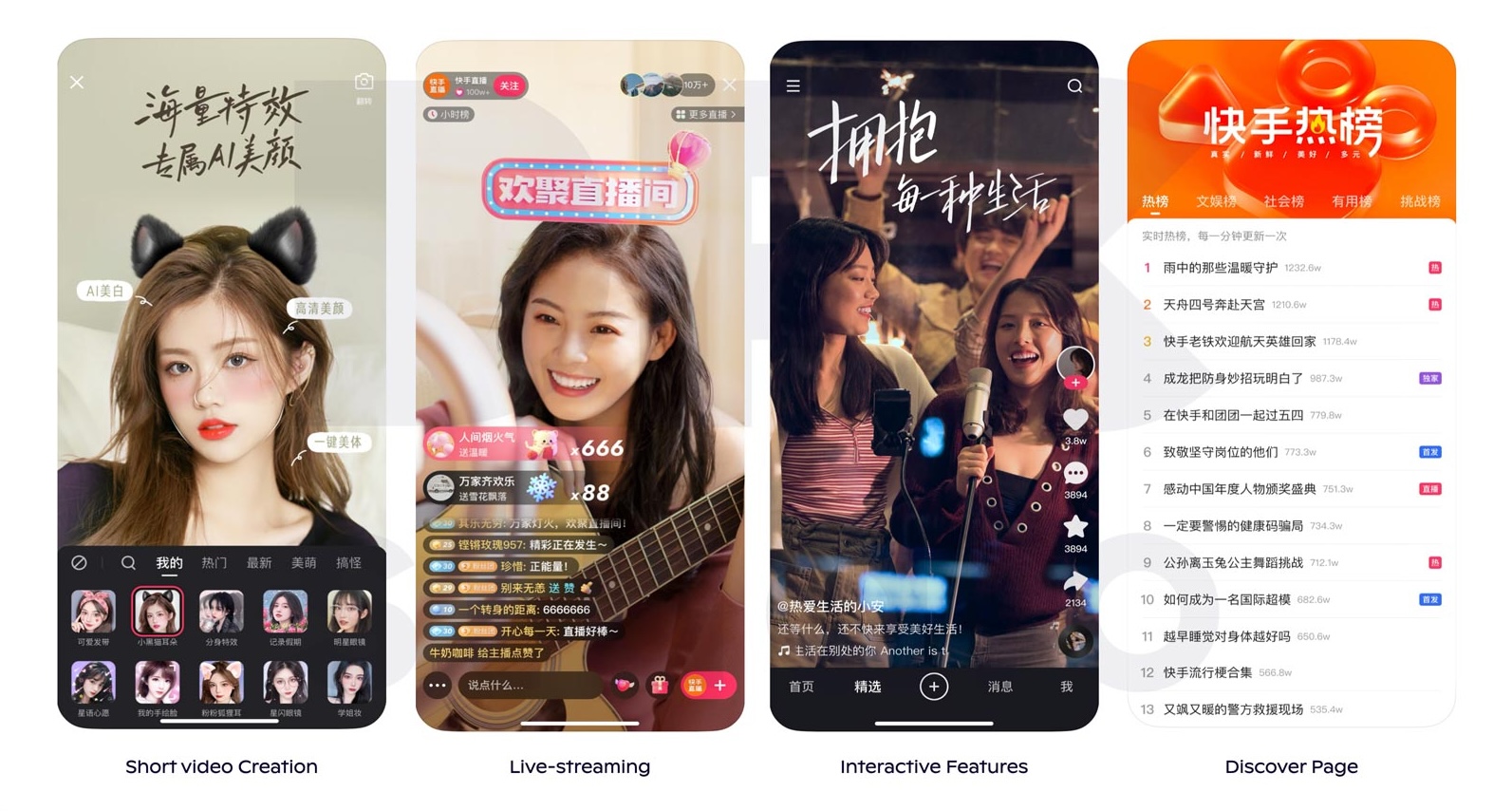

Kling 3.0 is an AI video generation model from Kuaishou, the company behind one of China’s biggest short-video platforms. That background matters because Kling appears built for practical video output rather than research demos. In day-to-day use, it sits in the hosted-model category alongside other polished commercial generators: you give it a prompt or reference image, wait for the render queue, and get a ready-to-edit clip without setting up GPUs, dependencies, or inference pipelines.

The reason creators keep checking Kling is simple: it aims for strong motion quality, cinematic framing, and easier workflows than a typical self-hosted stack. Instead of spending half a day trying to run an open source AI video generation model on limited VRAM, you can often get to a usable clip with a prompt, a style direction, and one or two reruns. For teams shipping ads, vertical social clips, concept visuals, or product scenes, that speed is often the real advantage.

Kling 3.0’s core workflows usually center on text-to-video and image-to-video generation. Text-to-video is the faster option when you want ideation, mood exploration, or a first-pass scene from a written concept. Image-to-video is where things become more controlled: you start from a keyframe, product shot, character portrait, or storyboard frame and ask Kling to animate it while preserving layout and identity more reliably than pure prompt-based generation.

Best use cases for text-to-video and image-to-video

Use text-to-video when you need broad exploration: “luxury skincare bottle on wet black stone, dramatic rim light, slow dolly-in, water droplets moving naturally, premium ad aesthetic” is the kind of prompt that gives you a fast concept clip. Use image-to-video when the exact bottle, exact character face, or exact art direction matters. If you already have a still image that is close to final, Kling can be a strong production shortcut.

This is where Kling’s hosted model earns its place: it is often a better fit than local models when you care about speed, visual polish, and not babysitting infrastructure. Choose Kling over an open source image-to-video model when you need smoother motion out of the box, less setup friction, and easier collaboration with nontechnical teammates. Choose it over a model you run yourself when hardware is your bottleneck or when deployment overhead would slow the project more than generation costs would.

Before starting, check the limitations that usually matter most. Access may vary by region or platform availability. Generation length can be capped by product tier or preset. Queue times may fluctuate during peak demand, especially on shared hosted systems. Output controls may be strong in some areas, like camera language and style prompting, but lighter in others, such as frame-exact editing or advanced timeline composition. If your workflow needs long-form sequences, strict shot continuity, or guaranteed deterministic outputs across dozens of clips, test those constraints immediately instead of assuming they will behave like a full VFX pipeline.

Kling 3.0 Features and Output Controls

Prompting options that affect results

The most useful Kling 3.0 features are the ones that improve first-pass usability: prompt adherence, visual realism, motion smoothness, strong image reference handling, and the ability to regenerate or revise a clip without rebuilding the whole concept from scratch. In practice, prompt quality drives more of the outcome than people expect. Short, dense prompts tend to work better than sprawling paragraphs because they reduce competing instructions.

A reliable structure is: subject + environment + action + camera + lighting + style. For example: “athletic woman in red windbreaker running through a neon-lit city alley at night, light rain, handheld tracking shot, realistic motion blur, cinematic contrast, high-detail urban reflections.” That format gives Kling clear priorities. If the result is too chaotic, trim adjectives before adding more. If the motion feels weak, strengthen the action verb and camera direction rather than restating style terms.
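The subject + environment + action + camera + lighting + style structure is easy to enforce with a tiny helper, so every prompt has the same shape and trimming adjectives means editing a single slot. This is a minimal Python sketch; the `ShotPrompt` class and its field names are illustrative and not part of any Kling SDK.

```python
from dataclasses import dataclass, fields


@dataclass
class ShotPrompt:
    """One field per slot in the subject + environment + action + camera + lighting + style pattern."""
    subject: str
    environment: str
    action: str
    camera: str
    lighting: str
    style: str

    def render(self) -> str:
        # Join the non-empty slots in declaration order so prompts stay consistent.
        parts = [getattr(self, f.name).strip() for f in fields(self)]
        return ", ".join(p for p in parts if p)


prompt = ShotPrompt(
    subject="athletic woman in red windbreaker",
    environment="neon-lit city alley at night, light rain",
    action="running toward camera",
    camera="handheld tracking shot",
    lighting="cinematic contrast",
    style="realistic motion blur, high-detail urban reflections",
)
print(prompt.render())
```

If a result is too chaotic, blank out or shorten the `style` slot first; if motion is weak, edit only `action` and `camera`.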

Prompt patterns vary by use case. For ads: “close-up product hero shot, premium commercial lighting, slow turntable movement, condensation beads, shallow depth of field.” For product demos: “clean studio background, product centered, smooth orbit camera, feature callout pacing, minimal distraction.” For social clips: “vertical framing, energetic movement, fast reveal, vibrant colors, creator-style authenticity.” For cinematic scenes: “wide establishing shot, controlled dolly, atmospheric haze, natural cloth simulation, dramatic backlight.” For character-driven shots: “medium close-up, subtle facial emotion, slight head turn, soft eye focus, consistent wardrobe details.”

Resolution, duration, camera movement, and scene control

The generation settings that matter most are aspect ratio, clip length, style presets, seed behavior (if exposed), and camera instructions. Decide aspect ratio before prompting. If you want TikTok, Reels, or Shorts output, prompt for vertical composition from the start, because cropping a horizontal generation later often wrecks the subject framing. Match clip length to the shot’s complexity: a short 3–5 second sequence with one clear action usually holds up better than a longer clip packed with multiple changes.

Camera language can dramatically improve outputs. Terms like “slow dolly-in,” “locked-off tripod,” “aerial descending shot,” “over-the-shoulder tracking,” and “gentle orbit” tend to be more useful than vague phrases like “make it dynamic.” For scene control, stick to one main subject and one dominant action per shot. If you ask for “crowd dancing, fireworks, drone move, costume changes, sunrise transition, cinematic reveal,” you are inviting artifacts.

For consistency across multiple generations, reuse a stable prompt spine and change only one variable at a time. Keep the subject descriptor identical, preserve wardrobe and color cues, and use the same image reference whenever possible. If Kling exposes seed or variation controls, log them in a spreadsheet or asset tracker. Regenerate from the closest successful result rather than starting fresh each time.
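The “stable spine, one variable at a time” approach can be sketched in a few lines of Python. The `SPINE` slots and the `one_change_variants` helper are hypothetical names chosen for illustration; the point is that every variant is a copy of the spine with exactly one slot swapped, so any difference in output can be traced to that slot.

```python
from typing import Dict, List

# A stable "prompt spine": these slots stay fixed across a test series.
SPINE: Dict[str, str] = {
    "subject": "woman in red windbreaker, short black hair",
    "environment": "neon-lit city alley at night",
    "action": "running toward camera",
    "camera": "handheld tracking shot",
    "style": "cinematic contrast",
}


def one_change_variants(spine: Dict[str, str], slot: str, options: List[str]) -> List[Dict[str, str]]:
    """Return copies of the spine where only `slot` differs, one copy per option."""
    if slot not in spine:
        raise KeyError(f"unknown slot: {slot}")
    variants = []
    for option in options:
        variant = dict(spine)  # copy keeps every other slot identical and in the same order
        variant[slot] = option
        variants.append(variant)
    return variants


def render(variant: Dict[str, str]) -> str:
    return ", ".join(variant.values())


for v in one_change_variants(SPINE, "camera", ["slow dolly-in", "locked-off tripod"]):
    print(render(v))
```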

To reduce common artifacts, simplify backgrounds when testing motion, avoid asking for legible on-screen text inside the generation, and be cautious with hands, glassware, and overlapping limbs. If realism breaks, lower scene complexity before changing the style. The underlying principle is simple: lock composition and subject identity first, then push cinematic flair after the base shot works.
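The scene-control and artifact advice above can be turned into a rough pre-flight check before spending credits. This Python sketch is a keyword heuristic only: the word lists are assumptions based on the advice in this guide, not anything Kling documents, and a clean result does not guarantee a clean render.

```python
import re
from typing import List

# Heuristic word lists based on the advice above; tune them for your own shot types.
CAMERA_MOVES = ["dolly", "orbit", "tracking", "aerial", "drone", "pan", "tilt", "crane"]
VAGUE_CAMERA = ["make it dynamic", "cool camera", "epic movement"]
RISKY_ELEMENTS = {
    "on-screen text": ["text", "caption", "subtitle"],
    "hands": ["hand", "hands", "fingers"],
    "glassware": ["glass", "glassware"],
}


def has_word(text: str, term: str) -> bool:
    """Whole-word match, so 'pan' does not fire on 'panorama' and 'hand' not on 'handheld'."""
    return re.search(rf"\b{re.escape(term)}\b", text) is not None


def lint_prompt(prompt: str) -> List[str]:
    """Return warnings for a video prompt; an empty list means nothing was flagged."""
    lowered = prompt.lower()
    warnings = []
    moves = [m for m in CAMERA_MOVES if has_word(lowered, m)]
    if len(moves) > 1:
        warnings.append(f"{len(moves)} camera moves ({', '.join(moves)}); pick one per shot")
    for phrase in VAGUE_CAMERA:
        if phrase in lowered:
            warnings.append(f"vague camera phrase: '{phrase}'")
    for label, terms in RISKY_ELEMENTS.items():
        if any(has_word(lowered, t) for t in terms):
            warnings.append(f"artifact-prone element: {label}")
    return warnings
```

For example, `lint_prompt("slow dolly-in and gentle orbit around a wine glass held in both hands, make it dynamic")` flags the two camera moves, the vague phrase, the hands, and the glass.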

How to Access the Kling 3.0 API and Build a Basic Workflow

Getting API access

Kling access usually follows the standard hosted-model pattern: an official platform or dashboard, possible waitlist or partner access for API usage, account verification, and credential setup for programmatic generation. Depending on product rollout stage and region, some users will see direct web access first while API access arrives through application, business inquiry, or platform-level approval. If you plan to build a workflow around it, confirm API availability before committing production timelines.

The basic setup is straightforward. Create an account on the official platform if available, review the available generation modes, then check whether API keys are issued directly in the dashboard or only after a separate developer approval flow. For team use, verify whether the account supports shared billing, role permissions, and asset history. Those details matter once multiple editors, marketers, or automation scripts touch the same pipeline.

Typical request flow for video generation

A standard API workflow usually looks like this: authenticate, submit a generation job, poll for status or receive a webhook, retrieve the completed asset, then store or route it into your content pipeline. For text-to-video, your request payload should include prompt, aspect ratio, duration, quality tier, and optional camera/style settings. For image-to-video, add a reference asset URL or uploaded file ID, plus motion instructions and strength controls if the API exposes them.
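A request builder keeps text-to-video and image-to-video payloads consistent. This is a hypothetical Python sketch: the field names (`mode`, `aspect_ratio`, `duration`, `quality`, `camera`, `image`, `motion_strength`) and the assumed 0–1 range for motion strength are placeholders, not Kling’s documented schema.

```python
from typing import Any, Dict, Optional


def build_payload(
    prompt: str,
    aspect_ratio: str = "16:9",
    duration_s: int = 5,
    quality: str = "standard",
    camera: Optional[str] = None,
    image_ref: Optional[str] = None,
    motion_strength: Optional[float] = None,
) -> Dict[str, Any]:
    """Assemble a generation request body. Field names are placeholders, not an official schema."""
    payload: Dict[str, Any] = {
        "mode": "image_to_video" if image_ref else "text_to_video",
        "prompt": prompt,
        "aspect_ratio": aspect_ratio,
        "duration": duration_s,
        "quality": quality,
    }
    if camera:
        payload["camera"] = camera
    if image_ref:
        # A reference asset URL or a previously uploaded file ID.
        payload["image"] = image_ref
        if motion_strength is not None:
            if not 0.0 <= motion_strength <= 1.0:
                raise ValueError("motion_strength is assumed to be in [0, 1]")
            payload["motion_strength"] = motion_strength
    return payload
```

Check the real field names, allowed durations, and value ranges against the official API documentation before sending anything.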

Status polling is the safe default. Submit the job, receive a job ID, then query a status endpoint every few seconds with backoff. Once the job is complete, download the returned video file and any preview thumbnails or metadata. Webhooks are better when you are generating at scale because they reduce unnecessary polling, but they require reliable endpoint handling and signature verification.
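Both patterns fit in a short Python sketch. `poll_until_done` takes a `get_status` callable (a stand-in for whatever status endpoint the API exposes) and assumes terminal states named `succeeded` and `failed`; `verify_webhook` assumes the provider signs the raw request body with HMAC-SHA256 and sends a hex digest. Confirm the actual state names and signing scheme in the official docs.

```python
import hashlib
import hmac
import time
from typing import Callable, Dict


def poll_until_done(
    get_status: Callable[[str], Dict[str, str]],
    job_id: str,
    initial_delay: float = 2.0,
    max_delay: float = 30.0,
    timeout: float = 900.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, str]:
    """Poll a status function with exponential backoff until the job finishes or times out."""
    delay, waited = initial_delay, 0.0
    while waited < timeout:
        status = get_status(job_id)
        if status.get("state") in ("succeeded", "failed"):  # assumed terminal states
            return status
        sleep(delay)
        waited += delay
        delay = min(delay * 2, max_delay)  # back off, but cap the interval
    raise TimeoutError(f"job {job_id} not finished after {timeout}s")


def verify_webhook(secret: bytes, raw_body: bytes, signature_hex: str) -> bool:
    """Check an HMAC-SHA256 signature over the raw request body (scheme assumed, not confirmed)."""
    expected = hmac.new(secret, raw_body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_hex)  # constant-time comparison
```

Injecting `sleep` keeps the poller testable without real waits; in production the default `time.sleep` applies.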

Developers usually care about four use cases: batch generation, image-to-video production, asynchronous integration, and asset routing into existing systems. Batch generation is useful for campaign testing, where one script launches ten prompt variants for the same concept. Image-to-video fits e-commerce, explainer animation, and storyboarding workflows. Asynchronous patterns matter because latency can vary from seconds to minutes depending on queue load and quality tier. Pipeline integration becomes easier if you treat Kling output as an intermediate asset, not the final deliverable.
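Batch submission with a concurrency cap is a natural fit for `asyncio`. In this sketch, `submit` is any async function that sends one request and returns a job ID; `fake_submit` is a hypothetical stand-in so the example runs offline, and the limit of three concurrent requests is an assumption, not a documented Kling quota.

```python
import asyncio
from typing import Awaitable, Callable, List


async def submit_batch(
    submit: Callable[[str], Awaitable[str]],
    prompts: List[str],
    max_concurrent: int = 3,
) -> List[str]:
    """Submit prompt variants concurrently, at most `max_concurrent` at a time.
    Returns job IDs in the same order as `prompts`."""
    gate = asyncio.Semaphore(max_concurrent)

    async def one(prompt: str) -> str:
        async with gate:  # stay under an assumed per-account concurrency limit
            return await submit(prompt)

    return await asyncio.gather(*(one(p) for p in prompts))


async def fake_submit(prompt: str) -> str:
    """Offline stand-in for a real API call; returns a made-up job ID."""
    await asyncio.sleep(0.01)
    return f"job-for:{prompt}"


prompts = [f"skincare bottle on wet stone, variant {i}" for i in range(10)]
job_ids = asyncio.run(submit_batch(fake_submit, prompts, max_concurrent=3))
```

Because `asyncio.gather` preserves input order, each job ID lines up with the prompt variant that produced it, which simplifies logging.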

For implementation, expect rate limits and plan retries with exponential backoff. Do not blindly resubmit the same failed request without checking whether the job actually entered the queue. Store source prompts, generation parameters, returned IDs, and output URLs in your database so you can reproduce wins later. For programmatic prompting, use template-based structures instead of freeform prose. A predictable schema like {subject} | {action} | {environment} | {camera} | {style} gives cleaner output and easier QA.
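The “check before you resubmit” rule and the record-keeping advice can be combined. In this Python sketch, `submit` and `find_job` are hypothetical callables you would wire to the real API: `find_job` looks up a job by a client-generated `request_key`, and attaching that key as `client_request_key` assumes the API accepts some form of caller-supplied ID. If it does not, you would need another way to detect whether a timed-out request was actually queued.

```python
import json
import sqlite3
import time
from typing import Callable, Dict, Optional


class TransientError(Exception):
    """Raised by the submit function for retryable failures (timeouts, 429s, 5xx)."""


def submit_with_retry(
    submit: Callable[[Dict], str],
    find_job: Callable[[str], Optional[str]],
    payload: Dict,
    request_key: str,
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Submit a job with exponential backoff. Before each retry, ask whether an earlier
    attempt already created a job (looked up by request_key) to avoid duplicate renders."""
    for attempt in range(max_attempts):
        if attempt > 0:
            existing = find_job(request_key)
            if existing:
                return existing
        try:
            return submit({**payload, "client_request_key": request_key})
        except TransientError:
            if attempt == max_attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ... with the defaults
    raise ValueError("max_attempts must be at least 1")


def record_job(db: sqlite3.Connection, request_key: str, payload: Dict, job_id: str) -> None:
    """Persist prompt, parameters, and returned ID so a good result can be reproduced."""
    db.execute(
        "CREATE TABLE IF NOT EXISTS jobs "
        "(request_key TEXT PRIMARY KEY, payload TEXT, job_id TEXT, output_url TEXT)"
    )
    db.execute(
        "INSERT OR REPLACE INTO jobs (request_key, payload, job_id) VALUES (?, ?, ?)",
        (request_key, json.dumps(payload, sort_keys=True), job_id),
    )
    db.commit()
```

The `output_url` column is left empty at submit time and filled in once the job completes.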

If you need to run multiple campaigns, separate prompt logic from asset management. Keep references in cloud storage, name outputs with project and version tags, and archive both successful and failed requests for later debugging. That turns a one-off experiment into a repeatable production system, which is the real point of using the API instead of clicking manually through every render.
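A consistent naming scheme is the cheapest part of that system to get right. This Python sketch shows one possible convention (project, shot, zero-padded version, draft or final, date); the format itself is an illustrative choice, not a Kling requirement.

```python
import re
from datetime import date
from typing import Optional


def output_name(project: str, shot: str, version: int, kind: str = "final",
                day: Optional[date] = None, ext: str = "mp4") -> str:
    """Build a sortable file name such as 'spring-promo_hero-bottle_v003_draft_2025-01-15.mp4'."""
    def slug(s: str) -> str:
        # Lowercase and collapse anything that is not a letter or digit into single hyphens.
        return re.sub(r"[^a-z0-9]+", "-", s.lower()).strip("-")

    day = day or date.today()
    return f"{slug(project)}_{slug(shot)}_v{version:03d}_{kind}_{day.isoformat()}.{ext}"
```

Zero-padding the version keeps `v010` sorting after `v009` in file browsers and cloud storage listings.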

Kling 3.0 Pricing, Credits, and Cost Planning for Real Projects

How pricing is usually structured

Kling pricing usually follows the familiar hosted AI pattern: limited free trial credits for testing, paid subscription tiers for web usage, and separate API billing or partner pricing for production-scale deployment. In some cases, higher-quality exports, longer durations, faster queue priority, or premium generation modes can consume more credits per run. That means the headline monthly plan rarely tells you the full cost until you understand how many credits each output actually burns.

When evaluating a paid tier, check whether credits refresh monthly, roll over, or expire. Also verify whether preview generations cost the same as final exports. Some platforms charge more for higher resolution, longer duration, or “pro” quality renders, and those multipliers matter quickly if your team iterates heavily. If API pricing is usage-based, ask whether billing is tied to seconds generated, jobs submitted, or a quality bucket.

How to estimate cost per video output

The easiest way to estimate spend is to model real behavior, not ideal behavior. Start with output length, then multiply by the number of attempts you realistically need. A five-second ad clip may require six to twelve runs before you land on one keeper, especially if you are refining motion and product framing. If your workflow includes both rough drafts and final renders, cost them separately. Draft runs should be your cheap exploration stage; final runs should happen only after the shot concept is validated.
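The draft-then-final model reduces to simple arithmetic. In this Python sketch the per-second credit rates (1.0 for drafts, 3.5 for finals) are invented for illustration; substitute the real rates from whatever plan you are evaluating.

```python
from dataclasses import dataclass


@dataclass
class RenderTier:
    name: str
    credits_per_second: float  # hypothetical rate; read the real one off your plan


def cost_per_keeper(clip_seconds: float, draft: RenderTier, final: RenderTier,
                    draft_attempts: int, final_attempts: int) -> float:
    """Credits to land one usable clip: cheap exploration runs plus a few final renders."""
    draft_cost = clip_seconds * draft.credits_per_second * draft_attempts
    final_cost = clip_seconds * final.credits_per_second * final_attempts
    return draft_cost + final_cost


draft = RenderTier("draft", credits_per_second=1.0)
final = RenderTier("final", credits_per_second=3.5)
# A five-second ad clip: ten exploratory drafts, then two final renders.
print(cost_per_keeper(5, draft, final, draft_attempts=10, final_attempts=2))  # prints 85.0
```

Under the same made-up rates, running all twelve attempts at the final tier would cost 5 × 3.5 × 12 = 210 credits, which is why the cheap exploration stage matters.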

For a small team, monthly spend often depends more on experimentation volume than on publication volume. If three people each test 10–20 generations per day, credits disappear much faster than expected. Add more room for retries when you need character consistency or product accuracy. Image-to-video can be cheaper overall than pure text-to-video if it reduces the number of failed exploratory renders.
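Team-level volume can be estimated the same way. This sketch assumes 21 working days per month, a flat 5 credits per generation, and a 25% padding for retries; all three numbers are placeholders to replace with your own.

```python
from typing import Tuple


def monthly_credit_range(people: int, gens_per_day: Tuple[int, int], credits_per_gen: float,
                         working_days: int = 21, retry_overhead: float = 0.25) -> Tuple[float, float]:
    """Low/high monthly credit estimate; retry_overhead pads for consistency or accuracy reruns."""
    low, high = gens_per_day
    scale = people * working_days * credits_per_gen * (1 + retry_overhead)
    return (low * scale, high * scale)


# Three people, 10-20 generations each per day, an assumed 5 credits per generation.
print(monthly_credit_range(3, (10, 20), credits_per_gen=5))  # prints (3937.5, 7875.0)
```

Before any retry padding, that is 630 to 1,260 generations a month from just three people, which is why experimentation volume usually dominates the bill.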

Cost-saving moves are practical. First, use lower-cost drafts to narrow composition, motion, and style. Second, refine prompts using short durations before extending the shot. Third, switch to image-to-video when you already know the look you want. Fourth, lock a prompt template for recurring content types so each new generation starts from a proven base instead of from zero. Controlled prompting saves credits as much as it improves results.

Before buying, verify the billing details that can change the real value of the plan: commercial rights, export limits, watermark rules, queue priority, credit expiration, API overage handling, and policies for unused credits. If you are producing client work, also confirm whether team members can share assets under one workspace and whether downloaded videos include any restrictions tied to the subscription tier used at generation time.

Kling 3.0 vs Open Source AI Video Generation Models

When hosted models beat local workflows

Kling’s biggest advantage over an open source AI video generation model is not ideology; it is throughput. A hosted system gives you immediate access to a tuned stack, managed inference, and a polished front end or API. No dependency conflicts, no CUDA troubleshooting, no surprise out-of-memory errors halfway through a batch. If you need a clip today for a campaign or presentation, that difference is massive.

Quality is another reason hosted models often win. Commercial providers usually optimize inference pipelines, queue allocation, and model serving for stable visual output. In plain terms, you are more likely to get smooth motion and usable realism without hand-tuning a sampler stack or chaining extra tools. For teams that care about production speed, Kling can beat a local workflow simply because fewer things break between prompt and export.

What to know about open source alternatives

That said, open source still matters, especially when privacy, controllability, or long-term cost outweighs convenience. If you run an AI video model locally, you gain direct control over inference settings, data handling, and deployment timing. You can test an open source image-to-video model, wire it into your own scripts, and avoid platform-level queues. But you pay for that freedom with GPU requirements, maintenance, and slower onboarding.

A typical local setup may demand a high-VRAM NVIDIA GPU, careful environment management, and patience with evolving repos. An open source transformer video model may look impressive in benchmarks or demo clips but still require substantial engineering before it behaves like a dependable production tool. Update cadence is another real tradeoff: open projects move fast, but breaking changes, stale documentation, and fragmented forks are common.

People researching Kling often also look at open source transformer video model projects, sometimes through niche queries like “happyhorse 1.0 ai video generation model open source transformer.” Those searches usually come from the same question: should I pay for hosted generation or build around an emerging local stack? The practical answer depends on which hurts more in your workflow: credit costs or engineering overhead.

Privacy is where local models can clearly win. If your assets are sensitive, self-hosting may be the safer route. But if your team is spending more time patching inference scripts than shipping edits, the economics flip fast. Hosted models like Kling are often better for agencies, growth teams, solo creators shipping frequently, and developers who want API-based automation without becoming their own model ops team. Local models are better when you need full custody over assets, custom pipelines, or experimental control beyond what a commercial UI allows.

Best Practices, Commercial Use, and Workflow Tips for Kling 3.0 Kuaishou

Prompt and asset preparation checklist

The best repeatable workflow is simple: storyboard first, write compact prompts, use strong image references, test short clips, then expand the winners. A quick storyboard forces decisions on composition and action before you spend credits. Even a six-frame sketch or slide deck is enough. From there, each shot should have one purpose: reveal product, establish location, show character reaction, or deliver motion.

Write prompts as production instructions, not poetic essays. Include subject, environment, action, framing, lighting, and style in one clean sentence or two. If identity matters, use a reference image with clear face visibility, wardrobe, and color separation. For products, crop tightly and remove background clutter before upload. Clean source assets almost always animate better than busy ones.

Test short clips first. A three- to five-second proof clip tells you whether the subject holds together, whether the camera move feels right, and whether the scene lighting survives motion. Once one generation works, expand carefully: change duration or intensity before you change the whole prompt. This is the safest way to scale a shot sequence in Kling 3.0 without losing continuity.

Troubleshooting is mostly about narrowing variables. If motion is weak, simplify the action and specify a clearer camera move. If the subject drifts, reduce scene complexity and anchor appearance details more tightly. If text rendering looks wrong, add captions later in an editor instead of forcing text into the generated frames. If hands or object interactions look unnatural, cut earlier, use tighter framing, or swap to image-to-video with a stronger starting frame. If lighting shifts too much, remove extra style adjectives and state a single consistent source like “soft morning window light” or “hard studio spotlight from camera left.”

Commercial-use questions to verify before publishing

Commercial use needs a separate check every time, especially if you are deciding between hosted and self-hosted options. With Kling, confirm commercial rights in the plan you are paying for, whether outputs can be used in ads, and whether there are restrictions on logos, likenesses, or sensitive categories. With local tools, the key issue is whether the model’s license permits commercial use. Some repos are fully permissive, some are research-only, and some mix code and weights under different terms. Never assume that because a model is downloadable, it is safe for paid client work.

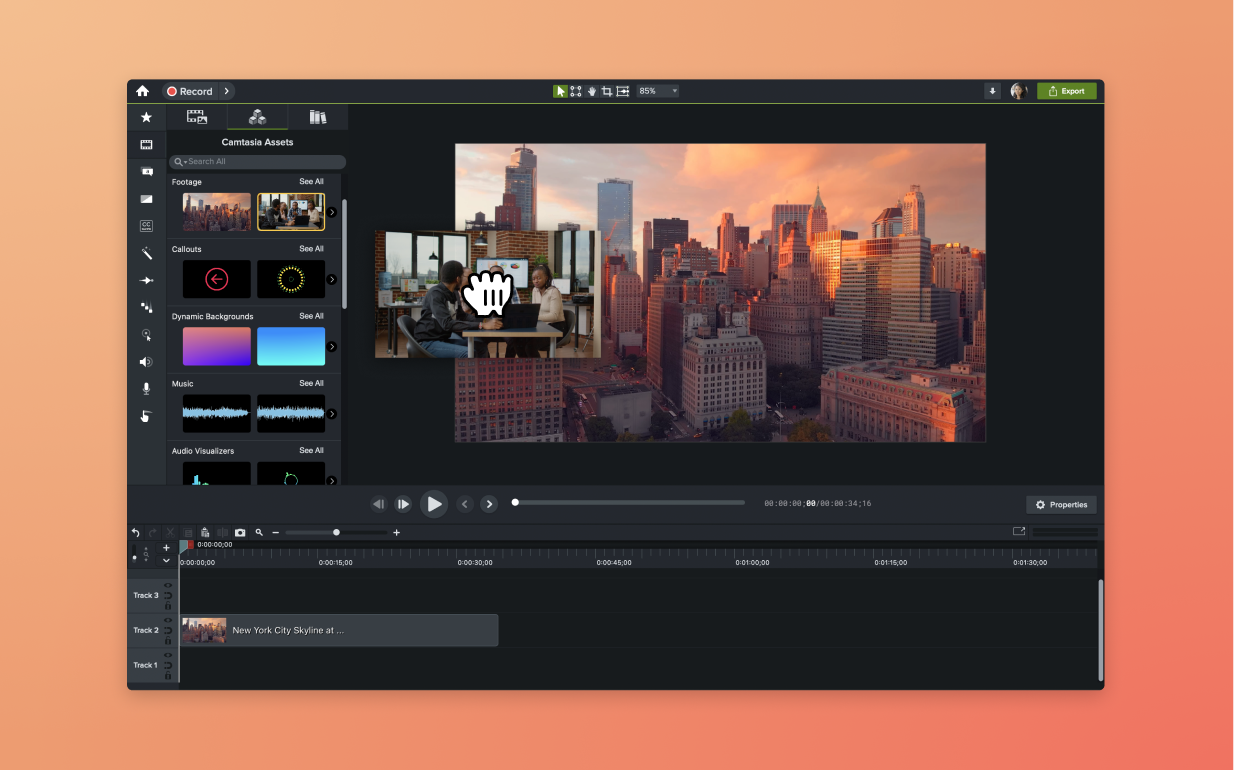

A practical stack around Kling usually includes a video editor for trimming and compositing, a captioning tool for on-screen text, an upscaler if needed, cloud storage for source and outputs, and a version tracker or spreadsheet for prompts and settings. The highest leverage habit is logging every successful shot: prompt, reference image, duration, aspect ratio, camera instruction, and what changed from the previous attempt. That turns random luck into a reusable production system.
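A plain CSV file is enough to start that shot log. This Python sketch uses the fields listed above as columns; the `ShotLogEntry` name and column names are illustrative choices, and the file opens cleanly in any spreadsheet tool.

```python
import csv
import os
from dataclasses import asdict, dataclass, fields


@dataclass
class ShotLogEntry:
    shot_id: str
    prompt: str
    reference_image: str
    duration_s: float
    aspect_ratio: str
    camera: str
    changed_from_previous: str


def append_log(path: str, entry: ShotLogEntry) -> None:
    """Append one row to a CSV shot log, writing the header the first time the file is created."""
    new_file = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, "a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=[fl.name for fl in fields(ShotLogEntry)])
        if new_file:
            writer.writeheader()
        writer.writerow(asdict(entry))  # csv quoting handles commas inside prompts
```

Filling in `changed_from_previous` on every row is what makes the log useful later: it records which single variable produced a better or worse result.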

Kling 3.0 is a strong fit when you want high-quality video generation without the drag of self-hosting. If you need fast concepting, clean image-to-video animation, or an API-ready workflow for campaign production, it can be the faster path. If you need absolute control, full privacy, or deep local customization, an open source route may still be better. The right choice comes down to four questions: do the features match your shot types, is the API ready for your pipeline, does the pricing fit your iteration volume, and do you want a hosted service or a local stack you maintain yourself?