Open-Sora: The Community Open Source Video Model Guide

If you want a practical way to understand Open-Sora, the open-source video model, start with what it can do today, how to run it, and where it fits against commercial video generators. The useful baseline from the current research is clear: Open-Sora is positioned as an open-source initiative for efficient, high-quality video generation, and a snippet describing Open-Sora 1.2 reports that it can generate 720p HD videos up to 16 seconds long from text, image, or video inputs. That gives you a realistic starting point for experiments instead of vague hype.

What Is the Open Sora Open Source Video Model?

Open-Sora in one sentence

Open-Sora is an open-source video generation initiative built to efficiently produce high-quality video while openly sharing the model, tools, and implementation details with the people actually building and testing this stuff.

That framing matters because it tells you what you’re getting right away: not just a flashy demo layer, but a project aimed at giving you access to the underlying system. The GitHub description calls it “Open-Sora: Democratizing Efficient Video Production for All,” which is the strongest verified signal in the research for how the project sees itself. If you care about reproducibility, local experimentation, inspecting workflows, or adapting pipelines, that open approach is the main draw.

What Open-Sora 1.2 can generate

The clearest capability reference available in the research is the figure reported for Open-Sora 1.2: 720p HD video generation up to 16 seconds. That number is useful because it sets expectations for planning prompts, testing motion, and designing short-form workflows. If you’re sketching an animatic, generating a concept teaser, or trying to validate visual direction, 16 seconds is enough to test pacing, style, and scene coherence without assuming long-form generation is already solved.

The same snippet also reports three supported input paths: text-to-video, image-to-video, and video-to-video generation. Practically, that means you can begin from a plain prompt, steer generation from a still image, or use an existing video clip as a conditioning source for transformation or stylistic iteration. That puts Open-Sora in the same broad space as the current wave of AI video systems, but with an open-source angle rather than a purely hosted product experience.

It’s worth keeping the positioning grounded. Open-Sora is best understood as a community-driven alternative in the broader text-to-video market, not as a verified one-to-one replacement for every proprietary system. The available research does not confirm exact parity with commercial tools, and it does not provide side-by-side benchmark data for quality, consistency, speed, or controllability. What it does confirm is that Open-Sora is trying to make high-quality video generation more accessible through shared code, model access, and transparent implementation details.

That distinction is especially important if you’ve also looked at OpenAI Sora. OpenAI’s Sora is described in the research as a text-to-video generative AI model where users can start from a prompt or upload an image, with styles like cinematic, animated, photorealistic, or surreal. Open-Sora sits near that same creative territory, but the practical attraction is openness. If your workflow depends on seeing how the stack works, tracking repo activity, and testing locally, Open-Sora is immediately interesting as an open-source AI video generation model rather than just another polished endpoint.

How to Use Open Sora Open Source Video Model for Text, Image, and Video Inputs

Text-to-video use cases

The first way to work with Open-Sora is prompt-only generation. This is the fastest route for concept exploration because you can test ideas without preparing source media. For early runs, keep prompts structured and specific: subject, environment, camera behavior, lighting, and style. A practical cinematic example would be: “A lone traveler crossing a foggy bridge at dawn, slow tracking shot, soft volumetric light, cinematic color grading, realistic motion.” That format gives the model anchors for scene composition and movement instead of forcing it to guess everything from a vague phrase.

For animated results, try prompts that describe shape language and motion clearly: “Stylized animated fox sprinting through a neon forest, smooth loop-like motion, saturated palette, whimsical lighting.” For photorealistic tests, lead with realism cues and camera intent. For surreal clips, stack unusual object and environment pairings while still controlling shot language. This prompt discipline matters because 720p and 16-second outputs are enough to reveal whether your scene logic works, but short enough that every token of guidance should carry weight.
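One way to keep that prompt discipline consistent across runs is to assemble prompts from named fields instead of typing them freehand. The sketch below is only an illustration: `ShotPrompt` and its field names are hypothetical helpers for this guide, not part of Open-Sora, and the output is just a string you would paste into whatever inference command the repository documents.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """Hypothetical container for the five prompt anchors: subject,
    environment, camera behavior, lighting, and style."""
    subject: str
    environment: str
    camera: str
    lighting: str
    style: str

    def render(self) -> str:
        # Join non-empty fields in a fixed order so prompts stay comparable across runs.
        parts = [self.subject, self.environment, self.camera, self.lighting, self.style]
        return ", ".join(p.strip() for p in parts if p.strip())


prompt = ShotPrompt(
    subject="A lone traveler",
    environment="crossing a foggy bridge at dawn",
    camera="slow tracking shot",
    lighting="soft volumetric light",
    style="cinematic color grading, realistic motion",
)
print(prompt.render())
```

Because each field is separate, you can change only the camera or only the lighting between runs and know exactly which variable you tested.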

Image-to-video starting points

Image-guided workflows are usually the best starting point when you want stronger visual consistency. If you already have concept art, product renders, comic panels, or still frames from a storyboard, an open-source image-to-video workflow gives you a more stable base than prompt-only generation. Instead of asking the model to invent both appearance and motion, you’re mostly asking it to preserve identity and animate from an established look.

Use image input when the style is already locked but the motion is not. That makes it useful for turning key art into short camera moves, creating scene previews from design mockups, or testing whether a static hero image can become a short promo shot. If you want iteration speed with less drift, image-guided generation is usually the smarter first pass.

Video-conditioned generation workflows

Video-input workflows are the most useful when you want transformation instead of pure invention. If you have a rough clip, previz pass, or live-action reference, video-conditioned generation can help with style transfer, look development, or visual reinterpretation. This is where Open-Sora starts to feel less like a toy and more like a creative pipeline component: you can preserve temporal structure from the input while changing mood, texture, or presentation.

A simple way to choose among the three modes is this. Use prompt-only generation for broad concept discovery. Use image-guided generation for style consistency and art direction. Use video-input workflows when timing, motion cues, or scene blocking already exist and you want a new treatment layered over them. That decision framework helps prevent wasted runs.
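That decision framework is simple enough to write down as a small function, which can help when several people on a team are queuing tests. This is a sketch under the assumptions in this section only: `Mode` and `choose_mode` are hypothetical names, and the rule simply prefers the richest source material you already have.

```python
from enum import Enum


class Mode(Enum):
    TEXT = "text-to-video"
    IMAGE = "image-to-video"
    VIDEO = "video-to-video"


def choose_mode(has_reference_clip: bool, has_locked_still: bool) -> Mode:
    """Pick an input mode from the assets already in hand."""
    if has_reference_clip:
        # Timing, motion cues, or blocking already exist: layer a new treatment on top.
        return Mode.VIDEO
    if has_locked_still:
        # Style is locked but motion is not: animate from the established look.
        return Mode.IMAGE
    # Nothing prepared yet: broad concept discovery from a prompt.
    return Mode.TEXT


print(choose_mode(has_reference_clip=False, has_locked_still=True).value)
```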

Keep your tests realistic. The reference point reported in the research is still 720p HD clips up to 16 seconds. So design experiments that fit that envelope: a single scene, one dominant action, one clear style target. If you overload the request with too many shot changes or multiple unrelated actions, you make evaluation harder. Start with one goal per generation: prove cinematic mood, prove character motion, prove style transfer, or prove subject consistency. That is the fastest way to learn whether Open-Sora fits your actual workflow instead of just looking interesting on paper.
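If you plan tests in a spreadsheet or script, a quick sanity check can catch requests that fall outside that envelope before you spend a run on them. The sketch below is an assumption-laden illustration: `Experiment`, `check`, and the limits are hypothetical planning helpers built from the reported 720p and 16-second figures, not limits enforced by Open-Sora itself.

```python
from dataclasses import dataclass

MAX_SECONDS = 16   # reported Open-Sora 1.2 clip length, not a verified hard limit
MAX_HEIGHT = 720   # reported resolution (720p)
GOALS = {"cinematic mood", "character motion", "style transfer", "subject consistency"}


@dataclass
class Experiment:
    goal: str
    seconds: float
    height: int
    dominant_actions: int = 1


def check(exp: Experiment) -> list:
    """Return a list of planning problems; an empty list means the test fits the envelope."""
    issues = []
    if exp.goal not in GOALS:
        issues.append("pick exactly one goal from GOALS")
    if not 0 < exp.seconds <= MAX_SECONDS:
        issues.append(f"keep duration within {MAX_SECONDS} s")
    if exp.height > MAX_HEIGHT:
        issues.append(f"keep height at or below {MAX_HEIGHT} px")
    if exp.dominant_actions != 1:
        issues.append("use one dominant action per clip")
    return issues


print(check(Experiment("style transfer", 12, 720)))      # []
print(check(Experiment("style transfer", 30, 1080, 3)))  # three issues listed
```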

Open Sora Open Source Video Model Setup: What to Expect Before Installing

Why local setup is more technical than cloud tools

The biggest practical difference between Open-Sora and hosted video generators is setup friction. One tutorial source in the research says it plainly: “This is not an easy install and carries many ways to go wrong.” That single warning is probably the most useful installation fact available because it sets the right expectations. If you’re used to cloud tools where you log in, type a prompt, and render, Open-Sora will feel much closer to a developer deployment than a consumer creative app.

That usually means dealing with repositories, model files, environment setup, command-line steps, and troubleshooting dependencies before you ever render a clip. Even if a tutorial claims you can get it running within the hour, you should read that as “possible with luck and experience,” not guaranteed. The practical move is to assume the first attempt may fail and to budget time for fixes.

A realistic pre-install checklist

Before doing anything else, go straight to the official GitHub repository. That should be your source of truth for model files, installation instructions, updates, release notes, and issue discussions. If the repo has changed since the last video tutorial or blog post you found, the repo wins. Check whether there are recent commits, active issues, updated setup steps, and any notes on model versions like Open-Sora 1.2.

A solid readiness checklist looks like this:

- You’re comfortable in the command line.

- You know how to manage environments and dependencies.

- You have enough local compute to attempt video model inference.

- You’re willing to troubleshoot installation failures.

- You can spare time for small validation runs before real projects.

That last point matters. Don’t make your first test a mission-critical deliverable. Make it a simple benchmark clip that proves the install works.

There are also some important unknowns in the current research. Verified hardware minimums, VRAM requirements, dependency specifics, and OS prerequisites are not confirmed in the provided material. So if you’re trying to run an AI video model locally, do not assume the machine that handled a smaller image model will automatically handle this. Check the repository for exact requirements before deployment, and verify whether model weights, inference scripts, and acceleration options have changed.
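Before comparing your machine against whatever the repository lists, it helps to collect the basic facts in one place. The following is a minimal sketch using only the Python standard library; `preflight` is a hypothetical helper, and it deliberately reports facts without judging them, because the actual requirements are not confirmed in the research.

```python
import platform
import shutil
import sys


def preflight() -> dict:
    """Collect basic machine facts; this does not decide whether they are sufficient."""
    return {
        "python": sys.version.split()[0],
        "os": platform.system(),
        "git_on_path": shutil.which("git") is not None,
        # nvidia-smi ships with NVIDIA drivers; finding it only hints that a driver is installed.
        "nvidia_smi_on_path": shutil.which("nvidia-smi") is not None,
        # Free space on the current drive, in gigabytes (model files can be large).
        "free_disk_gb": round(shutil.disk_usage(".").free / 1e9, 1),
    }


for key, value in preflight().items():
    print(f"{key}: {value}")
```

Paste the output next to the requirements in the official repository and resolve any mismatch before you download model files.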

If your real goal is production speed this week, a commercial tool may still get you there faster. But if your goal is control, inspectability, and learning how an open-source transformer video model behaves under your own conditions, the setup effort can be worth it. Just go in with a developer mindset: verify the repo, isolate the environment, run the smallest test first, and treat every dependency warning as something to resolve before scaling up.

Open Sora Open Source Video Model vs OpenAI Sora, Runway, and Pika

When Open-Sora makes more sense

Open-Sora makes the most sense when open access is part of the requirement, not just a nice bonus. If you want to inspect how the workflow is assembled, run tests locally, experiment with implementation details, or build internal processes around an open-source AI video generation model, Open-Sora offers something proprietary systems generally do not: visibility into the stack itself. The verified framing from the research supports that directly: Open-Sora is an open-source initiative focused on efficient, high-quality video generation and on sharing the model, tools, and details with the broader builder ecosystem.

Against OpenAI Sora, the high-level contrast is straightforward. Both belong to the text-to-video space, but OpenAI Sora is presented as a proprietary product experience, while Open-Sora is framed around open-source access. The research confirms OpenAI Sora supports prompt-led creation and image uploads. For Open-Sora 1.2, the reported support extends to text, image, and video inputs, with a cited output reference of 720p HD clips up to 16 seconds. If your workflow depends on testing all three input modes in an open environment, that’s a real point in Open-Sora’s favor.

When commercial tools may be faster

Commercial tools may be the better move when speed of onboarding matters more than local control. Runway and Pika are regularly treated as AI video heavyweights in the broader market context, and that label matters for practical reasons: polished UI, less setup friction, and a faster path from prompt to result. If you need to hand a workflow to a non-technical teammate tomorrow, convenience can outweigh openness.

The current research does not support claiming side-by-side quality wins, better motion, lower cost, or stronger reliability for any specific platform in this comparison. There are no verified benchmark tables, no confirmed pricing analysis, and no exact feature parity map in the provided sources. So the honest comparison is about operating model, not scorekeeping. Open-Sora appears promising for experimentation and technical workflows. Commercial platforms appear stronger for immediate accessibility and lower setup burden.

A useful way to frame the decision is this: choose Open-Sora when you care about controllability, repo-level transparency, and the ability to shape your own video generation environment. Choose a commercial tool when you care more about a finished product experience, faster first results, and fewer installation headaches. If you’re also comparing adjacent projects such as happyhorse 1.0, another open-source transformer model for AI video generation, the same rule applies: the more you value open infrastructure and custom experimentation, the more these open systems become worth the effort.

Best Practical Use Cases for the Open Sora Open Source Video Model

Prototype content quickly

Open-Sora is a strong fit for rapid concept videos where you need to test an idea before committing to full production. The reported 16-second clip length is well suited to pitch visuals, mood snippets, opening shots, and short narrative beats. If you’re trying to validate whether a sci-fi hallway should feel sterile or dreamlike, whether a character entrance needs a slow push-in or handheld energy, or whether a product teaser should lean sleek versus surreal, short-form generation is enough to make the decision.

That makes the model useful for visual storyboarding too. Instead of relying only on static frames, you can test movement, timing, and transitions in a compact format. For previsualization, that’s often more valuable than chasing long outputs.

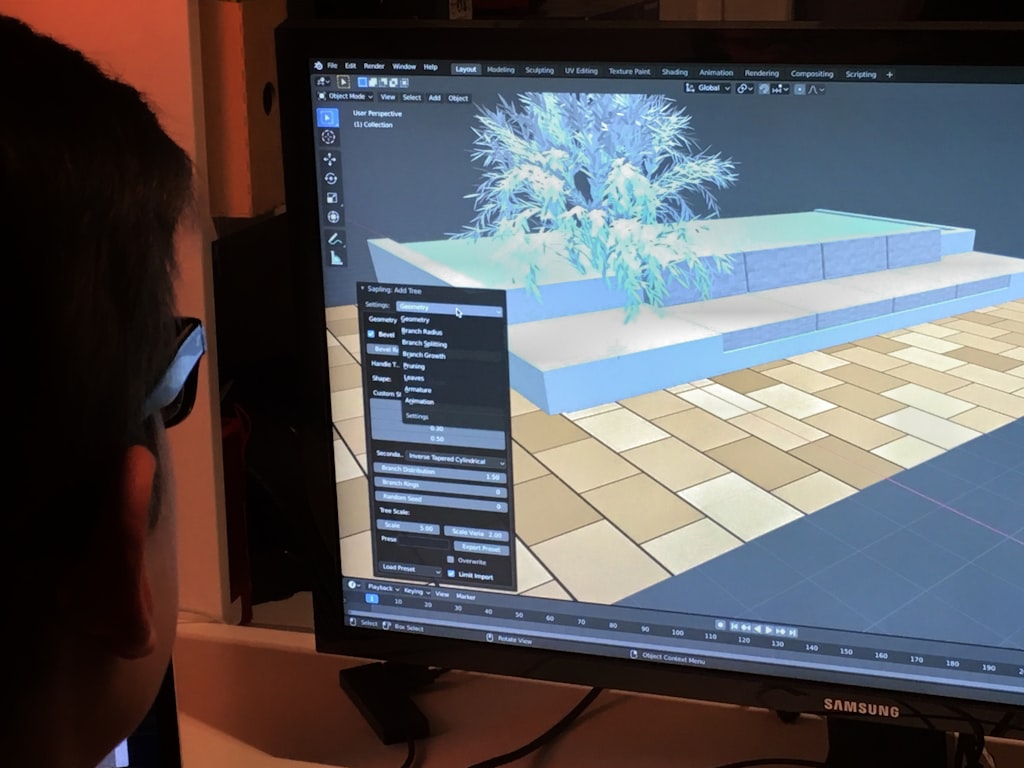

Test local AI video workflows

If your goal is to run an AI video model locally, Open-Sora becomes useful beyond the clip itself. It gives you a way to test environment setup, media preprocessing, prompt strategies, and evaluation methods around an open-source transformer video model. You can use it to see how your machine behaves, how long iterations take, and where your bottlenecks appear before building anything larger.

This is also where several related goals converge. Looking for an open-source AI video generation model, looking for an open-source image-to-video model, and wanting to run an AI video model locally all point to the same practical need: control over the pipeline. If you’re trying to create repeatable internal workflows instead of depending entirely on a black-box hosted service, Open-Sora gives you something concrete to evaluate.

Build around an open-source video model

Developers and technical teams usually get the most value from Open-Sora when they need transparency and reproducibility. Open platforms can make it easier to inspect model behavior, document exact conditions for a generation run, and test process changes over time. That doesn’t automatically make them production-ready, but it does make them better for R&D and prototyping workflows where understanding the system matters.
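Documenting the exact conditions of a run does not need special tooling. A small JSON record saved next to each clip is often enough. The sketch below is illustrative only: `build_manifest` and its fields are hypothetical, the `seed` field assumes your inference setup exposes a seed, and the version string is whatever you note from the repository.

```python
import json
import time


def build_manifest(prompt: str, mode: str, seed: int, model_version: str, notes: str = "") -> dict:
    """Describe one generation run so it can be repeated or compared later."""
    return {
        "prompt": prompt,
        "mode": mode,                    # e.g. "text-to-video"
        "seed": seed,                    # assumption: your setup lets you fix a seed
        "model_version": model_version,  # as recorded from the repository
        "notes": notes,
        "created_utc": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
    }


def to_json(record: dict) -> str:
    # Sorted keys keep diffs between two runs easy to read.
    return json.dumps(record, indent=2, sort_keys=True)


record = build_manifest(
    prompt="A lone traveler crossing a foggy bridge at dawn",
    mode="text-to-video",
    seed=1234,
    model_version="1.2",
    notes="first install validation clip",
)
print(to_json(record))
```

Write the string to a file beside the rendered clip, and two runs that differ in only one field become easy to compare.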

There’s also a licensing question people often need to resolve early: whether the project’s open-source license permits commercial use. The current research confirms the project is open source, but you should still verify the exact license terms in the repository before using outputs or tooling in any commercial setting. Don’t assume every open model has the same rights profile.

A quick decision framework helps here. Choose Open-Sora if you need local control, model transparency, or workflow reproducibility. Reach for a hosted generator if your priority is simple output speed with minimal setup. If your project lives in concept testing, short demo clips, style experiments, or internal tooling validation, Open-Sora is already in a useful zone. If your project needs guaranteed support, clear integration docs, and frictionless onboarding, the current research doesn’t verify that level of maturity yet.

How to Evaluate Open Sora Open Source Video Model for Your Workflow

Questions to answer before adoption

The fastest way to evaluate Open-Sora is to match its reported capabilities against your actual workflow requirements. Start with input type support. Do you need pure prompt-based generation, image guidance, or video-conditioned transformation? The current research reports that Open-Sora 1.2 supports all three, which is a solid starting point if your workflow spans concept generation, style transfer, and iterative refinement.

Next, check your output expectations. The figure reported in the available material is 720p HD generation up to 16 seconds. If your project only needs short prototypes, motion tests, or stylized proof-of-concepts, that may be enough. If you need longer sequences, guaranteed continuity across multiple scenes, or polished delivery assets, you should treat those as unverified until you confirm them yourself.

Then ask the hard setup question: how much local installation friction are you willing to tolerate? One source explicitly warns that installation is not easy and has many ways to fail. That means setup tolerance is not a side issue; it’s a core adoption criterion. If your team wants results fast and has no appetite for dependency debugging, you may want to benchmark Open-Sora against a commercial baseline before spending too much time getting it running.

Finally, decide whether open-source access actually matters for the project. If you need visibility into how the system works, want to test reproducible pipelines, or plan to build around the tooling, Open-Sora becomes far more compelling.

A simple evaluation checklist

Use this checklist before committing serious time:

- Check the latest GitHub repository activity.

- Read the current installation docs, not just third-party tutorials.

- Review release notes for the latest model version.

- Scan the issue tracker for unresolved setup problems and responsiveness.

- Confirm the license if commercial use is relevant.

- Define one test generation goal before installing.

- Compare that test against a commercial tool baseline.

Those are your best maturity signals from the currently available research. Repo activity, documentation quality, release notes, and issue tracker health tell you more about practical usability than broad marketing language ever will.
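If you want to track that checklist across a team, it can live in a few lines of code rather than in someone’s memory. This is a sketch only: the item labels paraphrase the list above, and `open_items` is a hypothetical helper, not something the project provides.

```python
CHECKLIST = (
    "repo activity checked",
    "current install docs read",
    "release notes reviewed",
    "issue tracker scanned",
    "license confirmed (if commercial)",
    "one test goal defined",
    "commercial baseline compared",
)


def open_items(done: set) -> list:
    """Return checklist items not yet completed, in their original order."""
    unknown = set(done) - set(CHECKLIST)
    if unknown:
        # Catch typos early so a misspelled item is not silently counted as done.
        raise ValueError(f"unknown checklist items: {sorted(unknown)}")
    return [item for item in CHECKLIST if item not in done]


done = {"repo activity checked", "one test goal defined"}
for item in open_items(done):
    print("TODO:", item)
```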

There are also meaningful evidence gaps you should plan around. The research does not confirm API details, integration documentation, enterprise support signals, or benchmark data for reliability, throughput, and consistency. That means you should not assume easy automation, product-grade support, or smooth scale-up unless the repository now shows those details clearly.

The lowest-risk path is simple: pick one narrow experiment. Maybe that’s generating a 10- to 16-second cinematic clip from a text prompt, animating one piece of key art, or transforming one short reference clip. First confirm install stability. Then verify that the output quality meets your bar. Then compare the result to a commercial option like Runway or Pika on the same creative task. If Open-Sora gives you enough control or transparency to justify the extra setup effort, keep going. If not, you’ve learned that cheaply and early.
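That sequence is a set of ordered gates: each one only matters if the previous one passed. A minimal sketch of the logic, with `next_step` and its argument names as hypothetical labels (use `None` for anything you have not tested yet):

```python
from typing import Optional


def next_step(install_stable: Optional[bool],
              quality_ok: Optional[bool],
              worth_setup: Optional[bool]) -> str:
    """Walk the three gates in order; None means 'not tested yet'."""
    gates = [
        (install_stable, "confirm install stability"),
        (quality_ok, "check output quality against your bar"),
        (worth_setup, "compare with a commercial baseline on the same task"),
    ]
    for result, action in gates:
        if result is None:
            return f"next: {action}"
        if result is False:
            # Stop at the first failed gate; later gates are not worth testing yet.
            return f"stop: failed at '{action}'"
    return "continue with Open-Sora"


print(next_step(True, None, None))
```

The point of the ordering is cost: a failed install is the cheapest thing to discover, so it goes first.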

Open-Sora is the right choice when openness is part of the workflow requirement, not just a curiosity. If you want a system you can inspect, test locally, and shape around your own process, Open-Sora is already worth a serious trial. If you want instant onboarding and a more polished path to finished clips, commercial tools may still be the faster route. The best choice comes down to three things: how much you value openness, how much setup effort you can absorb, and whether your video workflow is centered on experimentation, consistency, or speed.