Open Source vs Proprietary AI Video Models: The 2026 Landscape

In 2026, choosing an AI video model is no longer just about output quality. It is a practical decision about cost, control, licensing, deployment, and how fast you can ship useful video workflows.

Open Source vs Proprietary AI Video Models in 2026: What Has Actually Changed

Why open-source AI video deployment is easier now

The biggest shift this year is not that open models suddenly became magical. It is that deploying them stopped being a specialist-only project. Tools like Hugging Face, LangChain, Ollama, and LM Studio have lowered the barrier so much that a small team can now stand up a usable video workflow without hiring a dedicated ML engineer first. That matters because a lot of real video work is repetitive: product clips, ad variants, social edits, image-to-video experiments, branded motion templates, and internal creative automation. Those workflows benefit more from control and repeatability than from chasing a single benchmark win.

Hugging Face has become the practical starting point for discovering, testing, and packaging open models. Ollama and LM Studio, although built mainly around language models rather than video models, made local model management feel much closer to installing software than building infrastructure from scratch, and they can cover the text side of a pipeline such as drafting prompts or scripts. LangChain helps when the video model is only one step in a larger pipeline that includes prompts, asset retrieval, review loops, or automated publishing. The result is simple: the old assumption that an open-source AI video generation model is only for researchers no longer holds for many production use cases.

This is especially noticeable for teams that want to run an open-source image-to-video model in a private environment, connect generation to internal asset libraries, or build repeatable brand-safe outputs. If you are creating the same type of short-form video every week, open deployment now looks less like a moonshot and more like an ops choice. You still need hardware planning and workflow discipline, but the setup burden is lower than it was even a year ago.

Why proprietary platforms still lead in convenience

That said, proprietary platforms still win the convenience battle in a lot of day-to-day work. Managed infrastructure means no GPU provisioning, no driver issues, no queue tuning, no model packaging, and no patching. You log in, generate, edit, export, and move on. For a marketing team trying to launch a campaign by Friday, that convenience is not a luxury. It is the deciding factor.

This is why the real 2026 framing is not quality versus cost. It is flexibility versus convenience. Proprietary AI video platforms still tend to offer smoother onboarding, bundled editing tools, asset management, templates, collaboration layers, and support. Open-source stacks now compete far better than before, but they ask you to make more decisions about deployment, maintenance, and legal review.

The perception gap is also still real. Many buyers continue to assume proprietary vendors are stronger because they train on larger datasets and operate with more infrastructure and capital. In some cases that is true, especially around reliability and polished output under deadline pressure. But the gap is narrowing fast, and for teams that care about control, privacy, and workflow fit, the open source vs proprietary AI video model decision now looks far more balanced than it did in earlier cycles.

How to Compare Open Source vs Proprietary AI Video Models for Real Work

The 6 buying criteria that matter most

The fastest way to compare tools is to stop asking which model is “best” and start asking which one survives your actual workflow. Six criteria matter most in real buying decisions: output quality, reliability, tooling, legal risk, deployment flexibility, and long-term cost. I also recommend treating customization as a sub-score inside flexibility, because that is where open stacks often create their biggest advantage.

Quality still matters, but not in isolation. A model that creates beautiful clips and crashes under load is a bad production choice. Reliability means uptime, render consistency, queue stability, and whether the platform behaves predictably during a campaign push. Tooling includes editors, prompt controls, templates, integrations, versioning, and collaboration. Legal risk covers license terms, commercial rights, training data concerns, customer contract compatibility, and indemnity. Deployment flexibility asks whether you can self-host, run privately, integrate with internal systems, or run an AI video model locally. Long-term cost includes API spend, infrastructure, storage, labor, maintenance, and switching cost.

Proprietary models are still often perceived as stronger because of larger datasets, infrastructure, and investment. That perception is not baseless. Managed platforms usually have more polished output and smoother scaling. But open-source is narrowing the gap, especially for repeatable formats where custom tuning and workflow control matter more than raw wow factor.

A simple scorecard for teams and solo creators

Use a weighted scorecard instead of gut feel. For marketing campaigns, weight speed, reliability, editing workflow, and legal clarity more heavily. For product demos, weight consistency, brand control, and integration with existing assets. For social content, weight turnaround time and cost per variation. For animation, weight character consistency and prompt control. For internal creative pipelines, weight deployment flexibility and total cost of ownership.

A simple scorecard can look like this:

- Quality: 20%

- Reliability: 20%

- Tooling and UX: 15%

- Legal and licensing fit: 15%

- Deployment flexibility and customization: 20%

- Long-term cost: 10%

If you are a solo creator, you may shift more weight to cost and ease of use. If you are an agency, move more weight to reliability and support. If you are building an internal video engine, move more weight to flexibility, privacy, and lock-in risk.
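The scorecard above can be sketched as a small weighted average. This is a minimal illustration, not a prescribed tool: the criterion keys, the 1-5 rating scale, and the example ratings for the two candidates are all assumptions made up for the demo.

```python
# Weights from the example scorecard above, expressed as fractions of 1.0.
WEIGHTS = {
    "quality": 0.20,
    "reliability": 0.20,
    "tooling_ux": 0.15,
    "legal_fit": 0.15,
    "flexibility": 0.20,   # deployment flexibility and customization
    "long_term_cost": 0.10,
}


def weighted_score(ratings: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weighted average of per-criterion ratings (assumed 1-5 scale)."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    return sum(weights[name] * ratings[name] for name in weights)


# Hypothetical ratings; replace them with numbers from your own tests.
hosted_tool = {"quality": 4, "reliability": 5, "tooling_ux": 5,
               "legal_fit": 4, "flexibility": 2, "long_term_cost": 3}
open_stack = {"quality": 4, "reliability": 3, "tooling_ux": 3,
              "legal_fit": 3, "flexibility": 5, "long_term_cost": 4}

for name, ratings in (("hosted tool", hosted_tool), ("open stack", open_stack)):
    print(f"{name}: {weighted_score(ratings):.2f}")
```

To reflect a different profile, such as a solo creator or an agency, pass a different `weights` dictionary rather than editing the ratings. The function refuses weights that do not sum to 1.0, so the results stay comparable across profiles.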

Before choosing between a hosted API and an open-weight stack, run this checklist:

- What exact video types are you producing: product videos, UGC ads, animated clips, image-to-video, or explainers?

- Do you need self-hosting, private deployment, or local inference?

- How often will you generate at scale?

- Do you need recurring brand style or character consistency?

- Are commercial terms clear enough for client delivery?

- Can your team maintain GPUs, updates, and integrations?

- What is your acceptable render latency?

- What happens if the vendor changes pricing or terms?

That checklist turns the open source vs proprietary AI video model choice into an operational decision instead of a vague preference debate.

When an Open Source AI Video Generation Model Is the Better Choice

Best-fit use cases for open models

Open source is often the better route when you know your workflow will repeat and you want ownership over how it evolves. If you are building weekly product clips, localized ad variants, training videos, template-based explainers, or branded social content, an open-source AI video generation model can deliver lower long-term cost and much tighter control over outputs. The value grows over time because you are not just generating videos. You are building a reusable system.

Customization is the strongest reason to go open. Both open and closed models can often be fine-tuned, but open models offer deeper flexibility because you have direct access to the weights and the surrounding pipeline. In practice, that means you can tune for recurring brand style, visual pacing, composition rules, character appearance, title-card behavior, or standard transitions. If your team needs the same spokesperson style, mascot, scene framing, or product showcase structure every week, open models make that much easier to formalize.

This is also where many related searches come from. If you are comparing an open-source transformer video model, testing an open-source image-to-video model, or digging into niche queries like "happyhorse 1.0 ai video generation model open source transformer", you are probably looking for control, inspectability, and the ability to adapt the stack to a specific creative process. That is exactly where open systems are strongest. They are less appealing when you want a perfect out-of-the-box interface and more appealing when you want a modifiable engine.

How to run an AI video model locally or in a private stack

If you want to run an AI video model locally or in a private environment, validate four things before you commit. First, hardware. Video generation is far more demanding than text generation, so GPU memory, storage throughput, and batch planning matter immediately. Second, latency. A local workflow may protect privacy and cut API spend, but generation can still be too slow for fast-turn campaign work if the hardware is undersized. Third, maintenance effort. Someone must handle version updates, dependencies, monitoring, and failure recovery. Fourth, integration requirements. If your workflow depends on DAM systems, approval tools, CMS publishing, or asset libraries, the model is only one part of the stack.
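The hardware and latency questions can be turned into a rough capacity estimate before any GPU is bought or rented. The sketch below is a back-of-the-envelope calculation under stated assumptions. The function names, the rerun rate, and every number in the example are hypothetical, and real render times depend heavily on the model, resolution, and clip length.

```python
import math


def weekly_gpu_hours(clips_per_week: int, render_minutes_per_clip: float,
                     rerun_rate: float = 0.0) -> float:
    """Estimate GPU hours per week.

    rerun_rate is the assumed number of extra renders per delivered clip
    (0.25 means one rerun for every four clips).
    """
    if clips_per_week < 0 or render_minutes_per_clip < 0 or rerun_rate < 0:
        raise ValueError("inputs must be non-negative")
    total_renders = clips_per_week * (1 + rerun_rate)
    return total_renders * render_minutes_per_clip / 60


def gpus_needed(gpu_hours: float, usable_hours_per_gpu: float) -> int:
    """Round up to whole GPUs given the hours each GPU is actually available."""
    if usable_hours_per_gpu <= 0:
        raise ValueError("usable_hours_per_gpu must be positive")
    return math.ceil(gpu_hours / usable_hours_per_gpu)


# Hypothetical workload: 300 clips a week, 12 minutes per render, 25% reruns,
# and each GPU usable for 40 hours a week.
hours = weekly_gpu_hours(clips_per_week=300, render_minutes_per_clip=12, rerun_rate=0.25)
print(f"GPU hours per week: {hours:.1f}")
print(f"GPUs needed: {gpus_needed(hours, usable_hours_per_gpu=40)}")
```

If the estimate lands above what the budget allows, that is usually a sign to reduce volume, accept longer turnaround, or keep part of the workload on a hosted platform.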

A practical self-hosting checklist looks like this:

- Confirm GPU availability and budget

- Estimate output volume per week

- Define acceptable render time per clip

- Test prompt consistency across repeated briefs

- Verify support for image-to-video, style control, and fine-tuning

- Plan storage for source assets and outputs

- Build logging for failed jobs and reruns

- Review licenses before commercial deployment
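The "logging for failed jobs and reruns" item in the checklist above can start as a thin wrapper around whatever function renders a clip. This is a minimal sketch using only the Python standard library. `run_with_retries` and the job identifiers are hypothetical names, and the render function itself is assumed to be supplied by your own stack.

```python
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")
logger = logging.getLogger("render_jobs")


def run_with_retries(job: Callable[[], T], job_id: str,
                     max_attempts: int = 3, backoff_seconds: float = 5.0) -> T:
    """Run a render job, logging every failure and retrying a limited number of times."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            result = job()
            logger.info("job %s succeeded on attempt %d", job_id, attempt)
            return result
        except Exception:
            # logger.exception records the traceback, which helps when reviewing reruns later.
            logger.exception("job %s failed on attempt %d/%d", job_id, attempt, max_attempts)
            if attempt == max_attempts:
                raise
            time.sleep(backoff_seconds * attempt)  # simple linear backoff
    raise RuntimeError("unreachable")  # keeps type checkers satisfied
```

A call might look like `run_with_retries(lambda: render_clip(brief), job_id="brief-042")`, where `render_clip` stands in for your own generation function. Counting the logged failures per week also gives you the failure rate you need when comparing stacks.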

Open source works best when privacy, deployment control, and long-term leverage matter more than instant simplicity. If you are willing to invest in setup, the payoff is a video workflow that belongs to you.

When a Proprietary AI Video Model Makes More Sense

Best-fit scenarios for managed platforms

Proprietary AI video models are usually the strongest choice when the job is simple: produce good work quickly, with minimal setup, and hit a deadline. If your team values speed to market, predictable uptime, vendor support, and a polished interface, managed platforms are hard to beat. This is especially true for agencies juggling client revisions, in-house marketers shipping paid campaigns, and creators who need reliable output more than technical flexibility.

The practical win is reduced operational drag. You avoid infrastructure work, patching, hosting, model packaging, and integration complexity. A hosted tool with templates, built-in editing, brand kits, and export presets can compress an entire workflow into a few clicks. For many teams, that alone justifies the higher price. The expensive option is often the cheaper option once internal technical time is counted honestly.

Secondary-source tool roundups also show why proprietary tools remain attractive for specific output types. Dreamina is noted for strong product videos and animated characters, which makes it relevant when you need visually polished showcase content or stylized character-led creative. Tagshop AI is frequently recommended for UGC-style ads and realistic AI ad workflows, especially in social and e-commerce contexts where believable creator-style content matters. These are not independent benchmark results, so they should be tested rather than accepted blindly, but they are useful directional signals.

How to justify the higher price with faster execution

The smartest way to justify a proprietary platform is to calculate execution speed, not subscription cost alone. Ask how many hours your team saves on setup, retries, editing, collaboration, and support. If a managed platform cuts production time in half and removes technical maintenance, the higher monthly bill can be the rational choice.
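That calculation can be made concrete by adding team time to the bill. The sketch below is only an illustration: `MonthlyCost` is a hypothetical helper, and the spend, hours, and hourly rate are invented numbers that you would replace with your own.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCost:
    tool_spend: float    # subscription or API fees, or GPU and storage spend
    labor_hours: float   # hours spent on setup, retries, editing, and maintenance
    hourly_rate: float   # loaded cost of one team hour

    @property
    def total(self) -> float:
        """Tool spend plus the cost of the time the team puts in."""
        return self.tool_spend + self.labor_hours * self.hourly_rate


# Hypothetical month: the hosted platform has the larger bill but needs fewer hours.
hosted = MonthlyCost(tool_spend=1200, labor_hours=20, hourly_rate=75)
self_hosted = MonthlyCost(tool_spend=400, labor_hours=45, hourly_rate=75)

print(f"hosted total:      {hosted.total:.0f}")
print(f"self-hosted total: {self_hosted.total:.0f}")
```

With these made-up inputs the hosted option comes out cheaper once labor is counted, even though its subscription is three times the infrastructure spend. The result flips as soon as the self-hosted labor hours drop, which is why the inputs matter more than the formula.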

A good fit usually looks like this:

- Campaign deadlines are tight

- Team members are not ML operators

- Reliability matters more than deep customization

- Video output must be client-ready quickly

- Internal engineering bandwidth is limited

- Legal review is easier through one vendor contract than through multiple open licenses

The open source vs proprietary AI video model decision often swings toward proprietary when the creative brief is straightforward and the organization cannot afford infrastructure friction. If you need campaign assets this week, not a customizable stack next quarter, hosted platforms are often the better tool.

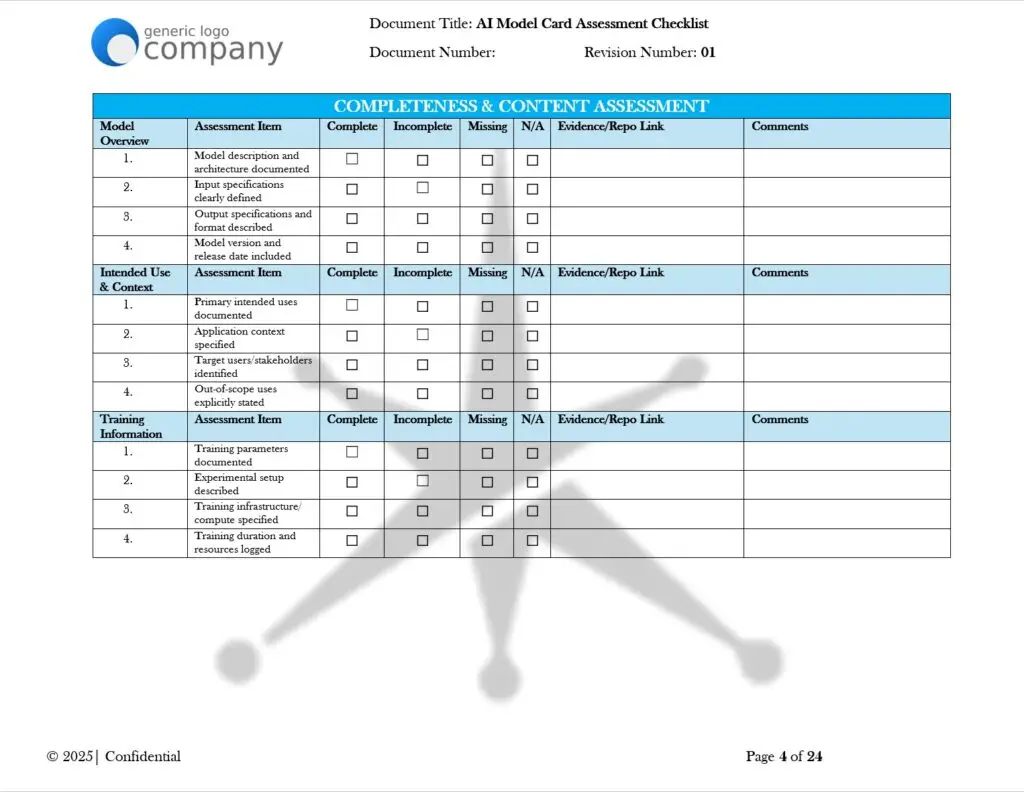

Open Source AI Model Licenses and Commercial Use: What to Check Before You Publish Video at Scale

Licenses that appear often in AI model releases

Licensing is where a lot of otherwise smart video teams get sloppy, and that is risky. For commercial work, license terms and IP exposure can matter more than benchmark quality. A model that performs beautifully is still a bad choice if the license, training data terms, or platform contract creates uncertainty around commercial use. That is why questions about commercial use under open-source AI model licenses should be part of the first review, not the last one.

The license families you will encounter most often are MIT, Apache 2.0, GPL/LGPL, and custom or modified AI-specific licenses. MIT is short and highly permissive, which makes it attractive for commercial experimentation. Apache 2.0 is also business-friendly and includes an explicit patent grant that many companies prefer. GPL and some LGPL scenarios can trigger obligations around redistribution or linked components, so they need closer review before you embed anything in a commercial product or customer-facing workflow. Custom AI licenses are where you have to slow down. Some look open at a glance but restrict commercial use, redistribution, model serving, or derivative deployment.

With AI video, you also need to separate three legal layers: the model license, the training data terms if disclosed, and the platform or hosting contract. A permissive model license does not automatically remove risk if the dataset provenance is unclear or if the hosted service contract limits your output usage. This is the kind of issue that shows up late, right when a customer asks for IP assurances.

A commercial-use review checklist for video teams

Before publishing videos at scale, run a formal review checklist:

- Does the model license explicitly allow commercial use?

- Are there restrictions on redistribution, serving, or derivative deployment?

- Is attribution required in product, documentation, or output?

- Are there training data provenance claims, disclaimers, or known gaps?

- Does the vendor offer indemnity, or are you carrying all risk?

- Do customer contracts require stronger IP assurances than the model provides?

- Are there content restrictions that affect ad, political, healthcare, or financial use?

- Is the license compatible with your deployment method, especially if you fine-tune or host privately?

- If you are combining components, are all licenses compatible with one another?

For open models, download and archive the exact license version used at deployment time. For hosted tools, save the terms of service and enterprise agreement that apply when content is produced. If your legal team asks later, “What rights did we have when this campaign shipped?” you want a clear answer.
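Archiving the license can be a few lines of scripting run at deployment time. The sketch below copies the license text into an archive folder and writes a JSON record with a SHA-256 hash and a UTC timestamp. The function name, file layout, and record fields are assumptions for illustration, not a legal standard.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def archive_license(license_path: Path, archive_dir: Path, model_name: str) -> Path:
    """Store a copy of the license plus a JSON record of what was archived and when."""
    content = license_path.read_bytes()
    digest = hashlib.sha256(content).hexdigest()
    # Model names often contain "/" (for example "org/model"), which is not safe in file names.
    safe_name = model_name.replace("/", "__")
    archive_dir.mkdir(parents=True, exist_ok=True)

    copy_path = archive_dir / f"{safe_name}-{digest[:12]}-LICENSE.txt"
    copy_path.write_bytes(content)

    record = {
        "model": model_name,
        "sha256": digest,
        "archived_copy": str(copy_path),
        "archived_at_utc": datetime.now(timezone.utc).isoformat(),
    }
    record_path = archive_dir / f"{safe_name}-{digest[:12]}.json"
    record_path.write_text(json.dumps(record, indent=2), encoding="utf-8")
    return record_path
```

Because the hash is part of the file name, a changed license produces a new pair of files instead of overwriting the old one. That keeps the version that applied when a given campaign shipped.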

This is one area where the open source vs proprietary AI video model decision can reverse fast. Open can be excellent when rights are clear. Proprietary can be safer when the vendor offers cleaner contracts and support. But neither side is automatically safe. You have to verify.

Best 2026 Decision Framework: Choose Between Open Source and Proprietary AI Video Models

Recommended paths for startups, agencies, and in-house teams

The clearest way to decide is by workflow type. Solo creators usually do best with whichever option gets publishable output fastest at a sustainable cost. That often means starting proprietary, then moving parts of the process to open tools later if volume increases. Startups building productized video features should lean toward open or hybrid stacks early, because deployment control, customization, and margin matter more over time. Agencies typically benefit from proprietary platforms first, since uptime, support, and client-ready polish beat infrastructure ownership during deadline-heavy work. E-commerce brands often split the difference: use proprietary tools for rapid UGC-style ad production and test open systems for repeatable product video pipelines. Enterprise teams should decide based on privacy requirements, procurement constraints, and legal review capacity; many will end up hybrid by default.

The strongest practical pattern in 2026 is not ideological. It is selective. Use managed tools where speed wins. Use open systems where recurring workflow leverage wins. That mindset keeps you from overpaying for convenience everywhere or overengineering customization where it is not needed.

A 30-day pilot plan to test before committing

Run a 30-day pilot with one proprietary tool and one open-source stack on the exact same brief. Do not compare them on abstract prompts. Compare them on work you actually need: product videos, UGC ads, animated character clips, image-to-video workflows, and branded short-form social content.

Week 1: define the brief, success criteria, and legal requirements. Choose one managed platform and one open stack with enough documentation to deploy quickly.

Week 2: produce the first batch of outputs. Measure quality, prompt consistency, render time, editing control, and failure rate.

Week 3: test revisions. Ask both systems to make the same changes: new product angle, alternate CTA, different visual pacing, character consistency, or tighter brand alignment.

Week 4: score both options using the same framework: quality, editing control, turnaround time, reliability, legal fit, deployment flexibility, and total operating cost.
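Two of the pilot measurements, failure rate and render time, are easy to compute from a simple job log. The sketch below assumes you record one entry per render attempt. The `RenderJob` fields and the system labels are hypothetical, and quality or editing control still need human scoring.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class RenderJob:
    system: str            # label for the tool under test, e.g. "hosted" or "open"
    succeeded: bool
    render_seconds: float  # wall-clock time for this attempt


def summarize(jobs: list[RenderJob]) -> dict[str, dict[str, float]]:
    """Return job count, failure rate, and median successful render time per system."""
    summary: dict[str, dict[str, float]] = {}
    for system in sorted({job.system for job in jobs}):
        runs = [job for job in jobs if job.system == system]
        ok_times = [job.render_seconds for job in runs if job.succeeded]
        summary[system] = {
            "jobs": len(runs),
            "failure_rate": 1 - len(ok_times) / len(runs),
            # NaN signals that no job succeeded, so there is no time to report.
            "median_render_seconds": median(ok_times) if ok_times else float("nan"),
        }
    return summary
```

Feeding both systems the same briefs keeps the comparison fair. The failure rate and median render time can then inform the reliability and turnaround ratings in the final scoring.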

By the end of the pilot, the shortlist usually becomes obvious. Choose proprietary when speed, support, and managed simplicity are the top priorities. Choose open-source when control, customization, privacy, and long-term leverage matter more. If neither fully wins, keep a hybrid stack and assign each tool to the job it handles best.

Conclusion

The best choice is the one that matches how your team actually makes video, not the one with the loudest hype cycle. If speed, polished UX, predictable uptime, and low operational burden matter most, proprietary platforms are usually worth the premium. If customization, privacy, deployment control, and long-term cost matter more, open systems are increasingly practical in 2026.

For most teams, the smartest move is to compare one hosted tool against one self-managed option on a real brief, then score the result honestly. Look at output quality, yes, but also look at editing control, turnaround speed, license clarity, privacy, and what it will cost to keep shipping every week. That is how you choose the right model with confidence: by optimizing for your workflow priorities over time, not just for the most impressive demo clip today.