RunPod vs Lambda for AI Video Generation: Which GPU Cloud Fits Your Workflow?

If you want faster, cheaper AI video generation in the cloud, the real choice between RunPod and Lambda comes down to your workflow: serverless experimentation or steady, reliable GPU time. That distinction matters a lot once you start pushing real video jobs instead of just generating a couple of stills. A quick AnimateDiff test, a ComfyUI demo endpoint, an overnight batch render, and a repeatable image-to-video pipeline all stress cloud GPUs in different ways.

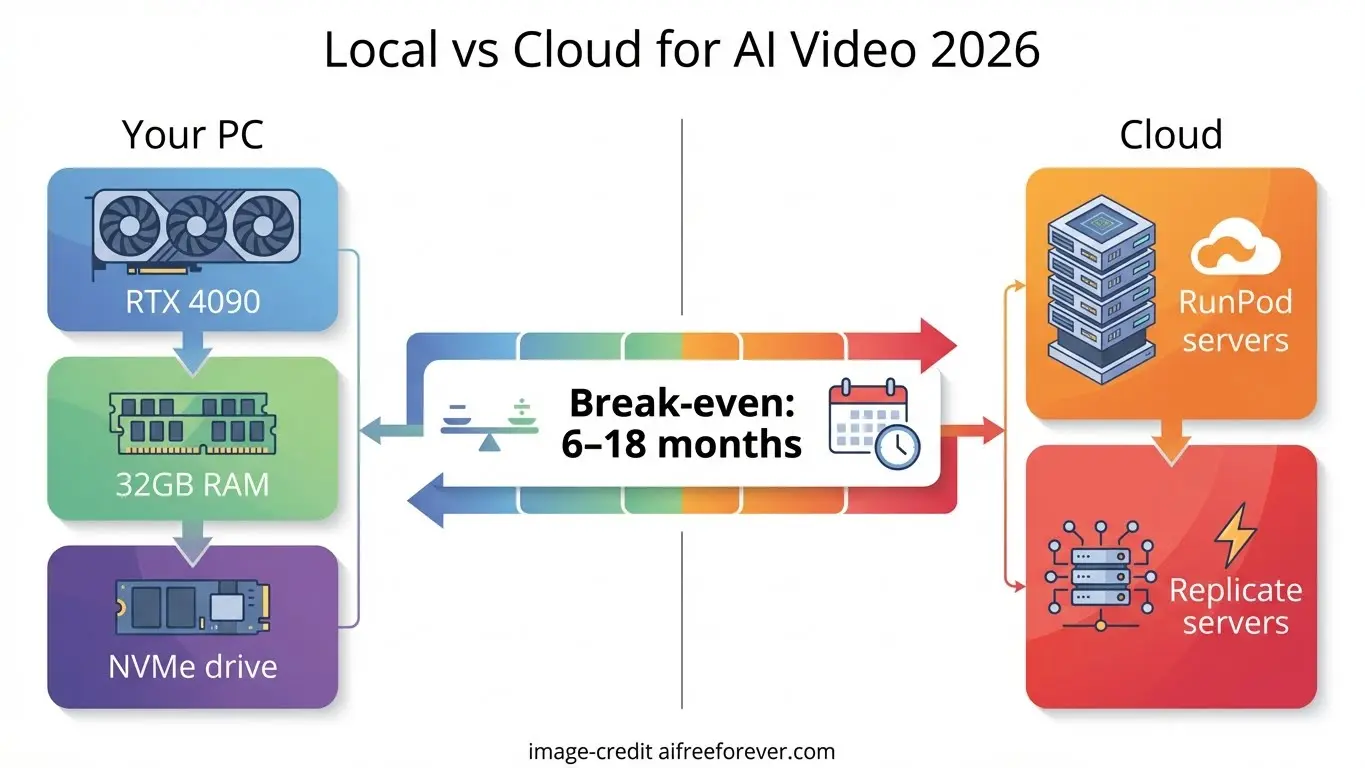

For most of us building with open models, the hard part is not just finding a GPU. It is finding the billing model, deployment style, and reliability level that match how we actually work. If you are testing an open-source AI video generation model, wiring up node graphs in ComfyUI, or deciding whether to run an AI video model locally or rent cloud GPUs, the better platform is the one that wastes the least time and money between prompts.

RunPod vs Lambda AI Video: Quick Answer for Different Use Cases

Choose RunPod for bursty experiments and ComfyUI deployments

RunPod is the better fit when your AI video workflow is irregular, experimental, or deployment-heavy. Its core appeal is flexibility: container-based Pods for hands-on sessions and serverless GPU functions for inference-style workloads. Published comparisons consistently frame RunPod as the more flexible developer platform, and Northflank’s 13 Aug 2025 comparison even highlights RunPod for serverless AI workflows with zero cold starts. That matters when you are spinning up ComfyUI, testing a workflow, then shutting everything down again, though it is worth measuring startup time with your own container and checkpoints before relying on it.

If your pattern looks like this (launch a GPU, test AnimateDiff settings, tweak motion LoRAs, compare schedulers, export a few clips, stop the machine), RunPod usually feels more natural. It is especially attractive if you want to expose a ComfyUI workflow as a lightweight demo or build a small internal tool around video generation. There are already tutorial resources specifically focused on hosting ComfyUI video generation on RunPod Serverless Endpoints, which is a strong practical signal that people are using it exactly this way.

Choose Lambda for steadier long-running GPU sessions

Lambda fits better when you need a more traditional rented GPU box and want fewer surprises. Comparisons describe Lambda Labs as closer to standard cloud GPU instances than to a serverless-first platform, and Northflank frames it as reliable compute backed by a decade-long AI focus. That positioning lines up well with longer sessions where an interruption costs more than a slightly less flexible setup.

A fast decision framework helps. If your work is sporadic testing, demos, prompt iteration, or scale-to-zero usage, lean RunPod. If your work is continuous rendering, long image sequence generation, training, or all-night runs, lean Lambda. Community discussion also often groups RunPod and Lambda together as two of the cheaper GPU options for training or fine-tuning compared with larger clouds, so this is less about “cheap versus expensive” and more about how you pay and how you work.

One more thing changes the answer: what you mean by AI video generation. AnimateDiff is text-to-video, while Stable Video Diffusion (SVD) is image-to-video. SVD is driven by a source image rather than a text prompt, which makes its workflow very different. If you are doing exploratory text-driven prompting, RunPod’s flexible ComfyUI workflows can be a great match. If you are executing a repeatable image-to-video batch with stable settings and long runtimes, Lambda’s steadier instance model may be the cleaner choice. That is the heart of the RunPod versus Lambda decision for AI video: flexibility versus dependable session time.

RunPod vs Lambda AI Video Pricing: What You Actually Pay For

Per-second vs per-minute billing

The biggest pricing difference is simple but easy to misread. RunPod bills Pods per second, while Lambda charges per minute. Per-second billing sounds automatically cheaper, but it only helps if you are disciplined about stopping resources: RunPod Pods still cost money while idle, and only serverless can scale to zero. That one detail decides whether RunPod saves you money or quietly leaks budget all week.

For short sessions, per-second billing is excellent. If you spend 12 minutes loading a ComfyUI graph, testing two prompts, and exporting one clip, you pay for the seconds you used rather than a rounded-up block. The saving on any single session is small, less than a minute of GPU time against per-minute billing, but if your job pattern is dozens of short tests throughout the week, it adds up. It is one reason the RunPod versus Lambda question keeps coming up among people running experiments rather than continuous jobs.

Lambda’s per-minute billing is easier to budget once a session is already long. If you are going to keep a machine alive for several hours, minute-level billing becomes less important than overall hourly economics and machine stability. In practice, that means Lambda is often less mentally taxing for overnight runs, long training sessions, and multi-hour rendering blocks.
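To make the granularity difference concrete, here is a minimal sketch of the arithmetic. The hourly rate is a hypothetical placeholder, not a quoted price from either provider, and the sketch assumes usage is rounded up to the next billing unit, which you should confirm against each provider's current billing documentation.

```python
import math

def billed_cost(duration_s: float, hourly_rate: float, granularity_s: int) -> float:
    """Cost of one session when usage is rounded up to the billing granularity.

    granularity_s=1 models per-second billing, granularity_s=60 per-minute.
    Rounding up is an assumption of this sketch.
    """
    billed_units = math.ceil(duration_s / granularity_s)
    billed_seconds = billed_units * granularity_s
    return billed_seconds * hourly_rate / 3600

# Hypothetical rate in USD per GPU-hour, held equal so only granularity differs.
RATE = 2.00

session_s = 12 * 60 + 20  # a 12 min 20 s ComfyUI test

per_second = billed_cost(session_s, RATE, granularity_s=1)
per_minute = billed_cost(session_s, RATE, granularity_s=60)

print(f"per-second billing: ${per_second:.4f}")
print(f"per-minute billing: ${per_minute:.4f}")
print(f"difference per session: ${per_minute - per_second:.4f}")
```

With equal hourly rates the gap per session is a few cents at most, so in practice the hourly price and how long the machine sits idle dominate the bill far more than the billing increment does.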

When serverless scale-to-zero saves money

The real money saver on RunPod is not just per-second Pods. It is serverless scale-to-zero. If you deploy a ComfyUI-backed inference workflow or an internal endpoint that only gets hit occasionally, scale-to-zero prevents paying for idle GPU time between requests. That is a very different financial model from leaving a standard instance running “just in case.”
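The trade-off between an always-on instance and a scale-to-zero endpoint can be sketched in a few lines. Every number below is a hypothetical placeholder (rates, request counts, and seconds per request are all assumptions for illustration), and the sketch assumes serverless bills only for seconds spent handling requests.

```python
def always_on_weekly_cost(hourly_rate: float, hours_on_per_day: float, days: int = 7) -> float:
    """Cost of an instance that runs whether or not it is doing work."""
    return hourly_rate * hours_on_per_day * days

def scale_to_zero_weekly_cost(per_second_rate: float, requests_per_day: int,
                              seconds_per_request: float, days: int = 7) -> float:
    """Cost of an endpoint billed only while it handles requests (assumption)."""
    billed_seconds = requests_per_day * seconds_per_request * days
    return billed_seconds * per_second_rate

def break_even_busy_fraction(hourly_rate: float, per_second_rate: float) -> float:
    """Share of the time the GPU must be busy before always-on becomes cheaper."""
    return hourly_rate / (per_second_rate * 3600)

# Hypothetical prices: serverless usually costs more per second than a plain instance.
INSTANCE_RATE = 2.00       # USD per hour
SERVERLESS_RATE = 0.0008   # USD per second (2.88 USD per busy hour)

instance_cost = always_on_weekly_cost(INSTANCE_RATE, hours_on_per_day=8)
serverless_cost = scale_to_zero_weekly_cost(SERVERLESS_RATE, requests_per_day=40,
                                            seconds_per_request=90)
threshold = break_even_busy_fraction(INSTANCE_RATE, SERVERLESS_RATE)

print(f"always-on, 8 h/day: ${instance_cost:.2f} per week")
print(f"scale-to-zero, 40 requests/day: ${serverless_cost:.2f} per week")
print(f"always-on wins once the GPU is busy more than {threshold:.0%} of the time")
```

The break-even fraction is the useful number here: below it, idle time is what you are paying for on an always-on machine, and above it the higher per-second serverless rate starts to cost more than simply keeping an instance running.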

Here is a practical way to estimate cost by workflow:

- Short ComfyUI tests: RunPod wins if you start, test, and stop quickly. Even better if the workflow can be wrapped in serverless inference.

- Overnight renders: Lambda is easier to predict because the machine is intended to stay on continuously, and reliability matters more than shaving tiny billing increments.

- Repeated prompt iteration across the day: RunPod can be cheaper if sessions are bursty and you consistently tear down Pods or rely on serverless.

- Daily full-shift generation: Lambda often becomes easier to budget because your usage resembles a normal rented workstation.

Community discussion repeatedly treats both RunPod and Lambda as relatively cheap options for GPU-heavy work. One Reddit thread about training on a large dataset in PyTorch even described RunPod and Lambda Labs as the only two cheap options in that context. That does not mean they cost the same in practice. It means both sit in the value tier compared with bigger cloud providers.

A useful rule: if your GPU sits idle between creative decisions, RunPod serverless can save real money. If your GPU stays busy for hours at a time, Lambda’s traditional session model is often simpler. Before renting anything, map your week honestly: count how many sessions are under 30 minutes, how many are multi-hour, and how often you forget to shut machines down. That answer is more valuable than any generic pricing page.
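One way to do that mapping is to log a week of sessions and tally them. The sketch below is illustrative only: the `Session` fields, the 30-minute and two-hour thresholds, and the sample week are assumptions, not data from either platform.

```python
from dataclasses import dataclass

@dataclass
class Session:
    active_min: float        # minutes the GPU was doing useful work
    idle_min: float = 0.0    # minutes it kept running after you stopped working

def summarize(sessions: list[Session]) -> dict:
    """Tally short sessions, multi-hour sessions, and forgotten shutdowns."""
    total_min = sum(s.active_min + s.idle_min for s in sessions)
    idle_min = sum(s.idle_min for s in sessions)
    return {
        "short": sum(1 for s in sessions if s.active_min < 30),
        "multi_hour": sum(1 for s in sessions if s.active_min >= 120),
        "forgotten_shutdowns": sum(1 for s in sessions if s.idle_min > 0),
        "idle_share": idle_min / total_min if total_min else 0.0,
    }

# A made-up week: three quick tests, one long render, one overnight batch.
week = [
    Session(active_min=12),
    Session(active_min=25, idle_min=90),   # left running over lunch
    Session(active_min=240),
    Session(active_min=8),
    Session(active_min=480, idle_min=30),
]

summary = summarize(week)
print(summary)
```

A week dominated by `short` sessions and a high `idle_share` points toward serverless or strict teardown habits; a week dominated by `multi_hour` sessions points toward a traditional instance.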

Best Platform Setup for AI Video Generation Workflows

Text-to-video vs image-to-video matters

A lot of bad cloud decisions start before the GPU is rented. They start when “AI video generation” is treated like one workload. It is not. AnimateDiff is text-to-video. Stable Video Diffusion, or SVD, is image-to-video. Most importantly, SVD is not controlled through text. That means your workflow design, prompt loop, checkpoint selection, and runtime behavior can differ a lot depending on the model family.

If you are using AnimateDiff, you will probably spend more time iterating prompts, motion settings, model combinations, and node layouts. That makes a flexible environment more valuable. If you are using an open-source image-to-video model like SVD, your process may be more pipeline-driven: prepare source frames, set motion parameters, run generations, review consistency, repeat. That often favors a stable long-running session.

This distinction matters beyond these two tools. If you are testing happyhorse 1.0 (an open-source transformer video model), another open-source transformer video model, or a broader family of open-source AI video generation models, always confirm whether the control mode is text-first, image-first, or hybrid. That single detail determines whether your time will be spent in fast prompt loops or in structured batch jobs.

ComfyUI workflows and deployment options

ComfyUI is usually the stronger choice when you want configurable AI video workflows. Comparisons of the two interfaces repeatedly describe ComfyUI as more configurable and AUTOMATIC1111 as easier to set up. For video, that configurability matters because you are chaining motion modules, conditioning, frame interpolation, upscaling, control passes, and export logic that simpler interfaces often hide or limit.

That is where RunPod becomes especially attractive. There are specific tutorial resources for ComfyUI video workflows on RunPod Serverless Endpoints and broader RunPod ComfyUI setups, so the path is well worn. If your goal is to build a reusable node-based workflow, expose it as a service, or rapidly switch containers and environments, RunPod’s deployment style is a strong match.

Lambda can still run ComfyUI perfectly well, but the reason to choose it is different. You choose Lambda when the workflow is settled and the job needs dependable, uninterrupted compute. For example, once you have finalized a workflow for turning product stills into short motion clips using an open-source image-to-video model, Lambda makes sense as the execution layer for longer sessions.

A good structure by use case:

- Generating clips for exploration: RunPod Pod or serverless-backed ComfyUI workflow.

- Refining prompts and node graphs: RunPod, because fast startup and deployment flexibility matter more than long-session certainty.

- Building repeatable image-to-video pipelines: Lambda if the process is stable and runs for long blocks.

- Deciding whether to run an AI video model locally or in the cloud: Start on RunPod for cheap iteration, then move to Lambda if the workload proves continuous.

If licensing enters the picture, especially for client work, check each model’s terms before scaling. Whether an open-source model’s license permits commercial use is a separate question from cloud choice, but it affects whether your polished pipeline is actually usable for paid output. The smart sequence is model type first, license second, platform third.

RunPod vs Lambda AI Video Performance and Reliability

Where flexibility wins

RunPod’s advantage is not some myth about raw speed. It is the ability to shape the environment around the job. Container-based Pods make it easier to customize dependencies, and serverless GPU functions open up efficient inference patterns that are awkward on more traditional GPU rentals. When you are testing several video stacks, comparing forks, or deploying a custom ComfyUI graph, that flexibility can save hours of setup friction.

That is especially useful for rapid iteration. AI video jobs are rarely one-and-done. You load checkpoints, warm caches, regenerate clips, adjust motion strength, rerun frame windows, and swap graph components. A platform that lets you package exactly what you need and turn it off quickly has real practical value. For occasional use, RunPod’s flexibility can also reduce wasted spend because you do not need to keep a GPU parked all day.

Where reliability matters more than features

The tradeoff is reliability. Established providers are often viewed as having fewer reliability issues than platforms like RunPod, and Northflank explicitly frames Lambda Labs as the stronger option for reliable compute. That aligns with the broader market view of Lambda as a more traditional, steadier GPU cloud experience.

For AI video generation, reliability is not an abstract quality metric. It directly affects long renders, queued jobs, checkpoint loading, repeated frame generation, and any pipeline where interruption means wasted time. If a session drops halfway through a long clip batch, you lose more than compute minutes. You lose momentum, file state, and often the exact setup that produced the best result.

Lambda’s appeal is strongest when deadlines exist. If you are rendering client deliverables, producing a batch overnight, or running a workflow that takes a long time to initialize before actual generation starts, dependable session continuity matters more than having the fanciest deployment model. The less often you can tolerate restarting, the more attractive Lambda becomes.

That does not make RunPod unreliable by default. It means you should match the platform to your interruption tolerance. If the job is exploratory and can be restarted cheaply, RunPod’s flexibility often wins. If the job must finish without drama, Lambda is often the safer path.

A simple practical filter works well:

- Low tolerance for interruptions: choose Lambda.

- Need production-ready consistency: choose Lambda.

- High experimentation, low always-on usage: choose RunPod.

- Need serverless or demo deployment: choose RunPod.

When people compare RunPod and Lambda for AI video, this is usually the real issue hiding under the surface. Not “which is best” in general, but “how expensive is a failed session in my workflow?”
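That question can be given a rough number. The sketch below is a deliberately simple model, assuming a failed session costs you the GPU time to redo the lost work plus re-initialization, and optionally your own time; the rates and hours are hypothetical, and it says nothing about how often either platform actually fails.

```python
def failed_session_cost(gpu_rate: float, hours_lost: float, reinit_hours: float,
                        hourly_value_of_time: float = 0.0) -> float:
    """Rough cost of one interrupted session.

    hours_lost: work that must be regenerated after the interruption.
    reinit_hours: time spent reloading checkpoints and rebuilding the setup.
    hourly_value_of_time: what an hour of your own delay is worth (0 for hobby work).
    """
    rerun_hours = hours_lost + reinit_hours
    return rerun_hours * (gpu_rate + hourly_value_of_time)

GPU_RATE = 2.00  # hypothetical USD per GPU-hour

# Exploratory prompt test: little work lost, nobody waiting on it.
exploratory = failed_session_cost(GPU_RATE, hours_lost=0.2, reinit_hours=0.1)

# Overnight client batch: hours of renders lost and a deadline at stake.
client_batch = failed_session_cost(GPU_RATE, hours_lost=6.0, reinit_hours=0.5,
                                   hourly_value_of_time=40.0)

print(f"exploratory failure: ${exploratory:.2f}")
print(f"client batch failure: ${client_batch:.2f}")
```

When the first figure describes your work, interruption tolerance is high and flexibility should drive the choice; when the second does, session stability is worth paying for.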

How to Choose RunPod or Lambda for Your AI Video Project

Best pick for indie creators

If you are an indie creator testing generative video tools, RunPod is usually the first platform I would point you to. The reason is practical, not theoretical. There are already multiple resources built around RunPod plus ComfyUI plus video generation, and serverless scale-to-zero is perfect when your usage is inconsistent. You can prototype a graph, run a burst of jobs, then stop paying when you are not creating.

That setup is strong for hobby tests, exploratory content creation, and model experimentation. If you are comparing AnimateDiff workflows, trying a new open-source transformer video model, or seeing whether happyhorse 1.0 is worth integrating, RunPod keeps the barrier low. You get flexibility without committing to always-on infrastructure.

The catch is reliability. If your process involves lots of retries anyway, that is usually acceptable. If your creative loop is mostly “test, refine, save, stop,” RunPod is a great fit.

Best pick for freelancers and client work

For freelance motion design, editing, or any client-facing production, Lambda is often the safer default. Published comparisons consistently frame Lambda as dependable compute with a more traditional cloud instance model. That matters when a delay costs you revision time, delivery confidence, or actual money.

If the workflow is settled and paid work depends on it, fewer surprises matter more than deployment flexibility. A stable machine for longer runs is simply easier to trust. That is why Lambda often fits freelancers who already know their stack and just need it to work repeatedly.

Use this scenario chooser:

- Hobby tests and casual experiments: RunPod

- Indie content creation with irregular sessions: RunPod

- Client delivery with deadlines: Lambda

- Model experimentation and ComfyUI graph building: RunPod

- Long-form rendering or overnight generation: Lambda

And use this checklist before deciding:

- Budget sensitivity: If you need strict pay-for-use efficiency, RunPod serverless is powerful.

- Uptime needs: If interruptions are costly, Lambda wins.

- Preferred interface: If you want configurable ComfyUI deployments, RunPod is very appealing.

- Job duration: Short burst jobs favor RunPod; long continuous jobs favor Lambda.

- Need serverless deployment: That points strongly to RunPod.

A useful tie-breaker is frequency. If your workflow runs a few times a week for short sessions, RunPod makes a lot of sense. If it runs daily for hours, Lambda usually starts to feel more natural. For many people, the best answer is actually both: RunPod for experimentation, Lambda for execution once the workflow is locked.

Recommended RunPod vs Lambda AI Video Workflows and Final Buying Tips

Best workflow patterns to start with

The cleanest way to start is to match platform to session shape instead of trying to crown one winner for everything.

RunPod serverless works best for ComfyUI-based demos, lightweight endpoints, and burst workloads. If you want to expose a video generation flow to a team, test prompt-driven clips on demand, or avoid paying for idle GPUs, serverless scale-to-zero is the standout feature. Northflank’s comparison specifically calls out RunPod for serverless AI workflows with zero cold starts; if that holds for your container and model size, it is exactly the behavior you want for sporadic inference.

RunPod Pods are the next step up for hands-on experimentation. Use a Pod when you want a flexible containerized environment, full control over dependencies, and the ability to tinker deeply with node graphs, checkpoints, motion modules, and custom repos. This is the sweet spot for ComfyUI-heavy exploratory work, especially when you are not yet sure whether the project should end up as text-to-video or image-to-video.

Lambda instances are the better starting point for long continuous generation runs. If your workflow already works and now needs uninterrupted GPU time for hours, Lambda’s traditional instance model is easier to plan around. This is where overnight clip generation, repeatable image-to-video production, and client-ready rendering pipelines fit best.

Mistakes to avoid before renting GPUs

The most common mistake is choosing a platform before deciding what kind of video workload you actually have. AnimateDiff and SVD are not interchangeable. AnimateDiff is text-to-video. SVD is image-to-video and cannot be controlled through text. If you skip that distinction, you will often optimize for the wrong thing.

The next mistake is ignoring billing behavior. RunPod per-second billing sounds efficient, but idle Pods still cost money. If you are absent-minded about shutting sessions down, those savings disappear. If you want the real cost advantage on RunPod, use serverless where possible or build a strict habit of tearing down Pods immediately.

Another expensive mistake is assuming every open model has the same control options or commercial rights. Before scaling a workflow around an open-source AI video generation model, verify whether it is text-driven, image-driven, or hybrid, then verify that its license permits commercial use. That check matters more than small differences in GPU pricing if the project might become paid work.

A strong decision ladder looks like this:

- Need the lowest-cost path for sporadic ComfyUI video tests? Start with RunPod serverless or a short-lived Pod.

- Need a flexible sandbox for model and workflow experimentation? Choose RunPod.

- Need stable long sessions for render-heavy jobs? Choose Lambda.

- Need dependable compute for clients or deadlines? Choose Lambda first.

- Still unsure? Prototype on RunPod, then move proven workflows to Lambda if session length and reliability become the bottleneck.
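The ladder above can be written down as a small function, which is a handy way to force yourself to answer each question explicitly. This is only a sketch: the `Workload` fields, the 30-minute and two-hour thresholds, and the order in which the rules are checked are assumptions chosen for illustration, not rules published by either provider.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    typical_session_hours: float = 0.5
    workflow_settled: bool = False      # is the node graph and model choice final?
    deadline_or_client: bool = False    # would an interruption cost real money?
    needs_serverless: bool = False      # demo endpoint or scale-to-zero required?

def recommend(w: Workload) -> str:
    """Walk the decision ladder from the strictest constraint to the loosest."""
    if w.deadline_or_client:
        return "Lambda"
    if w.needs_serverless:
        return "RunPod serverless"
    if w.workflow_settled and w.typical_session_hours >= 2:
        return "Lambda"
    if w.typical_session_hours < 0.5:
        return "RunPod serverless or short-lived Pod"
    # Unsure or still experimenting: prototype on a Pod, revisit once usage is clear.
    return "RunPod Pod"

print(recommend(Workload(typical_session_hours=0.2)))
print(recommend(Workload(typical_session_hours=8, workflow_settled=True)))
print(recommend(Workload(deadline_or_client=True)))
print(recommend(Workload(typical_session_hours=1.0)))
```

Checking deadlines first reflects the "Lambda first" advice for client work; if a serverless endpoint matters more to you than session stability, swap the first two rules.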

For most creators, that approach beats over-researching. Start with the workflow pattern, not the brand. If your jobs are bursty, deployment-focused, and ComfyUI-driven, RunPod usually gives you more leverage. If your jobs are long, repetitive, and interruption-sensitive, Lambda is usually the better home.

Conclusion

The simple verdict is this: choose RunPod when you want flexible, serverless-friendly AI video experimentation, especially for ComfyUI deployments and bursty workflows. Choose Lambda when you want a more traditional GPU rental with steadier, dependable sessions for long renders, repeatable pipelines, and client-facing work.

If your week is full of testing prompts, swapping nodes, and turning GPUs off between ideas, RunPod is usually the sharper tool. If your week is full of all-night generation runs and deadlines where session stability matters more than deployment tricks, Lambda is usually the safer bet.

That is the real answer to the RunPod versus Lambda question for AI video. Pick the one that matches how you actually generate video, not the one that sounds best on a comparison chart.